
G22-3033-002, Fall 2004:

Machine Learning and Pattern Recognition


Graduate course on machine learning, pattern recognition, neural nets, and statistical modeling.

Instructor: Yann LeCun, 715 Broadway, Room 1220, x83283, yann [ a t ] cs.nyu.edu (note: new room number).

Teaching Assistant: Sumit Chopra, Warren Weaver Hall, Room 1106, x8-3242, sumit [ a t ] cs.nyu.edu.

Classes: Tuesdays 5:00-6:50 PM, Room 102, Warren Weaver Hall.

Office Hours for Prof. LeCun: Wednesdays 2:00-4:00 PM.

Office Hours for the TA: Wednesdays 5:00-7:00 PM.

This course will be an updated version of G22-3033-014 taught in Spring 2004. This course will cover a wide variety of topics in machine learning, pattern recognition, statistical modeling, and neural computation. The course will cover the mathematical methods and theoretical aspects, but will primarily focus on algorithmic and practical issues. Machine Learning and Pattern Recognition methods are at the core of many recent advances in "intelligent computing". Current applications include machine perception (vision, audition, speech recognition), control (process control, robotics), data mining, time-series prediction (e.g. in finance), natural language processing, text mining and text classification, bio-informatics, neural modeling, computational models of biological processes, and many other areas.

This course can be useful to all graduate students who want to use or develop statistical modeling methods. This includes students in CS (AI, Vision, Graphics), Math (System Modeling), CNS (Computational Neuroscience, Brain Imaging), Finance (Financial modeling and prediction), Psychology (Vision, Linguistics), Biology (Computational Biology, Genomics, Bio-informatics), and Medicine (Bio-Statistics, Epidemiology).

The topics studied in the course include:

This course will be a (much updated) re-run of G22.3033.014 taught in Spring 2004. Please visit the site of the Spring 2004 edition of this course to have a look at the schedule and source material.

The best way (some would say the only way) to understand an algorithm is to implement it and apply it. Building working systems is also a lot more fun, more creative, and more relevant than taking formal exams. Therefore students will be evaluated primarily (almost exclusively) on programming projects assigned on a two-week cycle, and on a final project.

Linear algebra, vector calculus, elementary statistics and probability theory. Good programming ability is a must: most assignments will consist of implementing algorithms studied in class. The course will include a short tutorial on the Lush language, a simple interpreted language for numerical applications. Lush can be downloaded and installed on Linux, Mac, and Windows (under Cygwin). See Chris Poultney's notes on installing Lush under Cygwin. Lush is available on the CIMS Sun workstations available for student use. Programming projects may be implemented in any language (C, C++, Java, Matlab, Lisp, Python,...), but the use of a high-level interpreted language with good numerical support and good support for vector/matrix algebra is highly recommended (Lush, Matlab, Octave...). Some assignments require the use of an object-oriented language. Also, for most assignments, a code skeleton in Lush will be provided.

Register for the course's mailing list.

Richard O. Duda, Peter E. Hart, David G. Stork: "Pattern Classification", Wiley-Interscience, 2nd edition, October 2000. I will not follow this book very closely. In particular, much of the material covered in the second half of the course cannot be found in the above book. I will refer to research papers and lecture notes for those topics. Either one of the following books is also recommended, but not absolutely required (you can get a copy from the library):

Other Books of Interest

Code

Lush is installed on the department's PCs. It will soon be available on the Sun network as well.

Papers

Some of those papers are available in the DjVu format. The viewer/plugins for Windows, Linux, Mac, and various Unix flavors are available here.

Publications, Journals

Conference Sites

Datasets

Demo of Convolutional Nets for handwriting recognition. More demos are available here.

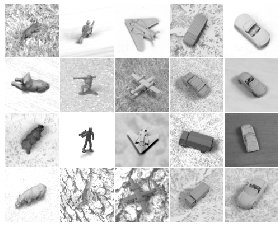

Object Recognition with Convolutional Neural Nets
