Determining the next lexeme often requires reading the input beyond the end of that lexeme. For example, to determine the end of an identifier normally requires reading the first whitespace character after it. Also just reading > does not determine the lexeme as it could also be >=. When you determine the current lexeme, the characters you read beyond it may need to be read again to determine the next lexeme.

The book illustrates the standard programming technique of using two (sizable) buffers to solve this problem.

A useful programming improvement to combine testing for the end of a buffer with determining the character read.

The chapter turns formal and, in some sense, the course begins.

The book is fairly careful about finite vs infinite sets and also uses

(without a definition!) the notion of a countable set.

(A countable set is either a finite set or one whose elements can be

put into one to one correspondence with the positive integers.

That is, it is a set whose elements can be counted.

The set of rational numbers, i.e., fractions in lowest terms, is

countable;

the set of real numbers is uncountable, because it is strictly

bigger, i.e., it cannot be counted.)

We should be careful to distinguish the empty set φ from the

empty string ε.

Formal language theory is a beautiful subject, but I shall suppress

my urge to do it right

and try to go easy on the formalism.

We will need a bunch of definitions.

Definition: An alphabet is a finite set of symbols.

Example: {0,1}, presumably φ (uninteresting), ascii, unicode, ebcdic, latin-1.

Definition: A string over an alphabet is a finite sequence of symbols from that alphabet. Strings are often called words or sentences.

Example: Strings over {0,1}: ε, 0, 1, 111010. Strings over ascii: ε, sysy, the string consisting of 3 blanks.

Definition: The length of a string is the number of symbols (counting duplicates) in the string.

Example: The length of allan, written |allan|, is 5.

Definition: A language over an alphabet is a countable set of strings over the alphabet.

Example: All grammatical English sentences with five, eight, or twelve words is a language over ascii. It is also a language over unicode.

Definition: The concatenation of strings s and t is the string formed by appending the string t to s. It is written st.

Example: εs = sε = s for any string s.

We view concatenation as a product (see Monoid in wikipedia http://en.wikipedia.org/wiki/Monoid). It is thus natural to define s0=ε and si+1=sis.

Example: s1=s, s4=ssss.

A prefix of a string is a portion starting from the beginning and a suffix is a portion ending at the end. More formally,

Definitions: A prefix of s is any string obtained from s by removing (possibly zero) characters from the end of s.

A suffix is defined analogously and a substring of s is obtained by deleting a prefix and a suffix.

Example: If s is 123abc, then

(1) s itself and ε are each a prefix, suffix, and a substring.

(2) 12 are 123a are prefixes.

(3) 3abc is a suffix.

(4) 23a is a substring.

Definitions: A proper prefix of s is a prefix of s other than ε and s itself. Similarly, proper suffixes and proper substrings of s do not include ε and s.

Definition: A subsequence of s is formed by deleting (possibly) positions from s. We say positions rather than characters since s may for example contain 5 occurrences of the character Q and we only want to delete a certain 3 of them.

Example: issssii is a subsequence of Mississippi.

Homework: 3.1b, 3.5 (c and e are optional).

Definition: The union of L1 and L2 is simply the set-theoretic union, i.e., it consists of all words (strings) in either L1 or L2.

Example: The union of {Grammatical English sentences with one, three, or five words} with {Grammatical English sentences with two or four words} is {Grammatical English sentences with five or fewer words}.

Definition: The concatenation of L1 and L2 is the set of all strings st, where s is a string of L1 and t is a string of L2.

We again view concatenation as a product and write LM for the concatenation of L and M.

Examples:: The concatenation of {a,b,c} and {1,2} is {a1,a2,b1,b2,c1,c2}. The concatenation of {a,b,c} and {1,2,ε} is {a1,a2,b1,b2,c1,c2,a,b,c}.

Definition: As with strings, it is natural to

define powers of a language L.

L0={ε}, which is not φ.

Li+1=LiL.

Definition: The (Kleene) closure of L,

denoted L* is

L0 ∪ L1 ∪ L2 ...

Definition: The positive closure of L,

denoted L+ is

L1 ∪ L2 ...

Example: {0,1,2,3,4,5,6,7,8,9}+ gives

all unsigned integers, but with some ugly versions.

It has 3, 03, 000003.

{0} ∪ ( {1,2,3,4,5,6,7,8,9} ({0,1,2,3,4,5,6,7,8,9,0}* ) )

seems better.

In these notes I may write * for * and + for +, but that is strictly speaking wrong and I will not do it on the board or on exams or on lab assignments.

Example: {a,b}* is

{ε,a,b,aa,ab,ba,bb,aaa,aab,aba,abb,baa,bab,bba,bbb,...}.

{a,b}+ is {a,b,aa,ab,ba,bb,aaa,aab,aba,abb,baa,bab,bba,bbb,...}.

{ε,a,b}* is {ε,a,b,aa,ab,ba,bb,...}.

{ε,a,b}+ is the same as {ε,a,b}*.

The book gives other examples based on L={letters} and D={digits}, which you should read..

The idea is that the regular expressions over an alphabet consist

of

ε, the alphabet, and expressions using union, concatenation, and *,

but it takes more words to say it right.

For example, I didn't include ().

Note that (A ∪ B)* is definitely not A* ∪ B* (* does not

distribute over ∪) so we

need the parentheses.

The book's definition includes many () and is more complicated than I think is necessary. However, it has the crucial advantages of being correct and precise.

The wikipedia entry doesn't seem to be as precise.

I will try a slightly different approach, but note again that there is nothing wrong with the book's approach (which appears in both first and second editions, essentially unchanged).

Definition: The regular expressions and associated languages over an alphabet consist of

Parentheses, if present, control the order of operations. Without parentheses the following precedence rules apply.

The postfix unary operator * has the highest precedence. The book mentions that it is left associative. (I don't see how a postfix unary operator can be right associative or how a prefix unary operator such as unary - could be left associative.)

Concatenation has the second highest precedence and is left associative.

| has the lowest precedence and is left associative.

The book gives various algebraic laws (e.g., associativity) concerning these operators.

The reason we don't include the positive closure is that for any RE

r+ = rr*.

Homework: 3.6 a and b.

These will look like the productions of a context free grammar we saw previously, but there are differences. Let Σ be an alphabet, then a regular definition is a sequence of definitions

d1 → r1

d2 → r2

...

dn → rn

where the d's are unique and not in Σ andNote that each di can depend on all the previous d's.

Example: C identifiers can be described by the following regular definition

letter_ → A | B | ... | Z | a | b | ... | z | _

digit → 0 | 1 | ... | 9

CId → letter_ ( letter_ | digit)*

Homework: 3.7 a,b (c is optional)

There are many extensions of the basic regular expressions given above. The following three will be frequently used in this course as they are particular useful for lexical analyzers as opposed to text editors or string oriented programming languages, which have more complicated regular expressions.

All three are simply shorthand. That is, the set of possible languages generated using the extensions is the same as the set of possible languages generated without using the extensions.

Examples:

C-language identifiers

letter_ → [A-Za-z_]

digit → [0-9]

CId → letter_ ( letter | digit ) *

Unsigned integer or floating point numbers

digit → [0-9] digits → digit+ number → digits (. digits)?(E[+-]? digits)?

Homework: 3.8 for the C language (you might need to read a C manual first to find out all the numerical constants in C), 3.10a.

Goal is to perform the lexical analysis needed for the following grammar.

stmt → if expr then stmt

| if expr then stmt else stmt

| ε

expr → term relop term // relop is relational operator =, >, etc

| term

term → id

| number

Recall that the terminals are the tokens, the nonterminals produce terminals.

A regular definition for the terminals is

digit → [0-9]

digits → digits+

number → digits (. digits)? (E[+-]? digits)?

letter → [A-Za-z]

id → letter ( letter | digit )*

if → if

then → then

else → else

relop → < | > | <= | >= | = | <>

| Lexeme | Token | Attribute |

|---|---|---|

| Whitespace | ws | — |

| if | if | — |

| then | then | — |

| else | else | — |

| An identifier | id | Pointer to table entry |

| A number | number | Pointer to table entry |

| < | relop | LT |

| <= | relop | LE |

| = | relop | EQ |

| <> | relop | NE |

| > | relop | GT |

| >= | relop | GE |

We also want the lexer to remove whitespace so we define a new token

ws → ( blank | tab | newline ) +where blank, tab, and newline are symbols used to represent the corresponding ascii characters.

Recall that the lexer will be called by the parser when the latter needs a new token. If the lexer then recognizes the token ws, it does not return it to the parser but instead goes on to recognize the next token, which is then returned. Note that you can't have two consecutive ws tokens in the input because, for a given token, the lexer will match the longest lexeme starting at the current position that yields this token. The table on the right summarizes the situation.

For the parser all the relational ops are to be treated the same so

they are all the same token, relop.

Naturally, other parts of the compiler will need to distinguish

between the various relational ops so that appropriate code is

generated.

Hence, they have distinct attribute values.

A transition diagram is similar to a flowchart for (a part of) the lexer. We draw one for each possible token. It shows the decisions that must be made based on the input seen. The two main components are circles representing states (think of them as decision points of the lexer) and arrows representing edges (think of them as the decisions made).

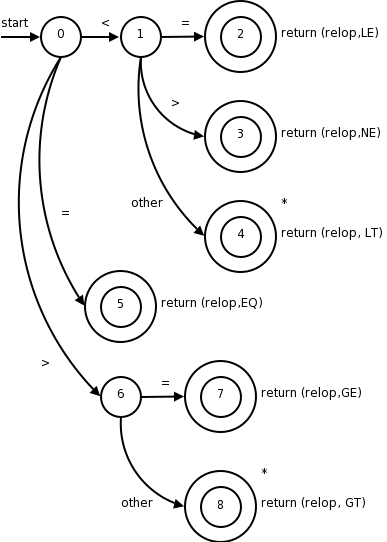

The transition diagram (3.12 in the 1st edition, 3.13 in the second) for relop is shown on the right.

too farin finding the token, one (or more) stars are drawn.

It is fairly clear how to write code corresponding to this diagram. You look at the first character, if it is <, you look at the next character. If that character is =, you return (relop,LE) to the parser. If instead that character is >, you return (relop,NE). If it is another character, return (relop,LT) and adjust the input buffer so that you will read this character again since you have used it for the current lexeme. If the first character was =, you return (relop,EQ).

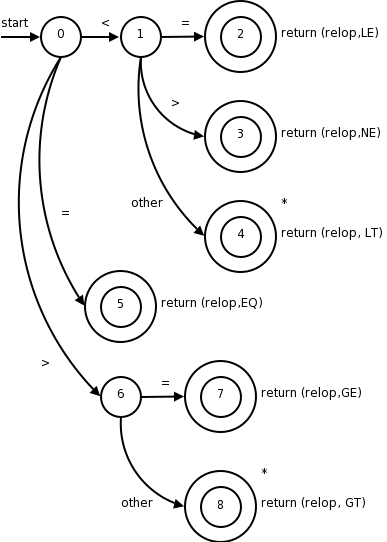

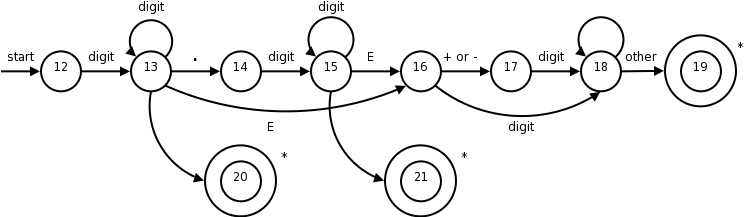

The transition diagram below corresponds to the regular definition given previously.

Note again the star affixed to the final state.

Two questions remain.

then, which also match the pattern in the transition diagram?

We will continue to assume that the keywords are reserved, i.e., may not be used as identifiers. (What if this is not the case—as in Pl/I, which had no reserved words? Then the lexer does not distinguish between keywords and identifiers and the parser must.)

We will use the method mentioned last chapter and have the keywords installed into the symbol table prior to any invocation of the lexer. The symbol table entry will indicate that the entry is a keyword.

installID() checks if the lexeme is already in the table. If it is not present, the lexeme is install as an id token. In either case a pointer to the entry is returned.

gettoken() examines the lexeme and returns the token name, either id or a name corresponding to a reserved keyword.

Both installID() and gettoken() access the buffer to obtain the lexeme of interest

The text also gives another method to distinguish between identifiers and keywords.

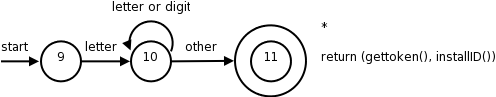

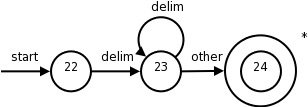

So far we have transition diagrams for identifiers (this diagram also handles keywords) and the relational operators. What remains are whitespace, and numbers, which are the simplest and most complicated diagrams seen so far.

The diagram itself is quite simple reflecting the simplicity of the corresponding regular expression.

delimin the diagram represents any of the whitespace characters, say space, tab, and newline.

The diagram below is from the second edition. It is essentially a combination of the three diagrams in the first edition.

This certainly looks formidable, but it is not that bad; it follows from the regular expression.

In class go over the regular expression and show the corresponding parts in the diagram.

When an accepting states is reached, action is required but is not shown on the diagram. Just as identifiers are stored in a symbol table and a pointer is returned, there is a corresponding number table in which numbers are stored. These numbers are needed when code is generated. Depending on the source language, we may wish to indicate in the table whether this is a real or integer. A similar, but more complicated, transition diagram could be produced if they language permitted complex numbers as well.

Homework: Write transition diagrams for the regular expressions in problems 3.6 a and b, 3.7 a and b.

The idea is that we write a piece of code for each decision diagram. I will show the one for relational operations below (from the 2nd edition). This piece of code contains a case for each state, which typically reads a character and then goes to the next case depending on the character read. The numbers in the circles are the names of the cases.

Accepting states often need to take some action and return to the parser. Many of these accepting states (the ones with stars) need to restore one character of input. This is called retract() in the code.

What should the code for a particular diagram do if at one state the character read is not one of those for which a next state has been defined? That is, what if the character read is not the label of any of the outgoing arcs? This means that we have failed to find the token corresponding to this diagram.

The code calls fail(). This is not an error case. It simply means that the current input does not match this particular token. So we need to go to the code section for another diagram after restoring the input pointer so that we start the next diagram at the point where this failing diagram started. If we have tried all the diagram, then we have a real failure and need to print an error message and perhaps try to repair the input.

Note that the order the diagrams are tried is important. If the input matches more than one token, the first one tried will be chosen.

TOKEN getRelop() // TOKEN has two components

TOKEN retToken = new(RELOP); // First component set here

while (true)

switch(state)

case 0: c = nextChar();

if (c == '<') state = 1;

else if (c == '=') state = 5;

else if (c == '>') state = 6;

else fail();

break;

case 1: ...

...

case 8: retract(); // an accepting state with a star

retToken.attribute = GT; // second component

return(retToken);

The description above corresponds to the one given in the first edition.

The newer edition gives two other methods for combining the multiple transition-diagrams (in addition to the one above).

The newer version, which we will use, is called flex, the f stands for fast. I checked and both lex and flex are on the cs machines. I will use the name lex for both.

Lex is itself a compiler that is used in the construction of other compilers (its output is the lexer for the other compiler). The lex language, i.e, the input language of the lex compiler, is described in the few sections. The compiler writer uses the lex language to specify the tokens of their language as well as the actions to take at each state.

Let us pretend I am writing a compiler for a language called pink. I produce a file, call it lex.l, that describes pink in a manner shown below. I then run the lex compiler (a normal program), giving it lex.l as input. The lex compiler output is always a file called lex.yy.c, a program written in C.

One of the procedures in lex.yy.c (call it pinkLex()) is the lexer itself, which reads a character input stream and produces a sequence of tokens. pinkLex() also sets a global value yylval that is shared with the parser. I then compile lex.yy.c together with a the parser (typically the output of lex's cousin yacc, a parser generator) to produce say pinkfront, which is an executable program that is the front end for my pink compiler.

The general form of a lex program like lex.l is

declarations %% translation rules %% auxiliary functions

The lex program for the example we have been working with follows (it is typed in straight from the book).

%{

/* definitions of manifest constants

LT, LE, EQ, NE, GT, GE,

IF, THEN, ELSE, ID, NUMBER, RELOP */

%}

/* regular definitions */

delim [ \t\n]

ws {delim}*

letter [A-Za-z]

digit [0-9]

id {letter}({letter}{digit})*

number {digit}+(\.{digit}+)?(E[+-]?{digit}+)?

%%

{ws} {/* no action and no return */}

if {return(IF);}

then {return(THEN);}

else {return(ELSE);}

{id} {yylval = (int) installID(); return(ID);}

{number} {yylval = (int) installNum(); return(NUMBER);}

"<" {yylval = LT; return(RELOP);}

"<=" {yylval = LE; return(RELOP);}

"=" {yylval = EQ; return(RELOP);}

"<>" {yylval = NE; return(RELOP);}

">" {yylval = GT; return(RELOP);}

">=" {yylval = GE; return(RELOP);}

%%

int installID() {/* function to install the lexeme, whose first character

is pointed to by yytext, and whose length is yyleng,

into the symbol table and return a pointer thereto */

}

int installNum() {/* similar to installID, but puts numerical constants

into a separate table */

The first, declaration, section includes variables and constants as well as the all-important regular definitions that define the building blocks of the target language, i.e., the language that the generated lexer will analyze.

The next, translation rules, section gives the patterns of the lexemes that the lexer will recognize and the actions to be performed upon recognition. Normally, these actions include returning a token name to the parser and often returning other information about the token via the shared variable yylval.

If a return is not specified the lexer continues executing and finds the next lexeme present.

Anything between %{ and %} is not processed by lex, but instead is copied directly to lex.yy.c. So we could have had statements like

#define LT 12 #define LE 13

The regular definitions are mostly self explanatory. When a definition is later used it is surrounded by {}. A backslash \ is used when a special symbol like * or . is to be used to stand for itself, e.g. if we wanted to match a literal star in the input for multiplication.

Each rule is fairly clear: when a lexeme is matched by the left, pattern, part of the rule, the right, action, part is executed. Note that the value returned is the name (an integer) of the corresponding token. For simple tokens like the one named IF, which correspond to only one lexeme, no further data need be sent to the parser. There are several relational operators so a specification of which lexeme matched RELOP is saved in yylval. For id's and numbers's, the lexeme is stored in a table by the install functions and a pointer to the entry is placed in yylval for future use.

Everything in the auxiliary function section is copied directly to lex.yy.c. Unlike declarations enclosed in %{ %}, however, auxiliary functions may be used in the actions

The first rule makes <= one instead of two lexemes.

The second rule makes if

a keyword and not an id.

Sorry.

Sometimes a sequence of characters is only considered a certain

lexeme if the sequence is followed by specified other sequences.

Here is a classic example.

Fortran, PL/I, and some other languages do not have reserved words.

In Fortran

IF(X)=3

is a legal assignment statement and the IF is an identifier.

However,

IF(X.LT.Y)X=Y

is an if/then statement and IF is a keyword.

Sometimes the lack of reserved words makes lexical disambiguation

impossible, however, in this case the slash / operator of lex is

sufficient to distinguish the two cases.

Consider

IF / \(.*\){letter}

This only matches IF when it is followed by a ( some text a ) and a letter. The only FORTRAN statements that match this are the if/then shown above; so we have found a lexeme that matches the if token. However, the lexeme is just the IF and not the rest of the pattern. The slash tells lex to put the rest back into the input and match it for the next and subsequent tokens.

Homework: 3.11.

Homework: Modify the lex program in section 3.5.2 so that: (1) the keyword while is recognized, (2) the comparison operators are those used in the C language, (3) the underscore is permitted as another letter (this problem is easy).

The secret weapon used by lex et al to convert (compile

) its

input into a lexer.

Finite automata are like the graphs we saw in transition diagrams but they simply decide if a sentence (input string) is in the language (generated by our regular expression). That is, they are recognizers of language.

There are two types of finite automata

executionis deterministic; hence the name.

lookaheadsymbol.

Surprising Theorem: Both DFAs and NFAs are capable of recognizing the same languages, the regular languages, i.e., the languages generated by regular expressions (plus the automata can recognize the empty language).

There are certainly NFAs that are not DFAs. But the language recognized by each such NFA can also be recognized by at least one DFA.

The DFA that recognizes the same language as an NFA might be significantly larger that the NFA.

The finite automaton that one constructs naturally from a regular expression is often an NFA.

Here is the formal definition.

A nondeterministic finite automata (NFA) consists of

An NFA is basically a flow chart like the transition diagrams we have already seen. Indeed an NFA (or a DFA, to be formally defined soon) can be represented by a transition graph whose nodes are states and whose edges are labeled with elements of Σ ∪ ε. The differences between a transition graph and our previous transition diagrams are:

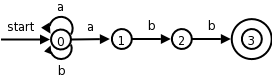

The transition graph to the right is an NFA for the regular expression (a|b)*abb, which (given the alphabet {a,b} represents all words ending in abb.

Consider aababb.

If you choose the wrong

edge for the initial a's you will get

stuck or not end at the accepting state.

But an NFA accepts a word if any path using the symbols in

the word ends at an accepting state.

It essentially tries all of them at once and accepts if any are

successful.

Patterns like (a|b)*abb are useful regular expressions! If the alphabet is ascii, consider *.java.

| State | a | b | ε |

|---|---|---|---|

| 0 | {0,1} | {0} | φ |

| 1 | φ | {2} | φ |

| 2 | φ | {3} | φ |

There is an equivalent way to represent an NFA, namely a table giving, for each state s and input symbol x (and ε), the set of successor states x leads to from s. The empty set φ is used when there is no edge labeled x emanating from s. The table on the right corresponds to the transition graph above.

The downside of these tables is their size, especially if most of the entries are φ since those entries would not take any space in a transition graph.

An NFA accepts a string if the symbols of the string specify a path from the start to an accepting state.

Again note that these symbols may specify several paths, some of which lead to accepting states and some that don't. In such a case the NFA does accept the string; one successful path is enough.

Also note that if an edge is labeled ε, then it can be

taken for free

.

For the transition graph above any string can just sit at state 0 since every possible symbol (namely a or b) can go from state 0 back to state 0. So every string can lead to a non-accepting state, but that is not important since if just one path with that string leads to an accepting state, the NFA accepts the string.

The language defined by an NFA or the language accepted by an NFA is the set of strings (a.k.a. words) accepted by the NFA.

So the NFA in the diagram above accepts the same language as the regular expression (a|b)*abb.

If A is an automaton (NFA or DFA) we use L(A) for the language accepted by A.

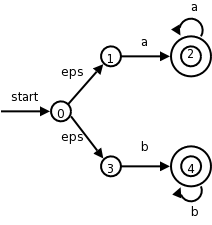

The diagram on the right illustrates an NFA accepting the language

L(aa*|bb*).

The path

0 → 3 → 4 → 4 → 4 → 4

shows that bbbb is accepted by the NFA.

Note how the ε that labels the edge 0 → 3 does not appear in the string bbbb since ε is the empty string.

There is something weird about an NFA if viewed as a model of computation. How is a computer of any realistic construction able to check out all the (possibly infinite number of) paths to determine if any terminate at an accepting state?

We now consider a much more realistic model, a DFA.

Definition: A deterministic finite automata or DFA is a special case of an NFA having the restrictions

This is realistic. We are at a state and examine the next character in the string, depending on the character we go to exactly one new state. Looks like a switch statement to me.

Minor point: when we write a transition table for a DFA, the entries are elements not sets so there are no {} present.

Indeed a DFA is so reasonable there is an obvious algorithm for simulating it (i.e., reading a string and deciding whether or not it is in the language accepted by the DFA). We present it now.

The second edition has switched to C syntax: = is assignment == is comparison. I am going to change to this notation since I strongly suspect that most of the class is much more familiar with C/C++/java/C# than with algol60/algol68/pascal/ada (the last is my personal favorite). As I revisit past sections of the notes to fix errors, I will change the examples from algol to C usage of =. I realize that this makes the notes incompatible with the edition you have, but hope and believe that this will not cause any serious problems.

s = s0; // start state. NOTE = is assignment c = nextChar(); // aprimingread while (c != eof) { s = move(s,c); c = nextChar(); } if (s is in F, the set of accepting states) returnyeselse returnno

This is not from the book.

Do not forget the goal of the chapter is to understand lexical analysis. We saw, when looking at Lex, that regular expressions are a key in this task. So we want to recognize regular expressions (say the ones representing tokens). We are going to see two methods.

So we need to learn 4 techniques.

The list I just gave is in the order the algorithms would be applied—but you would use either 2 or (3 and 4).

The two editions differ in the order the techniques are presented, but neither does it in the order I just gave. Indeed, we just did item #4.

I will follow the order of 2nd ed but give pointers to the first edition where they differ.