Homework solutions posted, give passwd.

TAs assigned

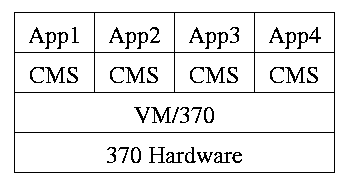

I must note that Tanenbaum is a big advocate of the so-called microkernel approach, in which as much as possible is moved out of the (supervisor mode) kernel into separate processes. The (hopefully small) portion left in supervisor mode is called a microkernel.

In the early 90s this was popular. Digital Unix (now called Tru64) and Windows NT/2000/XP are examples. Digital Unix is based on Mach, a research OS from Carnegie Mellon University. Lately, the growing popularity of Linux has called into question the belief that “all new operating systems will be microkernel based”.

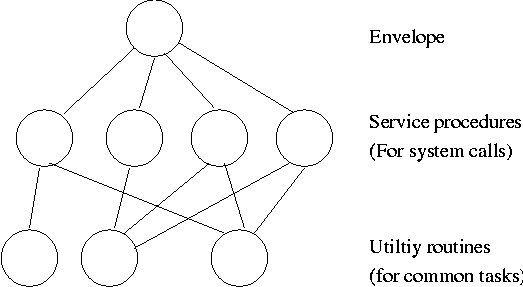

The previous picture: one big program

The system switches from user mode to kernel mode during the poof and then back when the OS does a “return” (an RTI or return from interrupt).

But of course we can structure the system better, which brings us to.

Some systems have more layers and are more strictly structured.

An early layered system was “THE” operating system by Dijkstra. The layers were.

The layering was done by convention, i.e., there was no enforcement by hardware and the entire OS was linked together as one program. This is true of many modern operating systems as well (e.g., Linux).

The Multics system was layered in a more formal manner. The hardware provided several protection layers and the OS used them. That is, arbitrary code could not jump to or access data in a more protected layer.

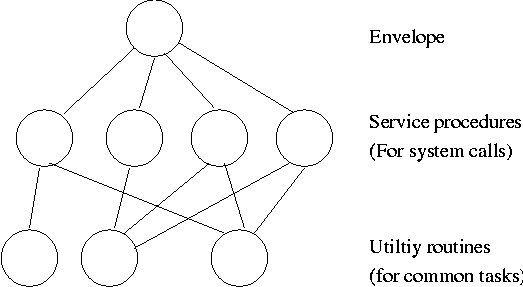

Use a “hypervisor” (beyond supervisor, i.e., beyond a normal OS) to switch between multiple operating systems. Made popular by IBM's VM/CMS.

Similar to VM/CMS but the virtual machines have disjoint resources (e.g., distinct disk blocks) so less remapping is needed.

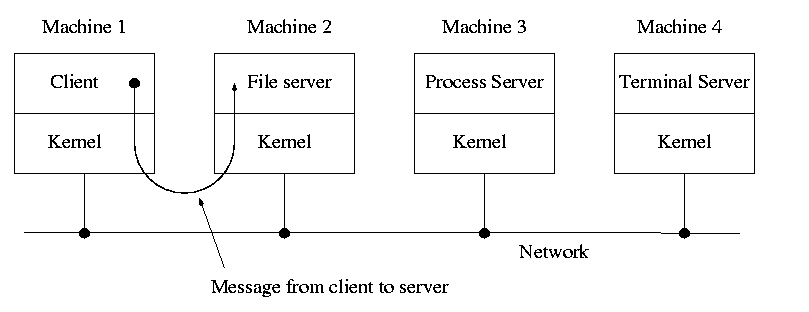

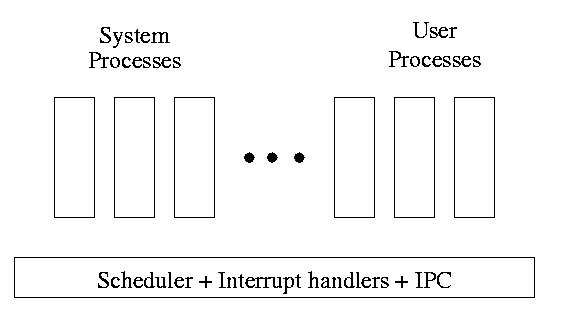

When implemented on one computer, a client-server OS uses the microkernel approach, in which the microkernel supplies just interprocess communication and the main OS functions are provided by a number of separate processes.

This does have advantages. For example an error in the file server cannot corrupt memory in the process server. This makes errors easier to track down.

But it does mean that when a (real) user process makes a system call there are more process switches. These are not free.

A distributed system can be thought of as an extension of the client-server concept where the servers are remote.

Homework: 23

Dennis Ritchie, the inventor of the C programming language and co-inventor, with Ken Thompson, of Unix, was interviewed in February 2003. The following is from that interview.

What's your opinion on microkernels vs. monolithic?

Dennis Ritchie: They're not all that different when you actually use them. "Micro" kernels tend to be pretty large these days, and "monolithic" kernels with loadable device drivers are taking up more of the advantages claimed for microkernels.

Tanenbaum's chapter title is “Processes and Threads”. I prefer to add the word management. The subject matter is processes, threads, scheduling, interrupt handling, and IPC (InterProcess Communication--and Coordination).

Definition: A process is a program in execution.

Even though in actuality there are many processes running at once, the OS gives each process the illusion that it is running alone.

Think of the individual modules that are input to the linker. Each numbers its addresses from zero; the linker eventually translates these relative addresses into absolute addresses. That is, the linker provides to the assembler a virtual memory in which addresses start at zero.
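As a rough illustration of the relative-to-absolute translation, here is a minimal sketch. The module names, sizes, and the `relocate` helper are hypothetical, and a real linker does far more (symbol resolution, for example); the sketch only shows how each module's zero-based addresses are shifted by the base address the module is assigned.

```python
def relocate(modules, load_address=0):
    """Assign each module a base address and make its relative addresses absolute.

    `modules` is a list of (name, size, relative_addresses) tuples.
    This tuple layout is an assumption made for the sketch.
    """
    base = load_address
    layout = {}
    for name, size, relative_addresses in modules:
        # Every module numbers its own addresses from zero,
        # so an absolute address is simply base + relative.
        absolute_addresses = [base + r for r in relative_addresses]
        layout[name] = (base, absolute_addresses)
        base += size  # the next module starts where this one ends
    return layout


# Two hypothetical modules, each referring to addresses counted from zero.
modules = [
    ("main", 100, [0, 40]),
    ("lib", 50, [0, 10]),
]
layout = relocate(modules)
print(layout)
```

Both modules use relative address 0, yet after relocation they refer to different absolute addresses because `lib` is placed after the 100 words of `main`.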

Virtual time and virtual memory are examples of abstractions provided by the operating system to the user processes so that the latter “sees” a more pleasant virtual machine than actually exists.

From the user's or external viewpoint there are several mechanisms for creating a process.

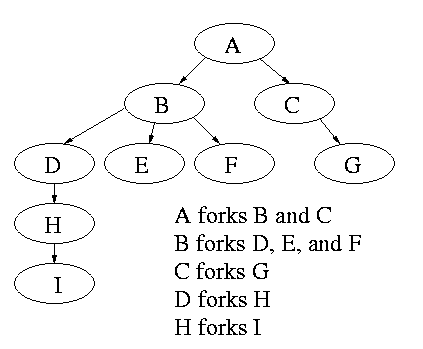

But looked at internally, from the system's viewpoint, the second method dominates. Indeed, in Unix only one process is created at system initialization (the process is called init); all the others are children of this first process.

Why have init? That is, why not have all processes created via method 2?

Ans: Because without init there would be no running process to create any others.
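To make the parent/child relationship concrete, here is a minimal sketch of a running process creating another one. It assumes a Unix-like system and uses Python's `os.fork` wrapper around the fork system call; the helper name `spawn_child` is hypothetical.

```python
import os


def spawn_child(exit_code):
    """Create a child process with fork and return the exit status the parent collects."""
    pid = os.fork()
    if pid == 0:
        # Child: fork returned 0 here. Terminate at once with the given code.
        os._exit(exit_code)
    # Parent: fork returned the child's pid. Wait for the child to finish.
    _, status = os.waitpid(pid, 0)
    return os.WEXITSTATUS(status)


if __name__ == "__main__":
    print(spawn_child(7))
```

Note that the call can only be made by a process that already exists, which is the point of the answer above: some first process has to be created without it.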

Many systems have daemon processes lurking around to perform tasks when they are needed. I was pretty sure the terminology was related to mythology, but didn't have a reference. Chirag Sadana, a student in 202 during 2003-04 spring, found the following reference.

daemon: /day'mn/ or /dee'mn/ n. [from the mythological meaning, later rationalized as the acronym `Disk And Execution MONitor'] A program that is not invoked explicitly, but lies dormant waiting for some condition(s) to occur. The idea is that the perpetrator of the condition need not be aware that a daemon is lurking (though often a program will commit an action only because it knows that it will implicitly invoke a daemon). For example, under {ITS}, writing a file on the LPT spooler's directory would invoke the spooling daemon, which would then print the file. The advantage is that programs wanting (in this example) files printed need neither compete for access to nor understand any idiosyncrasies of the LPT. They simply enter their implicit requests and let the daemon decide what to do with them. Daemons are usually spawned automatically by the system, and may either live forever or be regenerated at intervals. Daemon and demon are often used interchangeably, but seem to have distinct connotations. The term `daemon' was introduced to computing by CTSS people (who pronounced it /dee'mon/) and used it to refer to what ITS called a dragon; the prototype was a program called DAEMON that automatically made tape backups of the file system. Although the meaning and the pronunciation have drifted, we think this glossary reflects current (2000) usage.

I found this at “The {Searchable} Jargon Lexicon” at http://developer.syndetic.org/query_jargon.pl?term=demon

Again, from the outside there appear to be several termination mechanisms.

And again, internally the situation is simpler. In Unix terminology, two system calls are used: kill and exit. Kill (poorly named in my view) sends a signal to another process. If this signal is not caught (via the signal system call) the process is terminated. There is also an “uncatchable” signal. Exit is used for self termination and can indicate success or failure.
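The difference between a caught and an uncaught signal can be seen in a small experiment. This is a sketch assuming a Unix-like system; it uses Python's `os.kill` and `signal.signal` wrappers, SIGTERM as the example signal, and a hypothetical helper `kill_child`. The pipe is only there so the parent does not send the signal before the child is set up.

```python
import os
import signal
import time


def kill_child(catch):
    """Fork a child, send it SIGTERM, and report how the child ended."""
    ready_r, ready_w = os.pipe()
    pid = os.fork()
    if pid == 0:
        os.close(ready_r)
        if catch:
            # Catch the signal and turn it into a normal exit with code 0.
            signal.signal(signal.SIGTERM, lambda signum, frame: os._exit(0))
        else:
            # Keep the default action: the signal terminates the process.
            signal.signal(signal.SIGTERM, signal.SIG_DFL)
        os.write(ready_w, b"x")  # tell the parent the child is set up
        while True:
            time.sleep(1)  # idle until the signal arrives
    os.close(ready_w)
    os.read(ready_r, 1)  # wait until the child is ready
    os.close(ready_r)
    os.kill(pid, signal.SIGTERM)  # "kill" only sends a signal
    _, status = os.waitpid(pid, 0)
    if os.WIFSIGNALED(status):
        return ("signaled", os.WTERMSIG(status))
    return ("exited", os.WEXITSTATUS(status))


if __name__ == "__main__":
    print(kill_child(catch=False))
    print(kill_child(catch=True))
```

In the first case the parent sees a child terminated by the signal; in the second the child's handler runs and the child ends through an ordinary exit that indicates success.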

Modern general purpose operating systems permit a user to create and destroy processes.

Old or primitive operating systems like MS-DOS are not multiprogrammed, so when one process starts another, the first process is automatically blocked and waits until the second is finished.

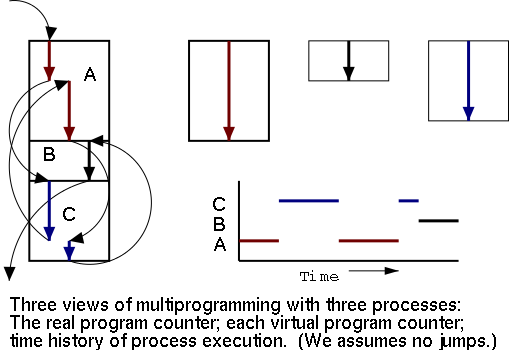

The diagram on the right contains much information.

Homework: 1.

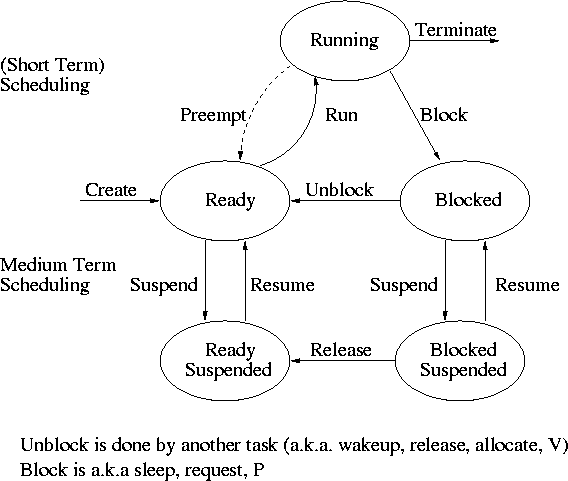

One can organize an OS around the scheduler.

The OS organizes the data about each process in a table naturally called the process table. Each entry in this table is called a process table entry (PTE) or process control block.
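A process table can be pictured as a collection of records keyed by process id. The sketch below is illustrative only: the field names, the three states, and the `create_process` helper are assumptions chosen for the example, not the layout of any particular OS.

```python
from dataclasses import dataclass, field
from enum import Enum


class State(Enum):
    RUNNING = "running"
    READY = "ready"
    BLOCKED = "blocked"


@dataclass
class ProcessTableEntry:
    """One entry per process; the fields here are a hypothetical subset."""
    pid: int
    parent_pid: int
    state: State = State.READY
    program_counter: int = 0
    registers: dict = field(default_factory=dict)
    open_files: list = field(default_factory=list)


# The process table itself: pid -> entry.
process_table = {}


def create_process(pid, parent_pid):
    """Add a new entry to the table and return it."""
    entry = ProcessTableEntry(pid=pid, parent_pid=parent_pid)
    process_table[pid] = entry
    return entry


create_process(1, 0)
create_process(2, 1)
process_table[2].state = State.BLOCKED
print(process_table[2])
```

Keeping saved registers and the program counter in the entry is what lets the OS stop a process and later resume it where it left off.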

In a well defined location in memory (specified by the hardware) the OS stores an interrupt vector, which contains the address of the (first level) interrupt handler.

Assume a process P is running and a disk interrupt occurs for the completion of a disk read previously issued by process Q, which is currently blocked. Note that disk interrupts are unlikely to be for the currently running process (because the process that initiated the disk access is likely blocked).
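The scenario can be mimicked with a toy model. Everything here is a simplification made for illustration: the interrupt number, the dictionary used as an interrupt vector, and the function names are hypothetical, and saving registers and scheduling are left out entirely.

```python
# Process states for the scenario: P is running, Q waits for its disk read.
states = {"P": "running", "Q": "blocked"}


def disk_handler(waiting_process):
    """Handler for a disk-completion interrupt: unblock the process that was waiting."""
    if states[waiting_process] == "blocked":
        states[waiting_process] = "ready"


# The interrupt vector maps an interrupt number to the address of its handler;
# here a dictionary of functions stands in for that table.
DISK_IRQ = 14  # hypothetical interrupt number
interrupt_vector = {DISK_IRQ: disk_handler}


def raise_interrupt(irq, waiting_process):
    """Look up the handler in the vector and run it, as the hardware dispatch would."""
    interrupt_vector[irq](waiting_process)


raise_interrupt(DISK_IRQ, "Q")
print(states)
```

In this sketch P simply keeps running after the handler returns; whether P or Q runs next is a decision for the scheduler, which the model does not include.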

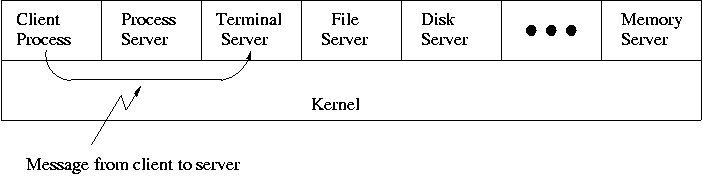

| Per process items | Per thread items |
|---|---|
| Address space | Program counter |
| Global variables | Machine registers |
| Open files | Stack |
| Child processes | |
| Pending alarms | |
| Signals and signal handlers | |
| Accounting information | |

The idea is to have separate threads of control (hence the name) running in the same address space. An address space is a memory management concept. For now think of an address space as the memory in which a process runs and the mapping from the virtual addresses (addresses in the program) to the physical addresses (addresses in the machine). Each thread is somewhat like a process (e.g., it is scheduled to run) but contains less state (e.g., the address space belongs to the process in which the thread runs).
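The split between per process and per thread items can be observed directly. The following sketch uses Python's `threading` module; the names `worker` and `run` are hypothetical. The global `counter` is shared by all threads in the process, while each thread's `local_sum` lives on that thread's own stack.

```python
import threading

counter = 0  # global variable: one copy, shared by every thread in the process
lock = threading.Lock()


def worker(n, results, index):
    global counter
    local_sum = 0  # local variable: private to this thread's stack
    for i in range(n):
        local_sum += i
        with lock:  # protect the shared variable from concurrent updates
            counter += 1
    results[index] = local_sum


def run(num_threads=4, n=1000):
    """Start the threads, wait for them, and return the shared and private results."""
    global counter
    counter = 0
    results = [None] * num_threads
    threads = [
        threading.Thread(target=worker, args=(n, results, i))
        for i in range(num_threads)
    ]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return counter, results


if __name__ == "__main__":
    print(run())
```

Every thread contributes to the single shared `counter`, but each computes its own `local_sum` independently of the others.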