================ Start Lecture #5 ================

2.4: Process Scheduling

Scheduling processes on the processor is often called ``process

scheduling'' or simply ``scheduling''.

The objectives of a good scheduling policy include

- Fairness.

- Efficiency.

- Low response time (important for interactive jobs).

- Low turnaround time (important for batch jobs).

- High throughput [the above are from Tanenbaum].

- Repeatability. Dartmouth (DTSS) ``wasted cycles'' and limited

logins for repeatability.

- Fair across projects.

- ``Cheating'' in unix by using multiple processes.

- TOPS-10.

- Fair share research project.

- Degrade gracefully under load.

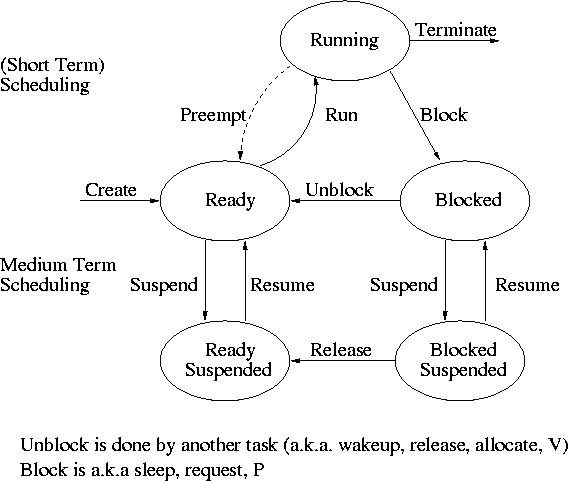

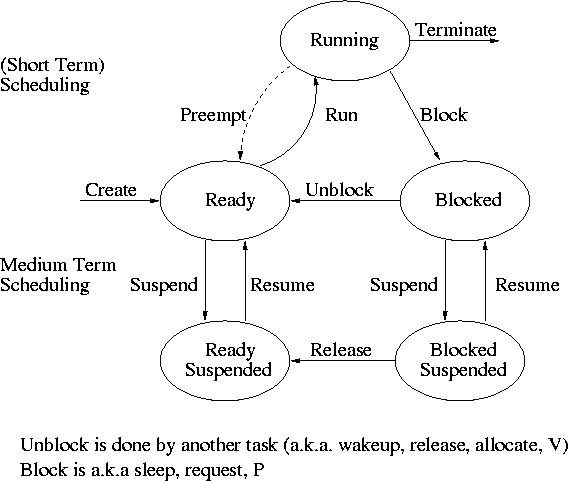

Recall the basic diagram describing process states

For now we are discussing short-term scheduling, i.e., the arcs

connecting running <--> ready.

Medium term scheduling is discussed later.

Preemption

It is important to distinguish preemptive from non-preemptive

scheduling algorithms.

- Preemption means the operating system moves a process from running

to ready without the process requesting it.

- Without preemption, the system implements ``run to completion (or

yield or block)''.

- This is the ``preempt'' arc in the diagram.

- We do not consider yield (a solid arrow from running to ready).

- Preemption needs a clock interrupt (or equivalent).

- Preemption is needed to guarantee fairness.

- Found in all modern general purpose operating systems.

- Even non-preemptive systems can be multiprogrammed (e.g., when processes block for I/O).

Deadline scheduling

This is used for real-time systems. The objective of the scheduler is to find a schedule for all the tasks (there is a fixed set of tasks) so that each meets its deadline. The run time of each task is known in advance.

Actually it is more complicated.

- Periodic tasks

- What if we can't schedule all tasks so that each meets its deadline (i.e., what should be the penalty function)?

- What if the run-time is not constant but has a known probability

distribution?

We do not cover deadline scheduling in this course.

The name game

There is an amazing inconsistency in naming the different (short-term) scheduling algorithms. Over the years I have used primarily four books; in chronological order they are Finkel, Deitel, Silberschatz, and Tanenbaum. The table just below illustrates the name game for these four books. After the table we discuss each scheduling policy in turn.

| Finkel | Deitel | Silberschatz | Tanenbaum | Notes |
|---|---|---|---|---|
| FCFS | FIFO | FCFS | -- | unnamed in Tanenbaum |
| RR | RR | RR | RR | |
| PS | ** | PS | PS | |
| SRR | ** | SRR | ** | not in Tanenbaum |
| SPN | SJF | SJF | SJF | |
| PSPN | SRT | PSJF/SRTF | -- | unnamed in Tanenbaum |
| HPRN | HRN | ** | ** | not in Tanenbaum |
| ** | ** | MLQ | ** | only in Silberschatz |
| FB | MLFQ | MLFQ | MQ | |

First Come First Served (FCFS, FIFO, FCFS, --)

If the OS ``doesn't'' schedule, it still needs to store the PTEs (process table entries) somewhere. If they are stored in a queue, you get FCFS. If they are stored in a stack (strange), you get LCFS. Perhaps you could get some sort of random policy as well.

- Only FCFS is considered.

- The simplest scheduling policy.

- Non-preemptive.
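
A minimal sketch of FCFS, assuming each job is a hypothetical (name, arrival, cpu_time) tuple and that no job ever blocks. The function name and the sample jobs are made up for illustration.

```python
def fcfs_finish_times(jobs):
    """Return {name: finish_time} when jobs run to completion in arrival order.

    jobs is a list of (name, arrival, cpu_time) tuples (an assumed format).
    """
    clock = 0
    finish = {}
    # sorted() is stable, so jobs arriving at the same time keep their list order.
    for name, arrival, cpu_time in sorted(jobs, key=lambda j: j[1]):
        # The CPU may sit idle until the job arrives; then the job runs to completion.
        clock = max(clock, arrival) + cpu_time
        finish[name] = clock
    return finish


print(fcfs_finish_times([("A", 0, 5), ("B", 1, 2), ("C", 2, 1)]))
# {'A': 5, 'B': 7, 'C': 8}
```

Note that the short job C waits behind the long job A, which is the main weakness of FCFS.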

Round Robin (RR, RR, RR, RR)

- An important preemptive policy.

- Essentially the preemptive version of FCFS.

- The key parameter is the quantum size q.

- When a process is put into the running state a timer is set to q.

- If the timer goes off and the process is still running, the OS

preempts the process.

- This process is moved to the ready state (the

preempt arc in the diagram), where it is placed at the

rear of the ready list (a queue).

- The process at the front of the ready list is removed from

the ready list and run (i.e., moves to state running).

- When a process is created, it is placed at the rear of the ready list.

- As q gets large, RR approaches FCFS

- As q gets small, RR approaches PS (Processor Sharing, described next)

- What value of q should we choose?

- Tradeoff

- Small q makes system more responsive.

- Large q makes system more efficient since there is less process switching.
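
The RR rules above can be sketched as a small simulator. This is only an illustration under stated assumptions: context switch time is zero, no job blocks, jobs are hypothetical (name, arrival, cpu_time) tuples, and a job created at the same instant that another is preempted enters the ready list first (the notes do not fix this tie-breaking rule).

```python
from collections import deque


def rr_finish_times(jobs, q):
    """Return {name: finish_time} under round robin with quantum q."""
    pending = deque(sorted(jobs, key=lambda j: j[1]))  # not yet created
    ready = deque()                                    # the ready list (a queue)
    finish = {}
    clock = 0

    def admit():
        # Newly created jobs go to the rear of the ready list.
        while pending and pending[0][1] <= clock:
            name, _, cpu_time = pending.popleft()
            ready.append((name, cpu_time))

    while pending or ready:
        if not ready:
            clock = max(clock, pending[0][1])  # idle until the next creation
        admit()
        name, remaining = ready.popleft()      # front of the ready list runs
        run = min(q, remaining)
        clock += run
        admit()                                # assumed tie rule: arrivals first
        if remaining > run:
            ready.append((name, remaining - run))  # preempted: rear of ready list
        else:
            finish[name] = clock
    return finish


jobs = [("A", 0, 3), ("B", 1, 2)]
print(rr_finish_times(jobs, q=1))    # {'B': 4, 'A': 5}
print(rr_finish_times(jobs, q=100))  # {'A': 3, 'B': 5}, the same as FCFS
```

With the large quantum no job is ever preempted, which is why RR approaches FCFS as q gets large.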

Homework: 26, 35, 38.

Homework: Give an argument favoring a large

quantum; give an argument favoring a small quantum.

| Process | CPU Time | Creation Time |
|---|---|---|
| P1 | 20 | 0 |
| P2 | 3 | 3 |
| P3 | 2 | 5 |

Homework:

(Remind me to discuss this last one in class next time):

Consider the set of processes in the table above. When does each process finish if RR scheduling is used with q=1, if q=2, if q=3, if q=100? First assume (unrealistically) that context switch time is zero. Then assume it is 0.1. Each process performs no I/O (i.e., no process ever blocks). All times are in milliseconds. The CPU time is the total time required for the process (excluding context switch time). The creation time is the time when the process is created. So P1 is created when the problem begins and P3 is created 5 milliseconds later.

Processor Sharing (PS, **, PS, PS)

Merge the ready and running states and permit all ready jobs to be run

at once. However, the processor slows down so that when n jobs are

running at once each progresses at a speed 1/n as fast as it would if

it were running alone.

- Clearly impossible as stated due to the overhead of process

switching.

- Of theoretical interest (easy to analyze).

- Approximated by RR when the quantum is small. Make

sure you understand this last point. For example,

consider the last homework assignment (with zero context switch time)

and consider q=1, q=.1, q=.01, etc.
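
A minimal sketch of ideal processor sharing, assuming hypothetical (name, arrival, cpu_time) tuples and no blocking. Between events (a job is created or a job finishes) each of the n active jobs progresses at rate 1/n, so the simulation only needs to jump from event to event.

```python
def ps_finish_times(jobs):
    """Return {name: finish_time} under ideal processor sharing."""
    pending = sorted(jobs, key=lambda j: j[1])
    active = {}   # name -> remaining CPU time
    finish = {}
    clock = 0.0
    i = 0         # index of the next job to be created
    while i < len(pending) or active:
        if not active:
            clock = max(clock, pending[i][1])  # idle until the next creation
        while i < len(pending) and pending[i][1] <= clock:
            active[pending[i][0]] = float(pending[i][2])
            i += 1
        n = len(active)
        # With n jobs sharing the processor, the shortest one needs n times
        # its remaining time of wall-clock time to finish.
        to_finish = min(active.values()) * n
        to_arrival = pending[i][1] - clock if i < len(pending) else float("inf")
        step = min(to_finish, to_arrival)
        for name in list(active):
            active[name] -= step / n
            if active[name] <= 1e-12:   # tolerance for floating-point error
                finish[name] = clock + step
                del active[name]
        clock += step
    return finish


print(ps_finish_times([("A", 0, 3), ("B", 1, 2)]))
```

For these two jobs both finish at time 5: A runs alone for one unit, then A and B each need 2 more units at half speed. RR with a very small quantum on the same jobs gives finish times close to these.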

Homework: 34.

Variants of Round Robin

- State dependent RR

- Same as RR but q is varied dynamically depending on the state

of the system.

- Favor processes holding important resources.

- For example, non-swappable memory.

- Perhaps this should be considered medium term scheduling

since you probably do not recalculate q each time.

- External priorities: RR but a user can pay more and get a bigger q. That is, one process can be given a higher priority than another. But this is not an absolute priority, i.e., the lower priority (i.e., less important) process does get to run, but not as much as the high priority process.

Priority Scheduling

Each job is assigned a priority (externally, perhaps by charging

more for higher priority) and the highest priority ready job is run.

- Similar to ``External priorities'' above

- If many processes have the highest priority, use RR among them.

- Can easily starve processes (see aging below for fix).

- Can have the priorities changed dynamically to favor processes

holding important resources (similar to state dependent RR).

- Many policies can be thought of as priority scheduling in

which we run the job with the highest priority (with different notions

of priority for different policies).

Priority aging

As a job is waiting, raise its priority so eventually it will have the

maximum priority.

- This prevents starvation (assuming all jobs terminate or the policy is preemptive).

- There may be many processes with the maximum priority.

- If so, can use FIFO among those with max priority (risks starvation if a job doesn't terminate) or can use RR.

- Can apply priority aging to many policies, in particular to priority

scheduling described above.
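
A small sketch of priority aging applied to preemptive priority scheduling. Everything specific here is an assumption made for illustration: time advances in unit ticks, jobs never terminate (the worst case for starvation), a waiting job gains aging_rate per tick up to max_priority, and ties are broken in favor of the job that ran least recently (i.e., RR among equals).

```python
def first_run_ticks(base, aging_rate, max_priority, ticks):
    """Return {name: first tick at which the job ran} over the given ticks.

    base maps job name -> initial priority (larger means more important).
    """
    priority = dict(base)
    last_run = {name: -1 for name in base}
    first_run = {}
    for t in range(ticks):
        # Highest priority wins; among equals, the least recently run job wins.
        chosen = max(priority, key=lambda n: (priority[n], -last_run[n]))
        first_run.setdefault(chosen, t)
        last_run[chosen] = t
        for name in priority:
            if name != chosen:
                # Every job that waited this tick has its priority raised.
                priority[name] = min(max_priority, priority[name] + aging_rate)
    return first_run


base = {"hi": 10, "lo": 1}
print(first_run_ticks(base, aging_rate=0, max_priority=10, ticks=50))  # {'hi': 0}
print(first_run_ticks(base, aging_rate=1, max_priority=10, ticks=50))  # {'hi': 0, 'lo': 9}
```

Without aging the low priority job never runs; with aging it reaches the maximum priority after nine ticks of waiting and then shares the processor.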

Selfish RR (SRR, **, SRR, **)

- Preemptive.

- Perhaps it should be called ``snobbish RR''.

- ``Accepted processes'' run RR.

- Accepted processes have their priority increase at rate b>=0.

- A new process starts at priority 0; its priority increases at rate a>=0.

- A new process becomes an accepted process when its priority reaches that of an accepted process (or when there are no accepted processes).

- Note that at any time all accepted processes have the same priority.

- If b>=a, get FCFS.

- If b=0, get RR.

- If a>b>0, it is interesting.
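
To see why the three cases differ, consider a new process that arrives when the accepted processes have priority p. Its priority after time t is a*t, while theirs is p + b*t, so it catches up when a*t = p + b*t. The sketch below computes that time. The function name is made up, and the calculation ignores the possibility that all accepted processes finish earlier (in which case the new process is accepted at that moment instead).

```python
import math


def time_to_acceptance(p_accepted, a, b):
    """Time for a new process (priority 0, rate a) to reach priority
    p_accepted + b*t of the accepted processes."""
    if p_accepted <= 0:
        return 0.0        # already level: accepted at once (always so when b=0)
    if a <= b:
        return math.inf   # never catches up: must wait until none are accepted
    return p_accepted / (a - b)


print(time_to_acceptance(6, a=3, b=1))  # 3.0, the interesting case a>b>0
print(time_to_acceptance(6, a=1, b=2))  # inf, behaves like FCFS
print(time_to_acceptance(0, a=1, b=0))  # 0.0, behaves like RR
```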

Shortest Job First (SPN, SJF, SJF, SJF)

Sort jobs by total execution time needed and run the shortest first.

- Nonpreemptive

- First consider a static situation where all jobs are available in

the beginning, we know how long each one takes to run, and we

implement ``run-to-completion'' (i.e., we don't even switch to another

process on I/O). In this situation, SJF has the shortest average

waiting time

- Assume you have a schedule with a long job right before a

short job.

- Consider swapping the two jobs.

- This decreases the wait for the short job by the length of the long job and increases the wait of the long job by the length of the short job.

- Since the long job is longer than the short job, this decreases the total waiting time for these two.

- Hence decreases the total waiting for all jobs and hence decreases

the average waiting time as well.

- Hence, whenever a long job is right before a short job, we can

swap them and decrease the average waiting time.

- Thus the lowest average waiting time occurs when there are no long jobs right before short jobs.

- This is SJF.

- In the more realistic case where the scheduler switches to a new

process when the currently running process blocks (say for I/O), we

should call the policy shortest next-CPU-burst first.

- The difficulty is predicting the future (i.e., knowing in advance

the time required for the job or next-CPU-burst).

- This is an example of priority scheduling.
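
The swapping argument for the static case can be checked by brute force on a small example. The run times below are made up; the sketch assumes all jobs are available at time 0 and run to completion.

```python
from itertools import permutations


def average_wait(run_times):
    """Average waiting time when jobs run to completion in the given order."""
    elapsed = 0      # time at which the next job starts, i.e., its wait
    total_wait = 0
    for t in run_times:
        total_wait += elapsed
        elapsed += t
    return total_wait / len(run_times)


jobs = [8, 1, 4, 2]
print(average_wait(jobs))          # 7.5  (the order as given)
print(average_wait(sorted(jobs)))  # 2.75 (SJF order)

# Try every order: none beats the sorted (SJF) order.
best = min(permutations(jobs), key=average_wait)
print(best)                        # (1, 2, 4, 8)
```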

Homework: 39, 40.

Preemptive Shortest Job First (PSPN, SRT, PSJF/SRTF, --)

Preemptive version of above

- Permit a process that enters the ready list to preempt the running

process if the time for the new process (or for its next burst) is

less than the remaining time for the running process (or for

its current burst).

- It will never happen that a process in the ready list

will require less time than the remaining time for the currently

running process. Why?

Ans: When the process joined the ready list it would have started

running if the current process had more time remaining. Since

that didn't happen the current job had less time remaining and now

it has even less.

- Can starve processes that require a long burst.

- This is fixed by the standard technique.

- What is that technique?

Ans: Priority aging.

- Another example of priority scheduling.
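
A minimal sketch of the preemptive policy, assuming hypothetical (name, arrival, cpu_time) tuples, exact knowledge of each job's run time, no blocking, and zero context switch time. The scheduler only needs to reconsider its choice when a new job enters the ready list, which matches the observation above that a job already in the ready list never needs less time than the running one.

```python
import heapq


def srtf_finish_times(jobs):
    """Return {name: finish_time} under preemptive shortest job first."""
    pending = sorted(jobs, key=lambda j: j[1])
    ready = []   # min-heap of (remaining_time, name)
    finish = {}
    clock = 0
    i = 0        # index of the next job to be created
    while i < len(pending) or ready:
        if not ready:
            clock = max(clock, pending[i][1])  # idle until the next creation
        while i < len(pending) and pending[i][1] <= clock:
            name, _, cpu_time = pending[i]
            heapq.heappush(ready, (cpu_time, name))
            i += 1
        remaining, name = heapq.heappop(ready)  # least remaining time runs
        next_arrival = pending[i][1] if i < len(pending) else float("inf")
        # Run until the job finishes or a new job arrives, whichever is first.
        run = min(remaining, next_arrival - clock)
        clock += run
        if run < remaining:
            # Back on the heap; the newcomer preempts only if it is shorter.
            heapq.heappush(ready, (remaining - run, name))
        else:
            finish[name] = clock
    return finish


jobs = [("A", 0, 7), ("B", 2, 4), ("C", 4, 1), ("D", 5, 4)]
print(srtf_finish_times(jobs))  # {'C': 5, 'B': 7, 'D': 11, 'A': 16}
```

The long job A is preempted at time 2 and does not run again until everything shorter has finished, which illustrates the starvation risk noted above.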