================ Start Lecture #9

================

Notes on lab (scheduling)

- If several processes are waiting on I/O, you may assume

noninterference. For example, assume that on cycle 100 process A

flips a coin and decides its wait is 6 units (i.e., during cycles

101-106 A will be blocked. Assume B begins running at cycle 101 for a

burst of 1 cycle. So during 101

process B flips a coin and decides its wait is 3 units. You do NOT

have to alter process A. That is, Process A will become ready after

cycle 106 (100+6) so enters the ready list cycle 107 and process B

becomes ready after cycle 104 (101+3) and enters ready list cycle

105.

- For processor sharing (PS), which is part of the extra credit:

PS (processor sharing). Every cycle you see how many jobs are

in the ready Q. Say there are 7. Then during this cycle (an exception

will be described below) each process gets 1/7 of a cycle.

EXCEPTION: Assume there are exactly 2 jobs in RQ, one needs 1/3 cycle

and one needs 1/2 cycle. The process needing only 1/3 gets only 1/3,

i.e. it is finished after 2/3 cycle. So the other process gets 1/3

cycle during the first 2/3 cycle and then starts to get all the CPU.

Hence it finishes after 2/3 + 1/6 = 5/6 cycle. The last 1/6 cycle is

not used by any process.

Chapter 3: Memory Management

Also called storage management or

space management.

Memory management must deal with the storage

hierarchy present in modern machines.

- Registers, cache, central memory, disk, tape (backup)

- Move data from level to level of the hierarchy.

- How should we decide when to move data up to a higher level?

- Fetch on demand (e.g. demand paging, which is dominant now).

- Prefetch

- Read-ahead for file I/O.

- Large cache lines and pages.

- Extreme example. Entire job present whenever running.

We will see in the next few lectures that there are three independent

decision:

- Segmentation (or no segmentation)

- Paging (or no paging)

- Fetch on demand (or no fetching on demand)

Memory management implements address translation.

- Convert virtual addresses to physical addresses

- Also called logical to real address translation.

- A virtual address is the address expressed in

the program.

- A physical address is the address understood

by the computer hardware.

- The translation from virtual to physical addresses is performed by

the Memory Management Unit or (MMU).

- Another example of address translation is the conversion of

relative addresses to absolute addresses by the linker.

- The translation might be trivial (e.g., the identity) but not in a modern

general purpose OS.

- The translation might be difficult (i.e., slow).

- Often includes addition/shifts/mask--not too bad.

- Often includes memory references.

- VERY serious.

- Solution is to cache translations in a Translation

Lookaside Buffer (TLB). Sometimes called a

translation buffer (TB).

Homework: 7.

When is address translation performed?

- At compile time

- Compiler generates physical addresses.

- Requires knowledge of where the compilation unit will be

loaded.

- No linker.

- Loader is trivial.

- Primitive.

- Rarely used (MSDOS .COM files).

- At link-edit time (the ``linker lab'')

- Compiler

- Generates relocatable addresses for each compilation unit.

- References external addresses.

- Linkage editor

- Converts the relocatable addr to absolute.

- Resolves external references.

- Misnamed ld by unix.

- Also converts virtual to physical addresses by knowing where the

linked program will be loaded. Linker lab ``does'' this, but

it is trivial since we assume the linked program will be

loaded at 0.

- Loader is still trivial.

- Hardware requirements are small.

- A program can be loaded only where specified and

cannot move once loaded.

- Not used much any more.

- At load time

- Similar to at link-edit time, but do not fix

the starting address.

- Program can be loaded anywhere.

- Program can move but cannot be split.

- Need modest hardware: base/limit registers.

- Loader sets the base/limit registers.

- At execution time

- Addresses translated dynamically during execution.

- Hardware needed to perform the virtual to physical address

translation quickly.

- Currently dominates.

- Much more information later.

Extensions

- Dynamic Loading

- When executing a call, check if module is loaded.

- If not loaded, call linking loader to load it and update

tables.

- Slows down calls (indirection) unless you rewrite code

dynamically.

- Not used much.

- Dynamic Linking

- The traditional linking described above is today often called

static linking.

- With dynamic linking, frequently used routines are not linked

into the program. Instead, just a stub is linked.

- When the routine is called, the stub checks to see if the

real routine is loaded (it may have been loaded by

another program).

- If not loaded, load it.

- If already loaded, share it. This needs some OS

help so that different jobs sharing the library don't

overwrite each other's private memory.

- Advantages of dynamic linking.

- Saves space: Routine only in memory once even when used

many times.

- Bug fix to dynamically linked library fixes all applications

that use that library, without having to

relink the application.

- Disadvantages of dynamic linking.

- New bugs in dynamically linked library infect all

applications.

- Applications ``change'' even when they haven't changed.

Note: I will place ** before each memory management

scheme.

3.1: Memory management without swapping or paging

Entire process remains in memory from start to finish and does not move.

The sum of the memory requirements of all jobs in the system cannot

exceed the size of physical memory.

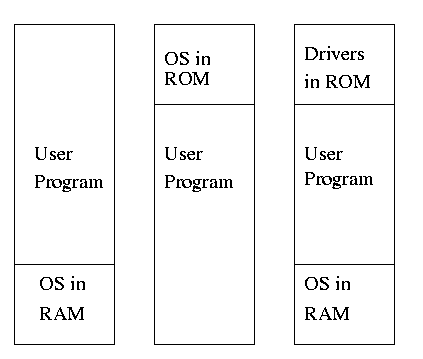

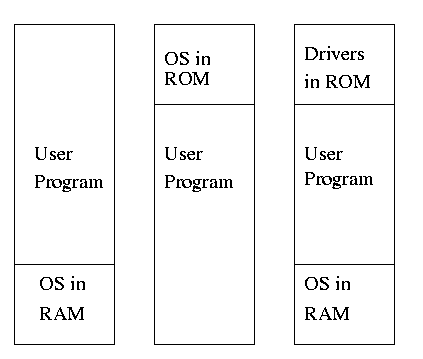

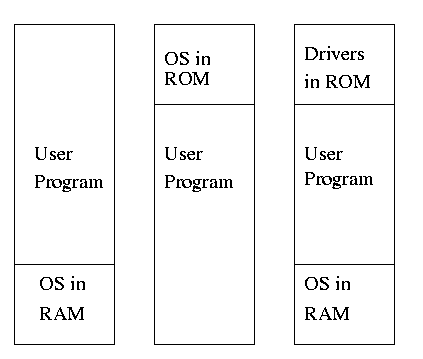

** 3.1.1: Monoprogramming without swapping or paging (Single User)

The ``good old days'' when everything was easy.

- No address translation done by the OS (i.e., address translation is

not performed dynamically during execution).

- Either reload the OS for each job (or don't have an OS, which is almost

the same), or protect the OS from the job.

- One way to protect (part of) the OS is to have it in ROM.

- Of course, must have the OS (read-write) data in ram.

- Can have a separate OS address space only accessible in

supervisor mode.

- Might just put some drivers in ROM (BIOS).

- The user employs overlays if the memory needed

by a job exceeds the size of physical memory.

- Programmer breaks program into pieces.

- A ``root'' piece is always memory resident.

- The root contains calls to load and unload various pieces.

- Programmer's responsibility to ensure that a piece is already

loaded when it is called.

- No longer used, but we couldn't have gotten to the moon in the

60s without it (I think).

- Overlays have been replaced by dynamic address translation and

other features (e.g., demand paging) that have the system support

logical address sizes greater than physical address sizes.

- Fred Brooks (leader of IBM's OS/360 project and author of ``The

mythical man month'') remarked that the OS/360 linkage editor was

terrific, especially in its support for overlays, but by the time

it came out, overlays were no longer used.

3.1.2: Multiprogramming and Memory Usage

Goal is to improve CPU utilization, by overlapping CPU and I/O

- Consider a job that is unable to compute (i.e., it is waiting for

I/O) a fraction p of the time.

- Then, with monoprogramming, the CPU utilization is 1-p.

- Note that p is often > .5 so CPU utilization is poor.

- But, if the probability that a

job is waiting for I/O is p and n jobs are in memory, then the

probability that all n are waiting for I/O is approximately p^n.

- So, with a multiprogramming level (MPL) of n,

the CPU utilization is approximately 1-p^n.

- If p=.5 and n=4, then 1-p^n = 15/16, which is much better than

1/2, which would occur for monoprogramming (n=1).

- This is a crude model, but it is correct that increasing MPL does

increase CPU utilization up to a point.

- The limitation is memory, which is why we discuss it here

instead of process management. That is, we must have many jobs

loaded at once, which means we must have enough memory for them

There are other issues as well and we will discuss them.

- Some of the CPU utilization is time spent in the OS executing

context switches so the gains are not a great as the crude model predicts.

Homework: 1, 3.

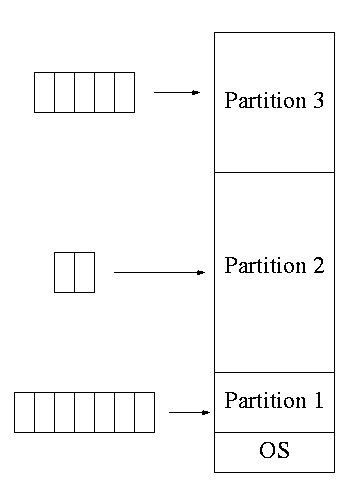

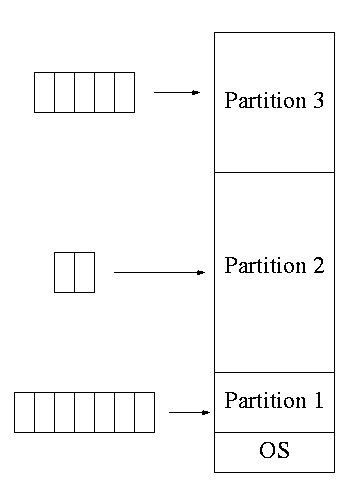

3.1.3: Multiprogramming with fixed partitions

- This was used by IBM for system 360 OS/MFT (multiprogramming with a

fixed number of tasks).

- Can have a single input queue instead of one for each partition.

- So that if there are no big jobs can use big partition for

little jobs.

- But I don't think IBM did this.

- Can think of the input queue(s) as the ready list(s) with a

scheduling policy of FCFS in each partition.

- The partition boundaries are not movable (must reboot to

move a job).

- MFT can have large internal fragmentation,

i.e., wasted space inside a region

- Each process has a single ``segment'' (we will discuss segments later)

- No sharing between process.

- No dynamic address translation.

- At load time must ``establish addressability''.

- i.e. must set a base register to the location at which the

process was loaded (the bottom of the partition).

- The base register is part of the programmer visible register set.

- This is an example of address translation during load time.

- Also called relocation.

- Storage keys are adequate for protection (IBM method).

- Alternative protection method is base/limit registers.

- An advantage of base/limit is that it is easier to move a job.

- But MFT didn't move jobs so this disadvantage of storage keys is moot.

- Tanenbaum says jobs were ``run to completion''. This must be

wrong as that would mean monoprogramming.

- He probably means that jobs not swapped out and each queue is FCFS

without preemption.