G22.2250: Operating Systems

2000-2001 Fall

Mon 5-7

Ciww 109

Allan Gottlieb

gottlieb@nyu.edu

http://allan.ultra.nyu.edu/~gottlieb

715 Broadway, Room 1001

212-998-3344

609-951-2707

email is best

Administrivia

Web Pages

There is a web page for the course. You can find it from my home page.

- Can find these notes there.

Let me know if you can't find it.

- They will be updated as bugs are found.

- Will also have each lecture available as a separate page. I will

produce the page after the lecture is given. These individual pages

might not get updated.

Textbook

Text is Tanenbaum, "Modern Operating Systems".

- Available in bookstore.

- We will do part 1, starting with chapter 1.

A Grade of ``Incomplete''

It is university policy that a student's request for an incomplete

be granted only in exceptional circumstances and only if applied for

in advance. Of course the application must be before the final exam.

Computer Accounts and Mailman mailing list

- You are entitled to a computer account; get it.

- Sign up for a Mailman mailing list for the course.

http://www.cs.nyu.edu/mailman/listinfo/g22_2250_001_fl00

- If you want to send mail to me, use gottlieb@nyu.edu not

the mailing list.

- You may do assignments on any system you wish, but ...

- You are responsible for the machine. I extend deadlines if

the nyu machines are down, not if yours are.

- Be sure to upload your assignments to the

nyu systems.

- If somehow your assignment is misplaced by me or a grader,

we need to have a copy ON AN NYU SYSTEM

that can be used to verify the date the lab was completed.

- When you complete a lab (and have it on an nyu system), do

not edit those files. Indeed, put the lab in a separate

directory and keep out of the directory. You do not want to

alter the dates.

Homework and Labs

I make a distinction between homework and labs.

Labs are

- Required

- Due several lectures later (date given on assignment)

- Graded and form part of your final grade

- Penalized for lateness

Homeworks are

- Optional

- Due at the beginning of the next lecture

- Not accepted late

- Mostly from the book

- Collected and returned

- Can help, but not hurt, your grade

Upper left board for assignments and announcements.

Interlude on Linkers

Originally called linkage editors by IBM.

This is an example of a utility program included with an

operating system distribution. Like a compiler, it is not part of the

operating system per se, i.e. it does not run in supervisor mode.

Unlike a compiler it is OS dependent (what object/load file format is

used) and is not (normally) language dependent.

What does a Linker Do?

Link of course.

When the assembler has finished it produces an object

module that is almost runnable. There are two primary

problems that must be solved for the object module to be runnable.

Both are involved with linking (that word, again) together multiple

object modules.

- Relocating relative addresses.

- Each module (mistakenly) believes it will be loaded at

location zero (or some other fixed location). We will

use zero.

- So when there is an internal jump in the program, say jump to

location 100, this means jump to location 100 of the current

module.

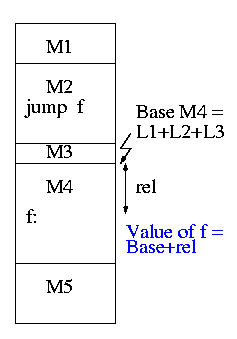

- To convert this relative address to an absolute

address, the linker adds the base address of the module

to the relative address. The base address is the address at which

this module will be loaded.

- Example: Module A is to be loaded starting at location 2300 and

contains the instruction

jump 120

The linker changes this instruction to

jump 2420

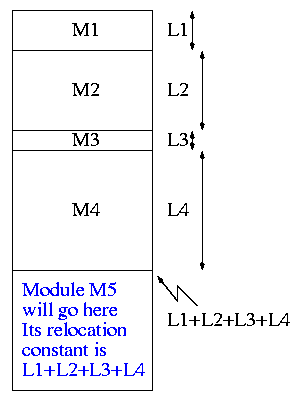

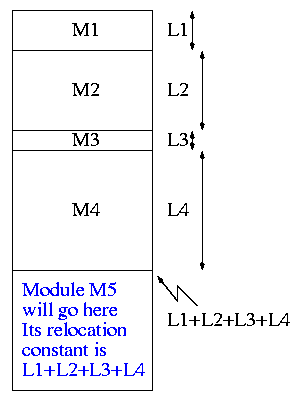

- How does the linker know that Module A is to be loaded starting at

location 2300?

- It processes the modules one at a time. The first module is

to be loaded at location zero. So the relocating is trivial

(adding zero). We say the relocation constant is zero.

- When finished with the first module (say M1), the linker knows

the length of M1 (say that length is L1).

- Hence the next module is to be loaded starting at L1, i.e.,

the relocation constant is L1.

- In general the linker keeps track of the sum of the lengths of

all the modules it has already processed and this is the location

at which the next module is to be loaded.

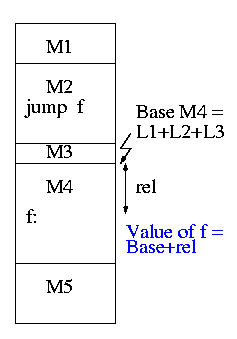

- Resolving external references.

- If a C (or Java, or Pascal) program contains a function call

f(x)

to a function f() that is compiled separately, the resulting

object module will contain some kind of jump to the beginning of

f.

- But this is impossible!

- When the C program is compiled, the compiler (and assembler)

do not know the location of f() so there is no way it

can supply the starting address.

- Instead a dummy address is supplied and a notation made that

this address needs to be filled in with the location of

f(). This is called a use of f.

- The object module containing the definition of f() indicates

that f is being defined and gives its relative address (which the

linker will convert to an absolute address). This is called a

definition of f.

The output of a linker is called a load module

because it is now ready to be loaded and run.
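
The relocation rule above (add the module's base address, where the base is the sum of the lengths of the modules already processed) can be sketched in a few lines of Python. This is only an illustration: the function names and the module lengths 5, 6, 2, 3 are assumptions chosen for the example.

```python
def relocation_constants(lengths):
    """Return the base address of each module, given module lengths in load order."""
    bases = []
    next_free = 0                # the first module is loaded at location zero
    for length in lengths:
        bases.append(next_free)
        next_free += length      # running sum of the lengths processed so far
    return bases


def relocate(relative_address, base):
    """Convert a module-relative address to an absolute address."""
    return base + relative_address


if __name__ == "__main__":
    print(relocation_constants([5, 6, 2, 3]))
    print(relocate(120, 2300))   # the "jump 120" example for a module based at 2300
```

External references are not handled here; they need the symbol table built from the definitions.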

To see how a linker works let's consider the following example,

which is the first dataset from lab #1. The description in lab1 is

more detailed.

The target machine is word addressable and has a memory of 1000 words,

each consisting of 4 decimal digits. The first (leftmost) digit is

the opcode and the remaining three digits form an address.

Each object module contains three parts, a definition list, a use

list, and the program text itself. Each definition is a pair (sym,

loc). Each use is a pair (sym, loc), where loc is the location of the

first use of sym. The address field of that word points to the next

use, or is 999 to end the chain.

In the sample below each text entry carries a fifth digit, written as

its rightmost digit. If that digit is 3, the address in the word is

relocatable. If it is 4, the word is part of a use chain. If it is 1

or 2, the word is left unchanged.

Sample input

1 xy 2

1 z 4

5 10043 56781 29994 80023 70024

0

1 z 3

6 80013 19994 10014 30024 10023 10102

0

1 z 1

2 50013 49994

1 z 2

1 xy 2

3 80002 19994 20014

I will illustrate a two-pass approach: The first pass simply

produces the symbol table giving the values for xy and z (2 and 15

respectively). The second pass does the real work (using the values

in the symbol table).

It is faster (less I/O) to do a one pass approach, but is harder

since you need ``fix-up code'' whenever a use occurs in a module that

precedes the module with the definition.

xy=2

z=15

+0

0: 10043 1004+0=1004

1: 56781 5678

2: xy: 29994 ->z 2015

3: 80023 8002+0=8002

4: ->z 70024 7015

+5

0 80013 8001+5=8006

1 19994 ->z 1015

2 10014 ->z 1015

3 ->z 30024 3015

4 10023 1002+5=1007

5 10102 1010

+11

0 50013 5001+11=5012

1 ->z 49994 4015

+13

0 80002 8000

1 19994 ->xy 1002

2 z:->xy 20014 2002
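
The two-pass approach traced above can be sketched as follows. This is a rough illustration, not the lab solution. It assumes the input layout seen in the sample (a count followed by that many items for each of the definition list, use list, and text). It also assumes the meaning of the trailing digit as read off the worked table: 3 is relocatable, 4 marks a word on a use chain, 1 and 2 are left alone. All function names are hypothetical, and there is no error checking.

```python
SAMPLE = """
1 xy 2
1 z 4
5 10043 56781 29994 80023 70024
0
1 z 3
6 80013 19994 10014 30024 10023 10102
0
1 z 1
2 50013 49994
1 z 2
1 xy 2
3 80002 19994 20014
"""


def parse(source):
    """Split the input into modules of (definitions, uses, text entries)."""
    tokens = source.split()
    pos = 0

    def pairs():
        # Read a count, then that many (symbol, location) pairs.
        nonlocal pos
        count = int(tokens[pos])
        pos += 1
        found = []
        for _ in range(count):
            found.append((tokens[pos], int(tokens[pos + 1])))
            pos += 2
        return found

    modules = []
    while pos < len(tokens):
        defs = pairs()
        uses = pairs()
        count = int(tokens[pos])
        pos += 1
        text = [int(t) for t in tokens[pos:pos + count]]
        pos += count
        modules.append((defs, uses, text))
    return modules


def link(source):
    modules = parse(source)

    # Pass 1: give each module its relocation constant and build the symbol table.
    symbols, bases, base = {}, [], 0
    for defs, _, text in modules:
        bases.append(base)
        for sym, loc in defs:
            symbols[sym] = base + loc
        base += len(text)

    # Pass 2: relocate addresses and resolve external references.
    memory = []
    for (_, uses, text), base in zip(modules, bases):
        words = []
        for entry in text:
            word, kind = divmod(entry, 10)   # kind is the trailing digit
            if kind == 3:                    # relocatable (assumed meaning)
                word += base
            words.append(word)
        for sym, loc in uses:
            while loc != 999:                # follow the use chain to its end
                opcode, next_loc = divmod(words[loc], 1000)
                words[loc] = opcode * 1000 + symbols[sym]
                loc = next_loc
        memory.extend(words)
    return symbols, memory


if __name__ == "__main__":
    table, image = link(SAMPLE)
    print(table)
    for address, word in enumerate(image):
        print(address, word)
```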

The linker on unix is mistakenly called ld (for loader), which is

unfortunate since it links but does not load.

Lab #1:

Implement a linker. The specific assignment is detailed on the sheet

handed out in class and is due in two weeks, 2 October 2000. The

content of the handout is available on the web as well (see the class

home page).

End of Interlude on Linkers

Chapter 1. Introduction

Homework: Read Chapter 1 (Introduction)

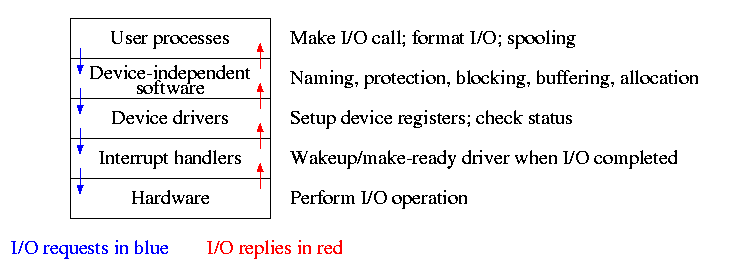

Levels of abstraction (virtual machines)

- Software (and hardware, but that is not this course) is often

implemented in layers.

- The higher layers use the facilities provided by lower layers.

- Alternatively said, the upper layers are written using a more

powerful and more abstract virtual machine than the lower layers.

- Alternatively said, each layer is written as though it runs on the

virtual machine supplied by the lower layer and in turn provides a more

abstract (pleasant) virtual machine for the higher layer to run on.

- Using a broad brush, the layers are.

- Scripts (e.g. shell scripts)

- Applications and utilities

- Libraries

- The OS proper (the kernel)

- Hardware

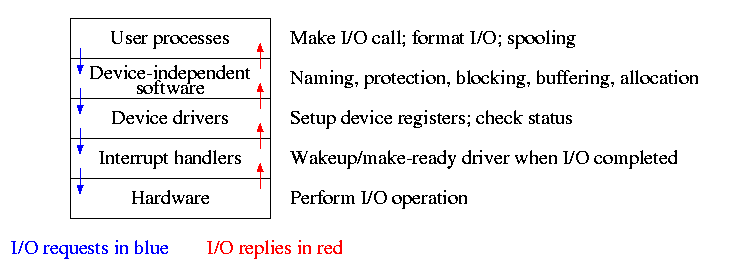

- The kernel itself is normally layered, e.g.

- ...

- Filesystems

- Machine independent I/O

- Machine dependent device drivers

- The machine independent I/O part is written assuming ``virtual

(i.e. idealized) hardware''. For example, the machine independent I/O

portion simply reads a block from a ``disk''. But

in reality one must deal with the specific disk controller.

- Often the machine independent part is more than one layer.

- The term OS is not well defined. Is it just the kernel? How

about the libraries? The utilities? All these are certainly

system software, but it is not clear how much is part of the OS.

1.1: What is an operating system?

The kernel itself raises the level of abstraction and hides details.

For example a user (of the kernel) can write to a file (a concept not

present in hardware) and ignore whether the file resides on a floppy,

a CD-ROM, or a hard magnetic disk.

The kernel is a resource manager (so users don't conflict).

How is an OS fundamentally different from a compiler (say)?

Answer: Concurrency! Per Brinch Hansen in Operating Systems

Principles (Prentice Hall, 1973) writes:

The main difficulty of multiprogramming is that concurrent activities

can interact in a time-dependent manner, which makes it practically

impossible to locate programming errors by systematic testing.

Perhaps, more than anything else, this explains the difficulty of

making operating systems reliable.

Homework: 1. (Unless otherwise stated, problem

numbers are from the end of the chapter in Tanenbaum.)

1.2 History of Operating Systems

- Single user (no OS).

- Batch, uniprogrammed, run to completion.

- The OS now must be protected from the user program so that it is

capable of starting (and assisting) the next program in the batch.

- Multiprogrammed

- The purpose was to overlap CPU and I/O

- Multiple batches

- IBM OS/MFT (Multiprogramming with a Fixed number of Tasks)

- The (real) memory is partitioned and a batch is

assigned to a fixed partition.

- The memory assigned to a

partition does not change

- IBM OS/MVT (Multiprogramming with a Variable number of Tasks)

(then other names)

- Each job gets just the amount of memory it needs. That

is, the partitioning of memory changes as jobs enter and leave

- MVT is a more ``efficient'' user of resources but is

more difficult.

- When we study memory management, we will see that with

varying size partitions questions like compaction and

``holes'' arise.

- Time sharing

- This is multiprogramming with rapid switching between jobs

(processes). Deciding when to switch and which process to

switch to is called scheduling.

- We will study scheduling when we do processor management

- Multiple computers

- Multiprocessors: These have existed almost from the beginning of the

computer age but are no longer exotic.

- Network OS: Make use of the multiple PCs/workstations on a LAN.

- Distributed OS: A ``seamless'' version of above.

- Not part of this course (but often in G22.2251).

- Real time systems

- Often in embedded systems

- Soft vs hard real time. In the latter missing a deadline is a

fatal error--sometimes literally.

- Very important commercially, but not covered much in this course.

Homework: 2, 3, 5.

1.3: Operating System Concepts

This will be very brief. Much of the rest of the course will consist in

``filling in the details''.

1.3.1: Processes

A program in execution. If you run the same program twice, you have

created two processes. For example if you have two editors running in

two windows, each instance of the editor is a separate process.

Often one distinguishes the state or context (memory image, open

files) from the thread of control. Then if one has many

threads running in the same task, the result is a

``multithreaded process''.

The OS keeps information about all processes in the process

table. Indeed, the OS views the process as the entry. This is an

example of an active entity being viewed as a data structure

(cf. discrete event simulations), an observation made by Finkel in

his (out of print) OS textbook.

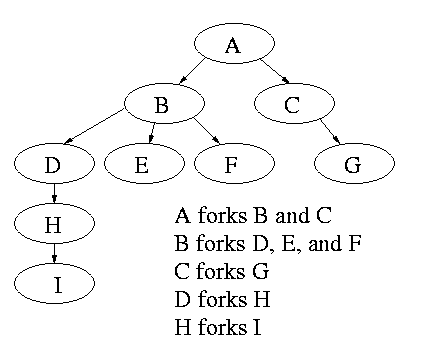

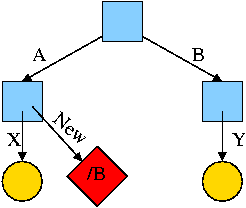

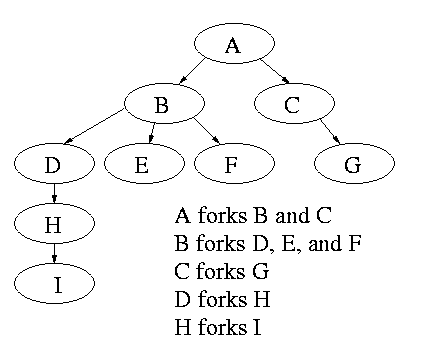

The set of processes forms a tree via the fork system call. The

forker is the parent of the forkee. If the parent stops running until

the child finishes, the ``tree'' is quite simple, just a line. But

the parent (in many OSes) is free to continue executing and in

particular is free to fork again producing another child.

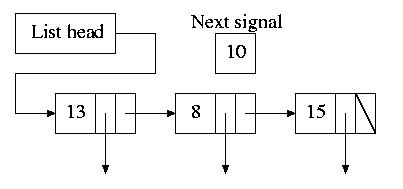

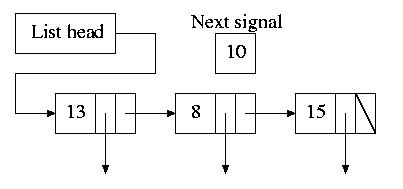

A process can send a signal to another process to cause the latter to

execute a predefined function (the signal handler).

This can be tricky to program since the programmer does not know when

in his ``main'' program the signal handler will be invoked.
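
As a small illustration of one process signalling another, here is a sketch using Python's `os` and `signal` modules on a Unix-like system. The choice of SIGUSR1 and the names `handler` and `demo` are assumptions made for the example. The parent installs a handler, a forked child sends the signal, and the parent cannot know in advance at which point of its own code the handler will run.

```python
import os
import signal
import time

received = []          # filled in by the handler, whenever it happens to run


def handler(signum, frame):
    """Signal handler: invoked asynchronously with respect to the main program."""
    received.append(signum)


def demo():
    received.clear()
    previous = signal.signal(signal.SIGUSR1, handler)   # install the handler
    try:
        pid = os.fork()
        if pid == 0:
            # Child: signal the parent, then exit without running parent code.
            os.kill(os.getppid(), signal.SIGUSR1)
            os._exit(0)
        os.waitpid(pid, 0)
        # Give the interpreter a moment to run the handler if it has not yet.
        deadline = time.time() + 2.0
        while not received and time.time() < deadline:
            time.sleep(0.01)
    finally:
        signal.signal(signal.SIGUSR1, previous)          # restore old handler
    return list(received)


if __name__ == "__main__":
    print(demo())
```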

1.3.2: Files

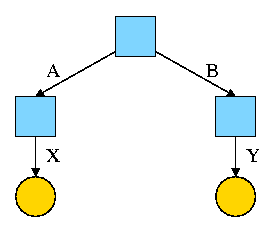

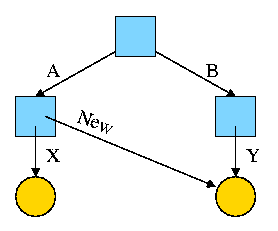

Modern systems have a hierarchy of files. A file system tree.

- In MSDOS the hierarchy is a forest, not a tree. There is no file

or directory that is an ancestor of both a:\ and c:\.

- In unix the existence of symbolic links weakens the tree to a DAG

Files and directories normally have permissions

- Normally have at least rwx.

- User, group, world

- More general is access control lists

- Often files have ``attributes'' as well. For example the linux ext2

filesystem supports a ``d'' attribute that is a hint to

the dump program not to backup this file.

- When a file is opened, permissions are checked and, if the open is

permitted, a file descriptor is

returned that is used for subsequent operations
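
The open-then-use-the-descriptor pattern can be seen directly with the thin wrappers in Python's `os` module. This is a minimal sketch for a Unix-like system; the file name `demo.txt`, the permission bits, and the function name `roundtrip` are arbitrary choices for the example.

```python
import os
import tempfile


def roundtrip(data):
    """Open a file, then use the returned descriptor for every later operation."""
    with tempfile.TemporaryDirectory() as directory:
        path = os.path.join(directory, "demo.txt")
        # Permissions are checked here; on success we get a small integer.
        fd = os.open(path, os.O_RDWR | os.O_CREAT, 0o600)
        try:
            os.write(fd, data)                 # subsequent operations name the
            os.lseek(fd, 0, os.SEEK_SET)       # file only by its descriptor
            return fd, os.read(fd, len(data))
        finally:
            os.close(fd)


if __name__ == "__main__":
    print(roundtrip(b"hello"))
```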

Devices (mouse, tape drive, cdrom) are often viewed as ``special

files''. In a unix system these are normally found in the /dev

directory. Often utilities that are normally applied to (ordinary)

files can be applied as well to some special files. For example, when

you are accessing a unix system and do not have anything serious going

on (e.g., right after you log in), type the following command

cat /dev/mouse

and then move the mouse. You kill the cat by typing ctrl-C. I tried

this on my linux box and no damage occurred. Your mileage may vary.

Many systems have standard files that are automatically made available

to a process upon startup. Their (initial) file descriptors are fixed:

- standard input: fd=0

- standard output: fd=1

- standard error: fd=2

A convenience offered by some command interpreters is a pipe or

pipeline. The pipeline

ls | wc

will give the number of files in the directory (plus other info).
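
What the command interpreter does for `ls | wc` can be approximated with Python's `subprocess` module: the standard output of the first process is connected to the standard input of the second. This sketch assumes a Unix-like system with `ls` and `wc` installed; the function names and the three file names are made up for the example.

```python
import os
import subprocess
import tempfile


def ls_pipe_wc(directory):
    """Run the equivalent of `ls directory | wc` and return (lines, words, chars)."""
    ls = subprocess.Popen(["ls", directory], stdout=subprocess.PIPE)
    wc = subprocess.Popen(["wc"], stdin=ls.stdout, stdout=subprocess.PIPE)
    ls.stdout.close()            # the parent no longer needs the read end
    output, _ = wc.communicate()
    ls.wait()
    lines, words, chars = (int(field) for field in output.decode().split())
    return lines, words, chars


def demo():
    with tempfile.TemporaryDirectory() as directory:
        for name in ("a", "b", "c"):
            open(os.path.join(directory, name), "w").close()
        return ls_pipe_wc(directory)


if __name__ == "__main__":
    print(demo())
```

The first number is the line count, which is the number of files, since `ls` writes one name per line when its output is a pipe.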

================ Start Lecture #2 ================

Note:

Lab 1 is assigned and due 2 October.

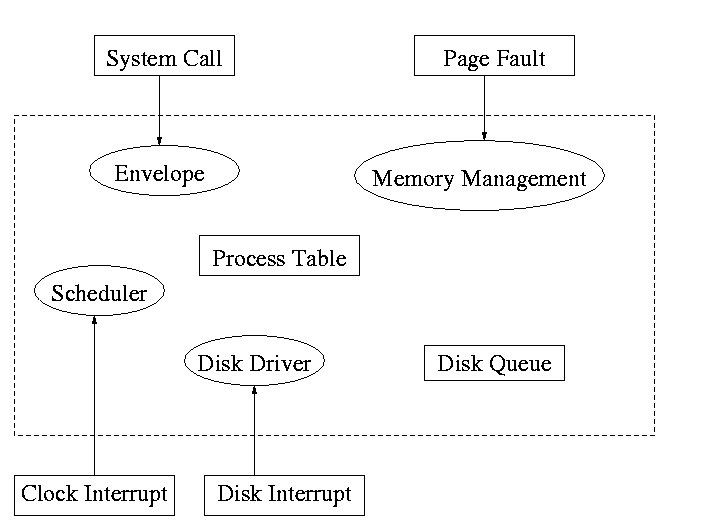

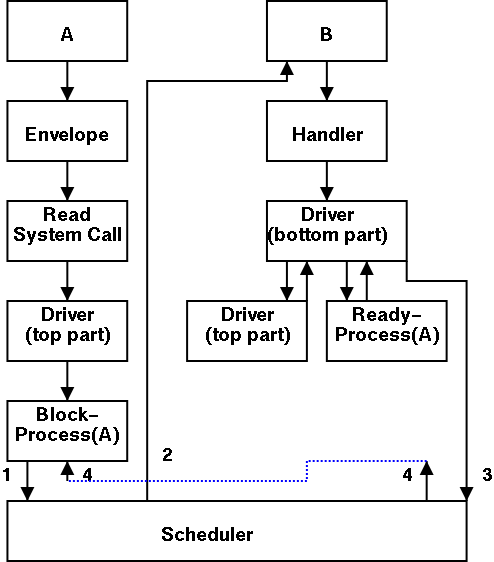

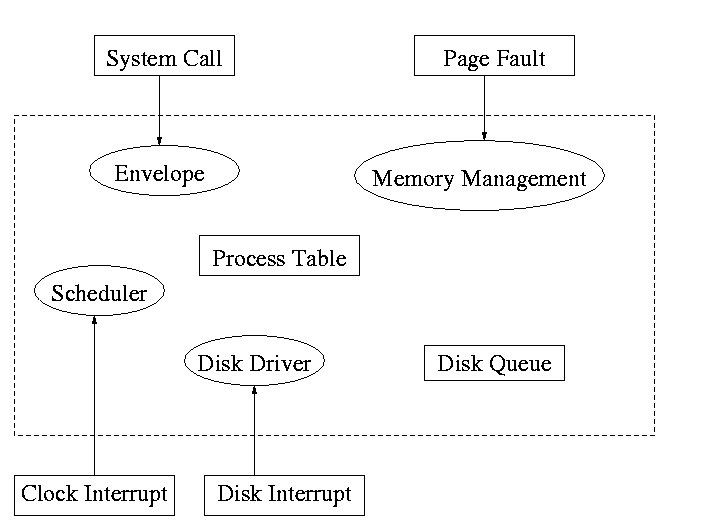

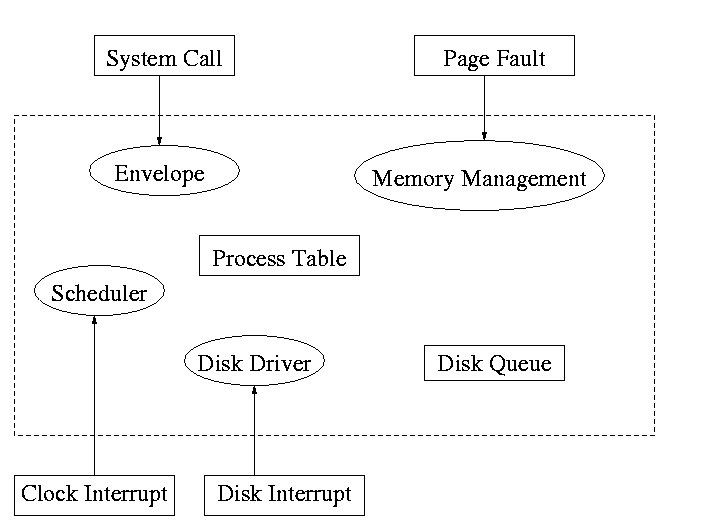

1.3.3: System Calls

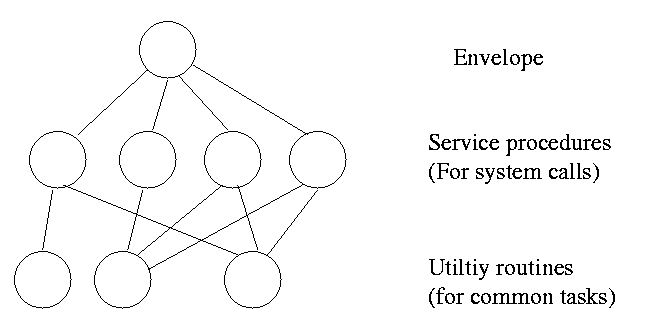

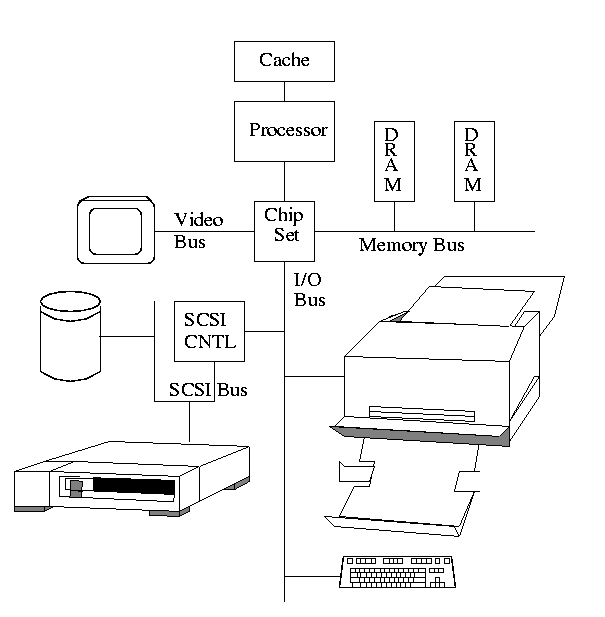

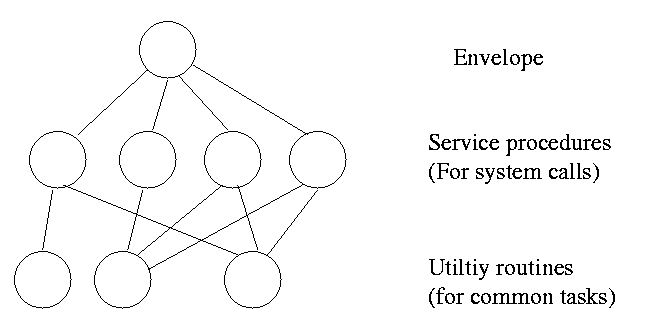

System calls are the way a user (i.e. a program)

directly interfaces with the OS. Some textbooks use the term

envelope for the component of the OS responsible for fielding

system calls and dispatching them. Here is a picture showing some of

the OS components and the external events for which they are the

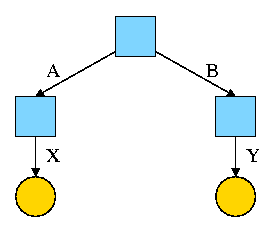

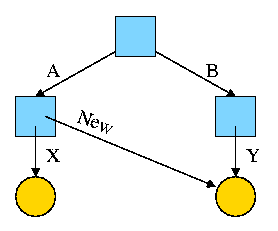

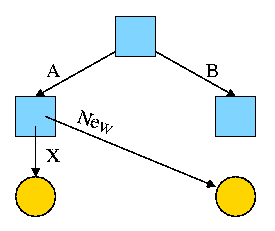

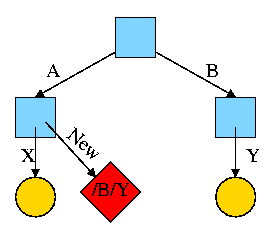

interface.

Note that the OS serves two masters. The hardware (below)

asynchronously sends interrupts and the user makes system

calls and generates page faults.

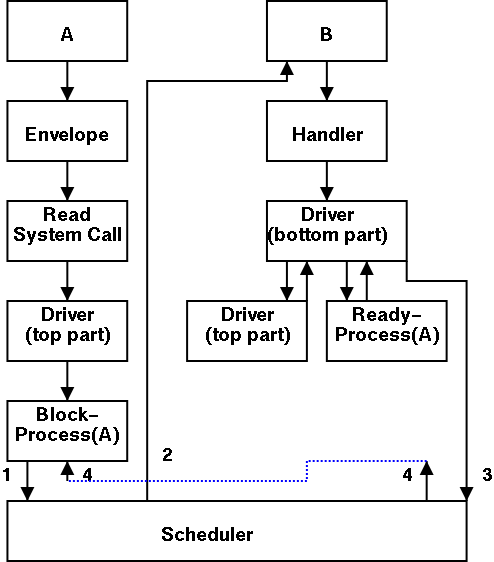

What happens when a user executes a system call such as read()?

We discuss this in much more detail later but briefly what happens is

- Normal function call (in C, Ada, etc.).

- Library routine (in C).

- Small assembler routine.

- Move arguments to predefined place (perhaps registers).

- Poof (a trap instruction) and then the OS proper runs in

supervisor mode.

- Fixup result (move to correct place).
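
The first two steps (an ordinary function call into a library routine) can be observed from Python by calling the C library's `read` wrapper through `ctypes`. This is a sketch that assumes a Unix-like system where the C library can be located. The names `read_via_libc` and `demo` are hypothetical. The trap itself happens inside the library routine and is not visible at this level.

```python
import ctypes
import ctypes.util
import os

# Load the C library; fall back to the symbols already linked into the process.
libc = ctypes.CDLL(ctypes.util.find_library("c") or None, use_errno=True)
libc.read.argtypes = [ctypes.c_int, ctypes.c_void_p, ctypes.c_size_t]
libc.read.restype = ctypes.c_ssize_t


def read_via_libc(fd, count):
    """Call the library routine read(fd, buf, count), which traps to the OS."""
    buffer = ctypes.create_string_buffer(count)
    got = libc.read(fd, buffer, count)
    if got < 0:
        raise OSError(ctypes.get_errno(), "read failed")
    return buffer.raw[:got]      # the result, moved to a convenient place


def demo():
    read_end, write_end = os.pipe()
    try:
        os.write(write_end, b"hello")
        return read_via_libc(read_end, 5)
    finally:
        os.close(read_end)
        os.close(write_end)


if __name__ == "__main__":
    print(demo())
```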

Homework: 6

1.3.4: The shell

Assumed knowledge

Homework: 9.

1.4: OS Structure

I must note that Tanenbaum is a big advocate of the so-called

microkernel approach in which as much as possible is moved out of the

(protected) microkernel into separate processes.

In the early 90s this was popular. Digital Unix (now called Tru64)

and Windows NT were examples. Digital Unix is based on Mach, a

research OS from Carnegie Mellon University. Lately, the growing

popularity of Linux has called into question the belief that ``all new

operating systems will be microkernel based''.

1.4.1: Monolithic approach

The previous picture: one big program

The system switches from user mode to kernel mode during the poof and

then back when the OS does a ``return''.

But of course we can structure the system better, which brings us to.

1.4.2: Layered Systems

Some systems have more layers and are more strictly structured.

An early layered system was ``THE'' operating system by Dijkstra. The

layers were:

- The operator

- User programs

- I/O management

- Operator-process communication

- Memory and drum management

The layering was done by convention, i.e. there was no enforcement by

hardware and the entire OS was linked together as one program. This is

true of many modern operating systems as well (e.g., Linux).

The Multics system was layered in a more formal manner. The hardware

provided several protection layers and the OS used them. That is,

arbitrary code could not jump to or access data in a more protected layer.

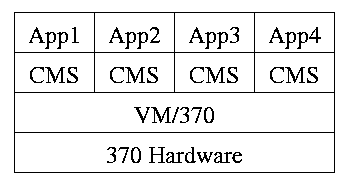

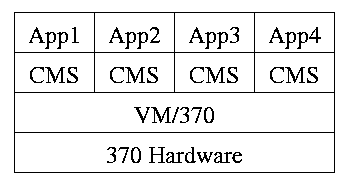

1.4.3: Virtual machines

Use a ``hypervisor'' (beyond supervisor, i.e. beyond a normal OS) to

switch between multiple operating systems.

- Each App/CMS runs on a virtual 370

- CMS is a single user OS

- A system call in an App traps to the corresponding CMS

- CMS believes it is running on the machine so issues I/O

instructions but ...

- ... I/O instructions in CMS trap to VM/370

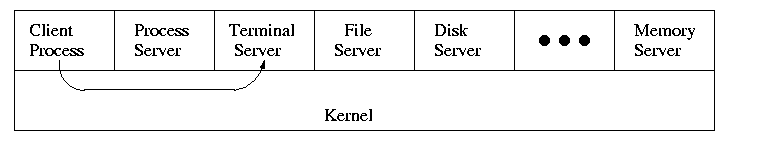

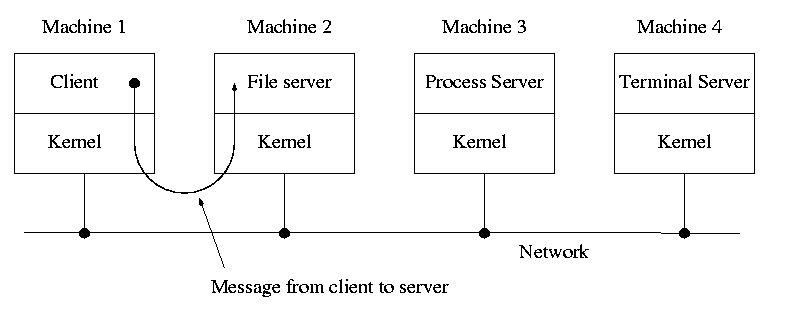

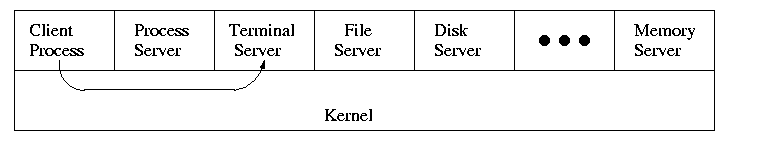

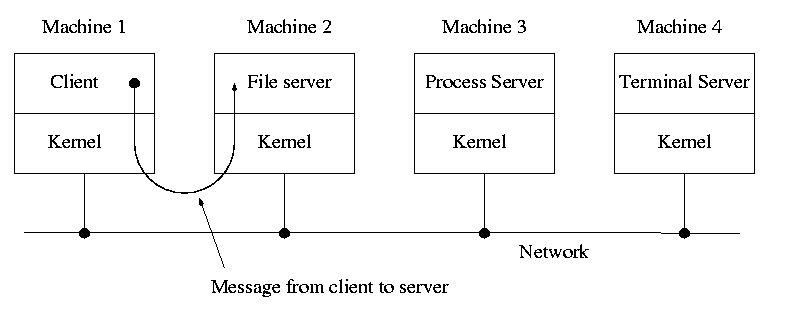

1.4.4: Client Server

When implemented on one computer, a client server OS is the

microkernel approach in which the microkernel just supplies

interprocess communication and the main OS functions are provided by a

number of separate processes.

This does have advantages. For example an error in the file server

cannot corrupt memory in the process server. This makes errors easier

to track down.

But it does mean that when a (real) user process makes a system call

there are more process switches. These are

not free.

A distributed system can be thought of as an extension of the

client server concept where the servers are remote.

Homework: 11

Chapter 2: Process Management

Tanenbaum's chapter title is ``processes''. I prefer process

management. The subject matter is processes, process scheduling,

interrupt handling, and IPC (Interprocess communication--and

coordination).

2.1: Processes

Definition: A process is a program

in execution.

- We are assuming a multiprogramming OS that

can switch from one process to another.

- Sometimes this is

called pseudoparallelism since one has the illusion of a

parallel processor.

- The other possibility is real

parallelism in which two or more processes are actually running

at once because the computer system is a parallel processor, i.e., has

more than one processor.

- We do not study real parallelism (parallel

processing, distributed systems, multiprocessors, etc) in this course.

2.1.1: The Process Model

Even though in actuality there are many processes running at once, the

OS gives each process the illusion that it is running alone.

- Virtual time: The time used by just this

process. Virtual time progresses at

a rate independent of other processes. Actually, this is false: the

virtual time is

typically incremented a little during system calls used for process

switching, so if there are more other processes, more ``overhead''

virtual time occurs.

- Virtual memory: The memory as viewed by the

process. Each process typically believes it has a contiguous chunk of

memory starting at location zero. Of course this can't be true of all

processes (or they would be using the same memory) and in modern

systems it is actually true of no processes (the memory assigned is

not contiguous and does not include location zero).

Virtual time and virtual memory are examples of abstractions

provided by the operating system to the user processes so that the

latter ``sees'' a more pleasant virtual machine than actually exists.

Process Hierarchies

- Modern general purpose operating systems permit a user to create and

destroy processes.

- In unix this is done by the fork

system call, which creates a child process, and the

exit system call, which terminates the current

process.

- After a fork both parent and child keep running (indeed they

have the same program text) and each can fork off other

processes.

- A process tree results. The root of the tree is a special

process created by the OS during startup.
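
A minimal sketch of fork and exit, using Python's `os` module on a Unix-like system. The function name `fork_children` and the exit codes are arbitrary choices for the example. The parent keeps running after each fork and forks again, so it ends up with several children.

```python
import os


def fork_children(count):
    """Fork `count` children and return the exit codes the parent collects."""
    children = []
    for i in range(count):
        pid = os.fork()
        if pid == 0:
            # Child: same program text as the parent, but fork returned 0.
            os._exit(10 + i)         # the exit system call ends this process
        children.append(pid)         # parent: keeps running and may fork again
    codes = []
    for pid in children:
        _, status = os.waitpid(pid, 0)
        codes.append(os.WEXITSTATUS(status))
    return codes


if __name__ == "__main__":
    print(fork_children(3))
```

Here the parent waits only after all the children exist, so for a while the tree is a parent with three children rather than a line.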

MS-DOS is not multiprogrammed so when one process starts another,

the first process is blocked and waits until the second is finished.

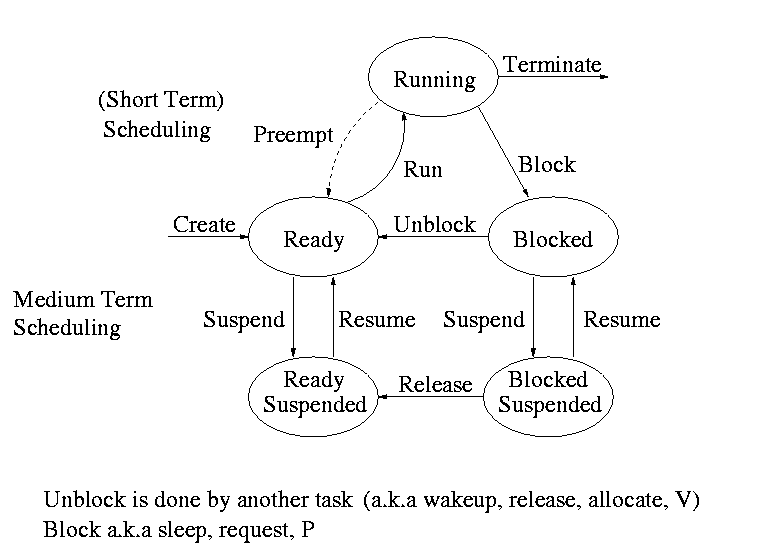

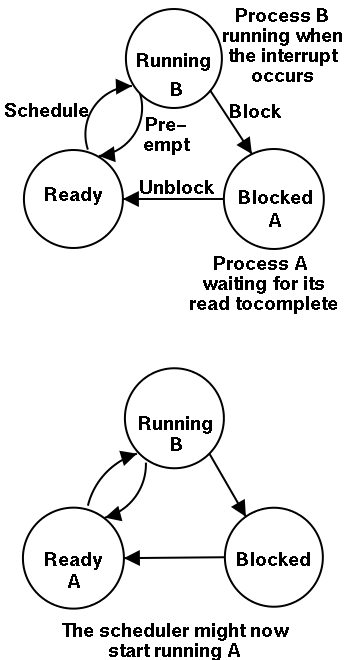

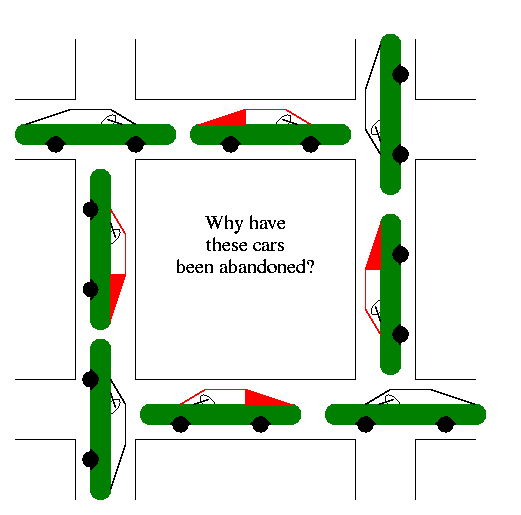

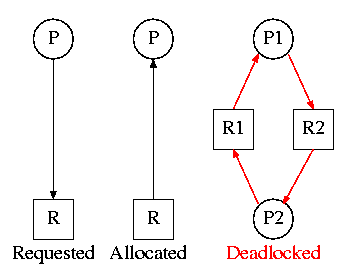

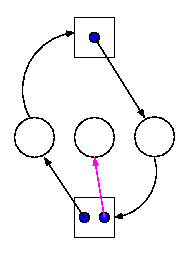

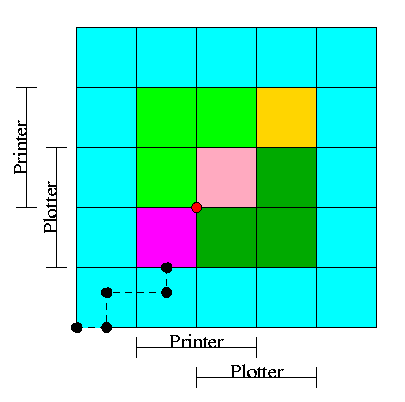

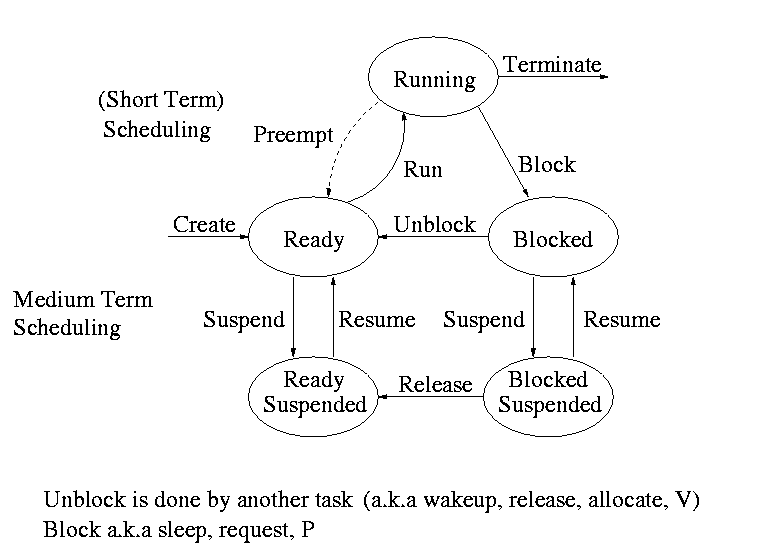

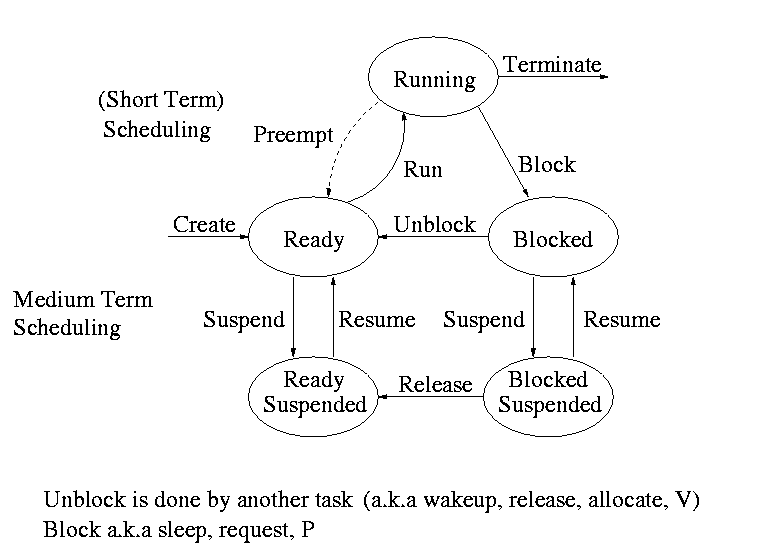

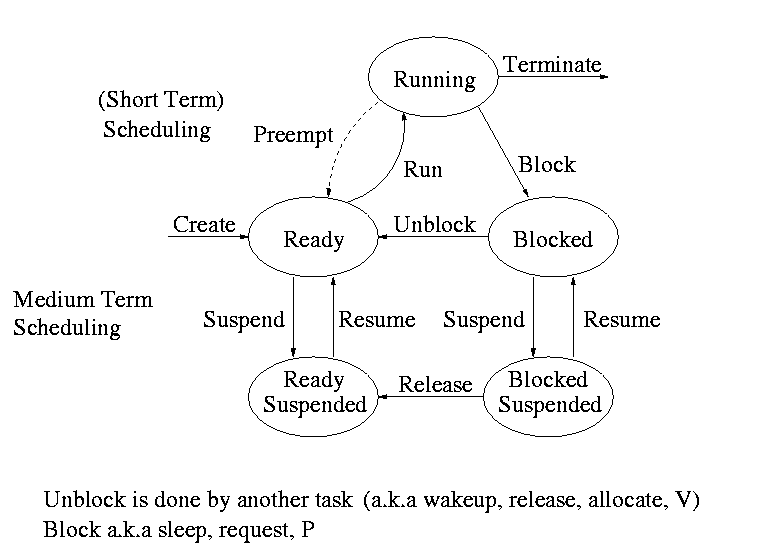

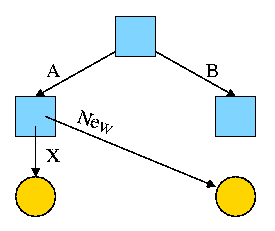

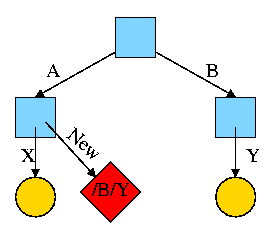

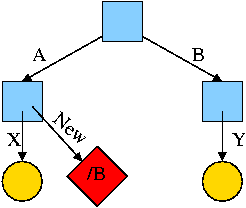

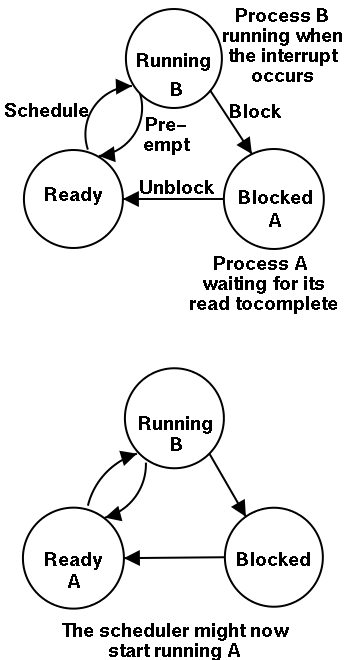

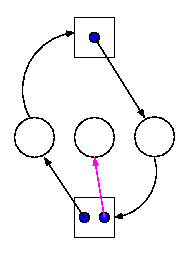

Process states and transitions

The above diagram contains a great deal of information.

- Consider a running process P that issues an I/O request

- The process blocks

- At some later point, a disk interrupt occurs and the driver

detects that P's request is satisfied.

- P is unblocked, i.e. is moved from blocked to ready

- At some later time the operating system looks for a ready job

to run and picks P.

- A preemptive scheduler has the dotted line preempt;

a non-preemptive scheduler doesn't.

- The number of processes changes only for two arcs: create and

terminate.

- Suspend and resume are medium term scheduling

- Done on a longer time scale.

- Involves memory management as well.

- Sometimes called two level scheduling.
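
The short-term part of the diagram can be written down as a transition table. This is a sketch under assumptions: the state names, the arc name `run`, and the choice that `create` leads to ready are guesses rather than readings of the figure, and suspend and resume are left out.

```python
# (current state, arc) -> next state; None stands for "process does not exist".
TRANSITIONS = {
    (None, "create"): "ready",
    ("ready", "run"): "running",
    ("running", "preempt"): "ready",       # only with a preemptive scheduler
    ("running", "block"): "blocked",
    ("blocked", "unblock"): "ready",
    ("running", "terminate"): None,
}


def step(state, arc):
    """Follow one arc, rejecting arcs that do not leave the current state."""
    if (state, arc) not in TRANSITIONS:
        raise ValueError(f"no arc {arc!r} from state {state!r}")
    return TRANSITIONS[(state, arc)]


def trace(arcs, state=None):
    """Return the sequence of states visited when following the given arcs."""
    visited = []
    for arc in arcs:
        state = step(state, arc)
        visited.append(state)
    return visited


if __name__ == "__main__":
    # Process P: created, run, issues I/O, request satisfied, picked again.
    print(trace(["create", "run", "block", "unblock", "run"]))
```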

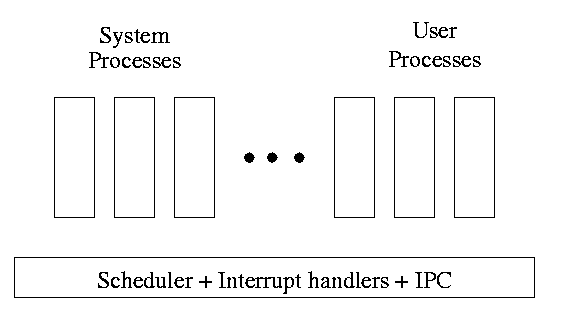

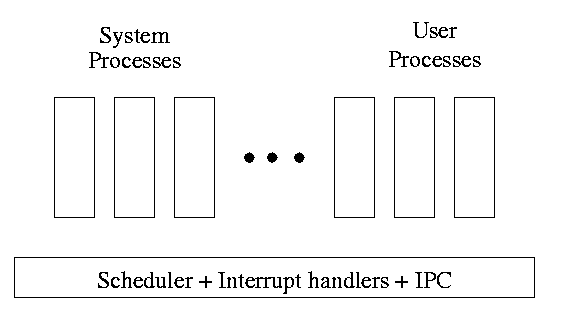

One can organize an OS around the scheduler.

- Write a minimal ``kernel'' consisting of the scheduler, interrupt

handlers, and IPC (interprocess communication)

- The rest of the OS consists of kernel processes (e.g. memory,

filesystem) that act as servers for the user processes (which of

course act as clients).

- The system processes also act as clients (of other system processes).

- The above is called the client-server model and is one Tanenbaum likes.

His ``Minix'' operating system works this way.

- Indeed, there was reason to believe that it would dominate. But that

hasn't happened.

- Such an OS is sometimes called server

based.

- Systems like traditional unix or linux would then be

called self-service since the user process serves itself.

- That is, the user process switches to kernel mode and performs

the system call.

- To repeat: the same process changes back and forth from/to

user<-->system mode and services itself.

2.1.3: Implementation of Processes

The OS organizes the data about each process in a table naturally

called the process table. Each entry in this table

is called a process table entry or PTE.

- One entry per process.

- The central data structure for process management.

- A process state transition (e.g., moving from blocked to ready) is

reflected by a change in the value of one or more

fields in the PTE.

- We have converted an active entity (process) into a data structure

(PTE). Finkel calls this the level principle ``an active

entity becomes a data structure when looked at from a lower level''.

- The PTE contains a great deal of information about the process.

For example,

- Saved value of registers when process not running

- Stack pointer

- CPU time used

- Process id (PID)

- Process id of parent (PPID)

- User id (uid and euid)

- Group id (gid and egid)

- Pointer to text segment (memory for the program text)

- Pointer to data segment

- Pointer to stack segment

- UMASK (default permissions for new files)

- Current working directory

- Many others
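
To make the idea of a process as a data structure concrete, here is a sketch of a process table in Python. The field names follow the list above, but their types, the default values, and the helper names are assumptions made for the example, not the layout of any real kernel.

```python
from dataclasses import dataclass, field


@dataclass
class ProcessTableEntry:
    pid: int
    ppid: int
    state: str = "ready"
    registers: dict = field(default_factory=dict)   # saved when not running
    stack_pointer: int = 0
    cpu_time: float = 0.0
    uid: int = 0
    euid: int = 0
    umask: int = 0o022
    cwd: str = "/"


process_table = {}     # one entry per process, indexed by pid


def add_process(pid, ppid, **fields):
    process_table[pid] = ProcessTableEntry(pid=pid, ppid=ppid, **fields)
    return process_table[pid]


def set_state(pid, new_state):
    """A state transition is just a change to a field of the entry."""
    process_table[pid].state = new_state


if __name__ == "__main__":
    add_process(1, 0, state="running")
    add_process(2, 1, state="blocked", cwd="/home")
    set_state(2, "ready")
    print(process_table[2])
```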

An aside on Interrupts

In a well defined location in memory (specified by the hardware) the

OS stores an interrupt vector, which contains the

address of the (first level) interrupt handler.

- Tanenbaum calls the interrupt handler the interrupt service routine.

- Actually one can have different priorities of interrupts and the

interrupt vector contains one pointer for each level. This is why it is

called a vector.

Assume a process P is running and a disk interrupt occurs for the

completion of a disk read previously issued by process Q, which is

currently blocked. Note that interrupts are unlikely to be for the

currently running process (because the process waiting for the

interrupt is likely blocked).

- The hardware stacks the program counter etc (possibly some

registers)

- Hardware loads new program counter from the interrupt vector.

- Loading the program counter causes a jump

- These first two (hardware) steps are similar to a procedure call, but the

interrupt is asynchronous.

- Assembly language routine saves registers

- Assembly routine sets up new stack

- These last two steps can be called setting up the C environment

- Assembly routine calls C procedure (Tanenbaum forgot this one)

- C procedure does the real work

- Determines what caused the interrupt (in this case a disk

completed an I/O)

- How does it figure out the cause?

- Which priority interrupt was activated.

- The controller can write data in memory before the

interrupt

- The OS can read registers in the controller

- Mark process Q as ready to run.

- That is move Q to the ready list (note that again

we are viewing Q as a data structure).

- The state of Q is now ready (it was blocked before).

- The code that Q needs to run initially is likely to be OS

code. For example, Q probably needs to copy the data just

read from a kernel buffer into user space.

- Now we have at least two processes ready to run: P and Q

- The scheduler decides which process to run (P or Q or

something else). Let's assume that the decision is to run P.

- The C procedure (that did the real work in the interrupt

processing) continues and returns to the assembly code.

- Assembly language restores P's state (e.g., registers) and starts

P at the point it was when the interrupt occurred.
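
The sequence above can be mimicked by a toy simulation. Everything here is hypothetical: the table layout, the dictionary standing in for the interrupt vector, and the scheduling decision (keep running P, as assumed in the text). Real interrupt handling is done by hardware and assembly code, not by a Python function.

```python
def disk_handler(table, event):
    """The 'real work': the read issued by the waiting process has completed."""
    table[event["for_pid"]]["state"] = "ready"      # Q moves from blocked to ready


# Stand-in for the interrupt vector: one handler address per interrupt level.
INTERRUPT_VECTOR = {"disk": disk_handler}


def interrupt(table, running_pid, cpu_registers, event):
    """Simulate one interrupt arriving while `running_pid` is on the CPU."""
    table[running_pid]["saved"] = dict(cpu_registers)   # save P's registers
    handler = INTERRUPT_VECTOR[event["level"]]          # load new pc from vector
    handler(table, event)                               # handler does its work
    next_pid = running_pid                              # scheduler picks P again
    cpu_registers.clear()
    cpu_registers.update(table[next_pid]["saved"])      # restore P's state
    return next_pid


if __name__ == "__main__":
    table = {"P": {"state": "running"}, "Q": {"state": "blocked"}}
    registers = {"pc": 40, "sp": 900}
    print(interrupt(table, "P", registers, {"level": "disk", "for_pid": "Q"}))
    print(table["Q"]["state"], registers)
```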

2.2: Interprocess Communication (IPC) and Process Coordination and Synchronization

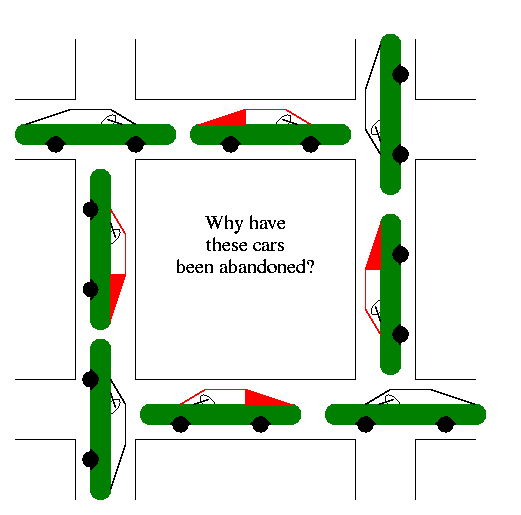

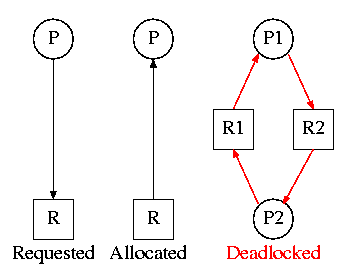

2.2.1: Race Conditions

A race condition occurs when two processes can

interact and the outcome depends on the order in which the processes

execute.

- Imagine two processes both accessing x, which is initially 10.

- One process is to execute x <-- x+1

- The other is to execute x <-- x-1

- When both are finished x should be 10

- But we might get 9 and might get 11!

- Show how this can happen (x <-- x+1 is not atomic)

- Tanenbaum shows how this can lead to disaster for a printer

spooler
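
One way to see how 9 and 11 arise is to split each update into a load and a store and try every interleaving. The sketch below does this by simulation rather than with real processes. The two-step split and the names `run` and `all_outcomes` are modelling assumptions.

```python
from itertools import combinations


def run(schedule):
    """Execute one interleaving; schedule is a string such as 'ABAB'."""
    x = 10
    delta = {"A": +1, "B": -1}      # A does x <-- x+1, B does x <-- x-1
    register = {}                   # each process's private copy of x
    for who in schedule:
        if who not in register:     # first step: load x into a register
            register[who] = x
        else:                       # second step: store the updated register
            x = register[who] + delta[who]
    return x


def all_outcomes():
    """Map every interleaving of the four steps to the final value of x."""
    outcomes = {}
    for a_slots in combinations(range(4), 2):
        schedule = "".join("A" if i in a_slots else "B" for i in range(4))
        outcomes[schedule] = run(schedule)
    return outcomes


if __name__ == "__main__":
    for schedule, value in sorted(all_outcomes().items()):
        print(schedule, value)
```

Only the schedules in which one process finishes before the other loads x give 10. Whenever both load before either stores, the last store wins.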

Homework: 2

2.2.2: Critical sections

We must prevent interleaving sections of code that need to be atomic with

respect to each other. That is, the conflicting sections need

mutual exclusion. If process A is executing its

critical section, it excludes process B from executing its critical

section. Conversely if process B is executing its critical section, it

excludes process A from executing its critical section.

Requirements for a critical section implementation.

- No two processes may be simultaneously inside their critical

section

- No assumption may be made about the speeds or the number of CPUs

- No process outside its critical section may block other processes

- No process should have to wait forever to enter its critical

section

- I do NOT make this last requirement.

- I just require that the system as a whole make progress (so not

all processes are blocked)

- I refer to solutions that do not satisfy Tanenbaum's last

condition as unfair, but nonetheless correct, solutions

- Stronger fairness conditions can also be defined

2.2.3 Mutual exclusion with busy waiting

The operating system can choose not to preempt itself. That is, no

preemption for system processes (if the OS is client server) or for

processes running in system mode (if the OS is self service).

Forbidding preemption for system processes would prevent the problem

above where x<--x+1 not being atomic crashed the printer spooler if

the spooler is part of the OS.

But this is not adequate

- Does not work for user programs. So the Unix printer spooler would

not be helped.

- Does not prevent conflicts between the main line OS and interrupt

handlers

- This conflict could be prevented by blocking interrupts while the main

line is in its critical section.

- Indeed, blocking interrupts is often done for exactly this reason.

- Do not want to block interrupts for too long or the system

will seem unresponsive

- Does not work if the system has several processors

- Both main lines can conflict

- One processor cannot block interrupts on the other

Software solutions for two processes

Initially P1wants=P2wants=false

Code for P1                             Code for P2

Loop forever {                          Loop forever {

    P1wants <-- true          ENTRY         P2wants <-- true

    while (P2wants) {}        ENTRY         while (P1wants) {}

    critical-section                        critical-section

    P1wants <-- false         EXIT          P2wants <-- false

    non-critical-section }                  non-critical-section }

Explain why this works.

But it is wrong! Why?

Let's try again. The trouble was that setting want before the

loop permitted us to get stuck. We had them in the wrong order!

Initially P1wants=P2wants=false

Code for P1                             Code for P2

Loop forever {                          Loop forever {

    while (P2wants) {}        ENTRY         while (P1wants) {}

    P1wants <-- true          ENTRY         P2wants <-- true

    critical-section                        critical-section

    P1wants <-- false         EXIT          P2wants <-- false

    non-critical-section }                  non-critical-section }

Explain why this works.

But it is wrong again! Why?
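
Both attempts can be checked mechanically by exploring every interleaving of the two processes. The sketch below is a simplified model under assumptions: the processes are numbered 0 and 1, each line of the code is one atomic step, and the non-critical section takes no time. It reports whether some reachable state has both processes in the critical section and whether some reachable state has both spinning forever.

```python
from collections import deque

CS = 2   # program counter value meaning "inside the critical section"


def put(values, i, value):
    """Return a copy of the tuple `values` with position i replaced."""
    return values[:i] + (value,) + values[i + 1:]


def first_attempt(i, pc, wants):
    other = 1 - i
    if pc[i] == 0:                                   # wants[i] <-- true
        return put(pc, i, 1), put(wants, i, True)
    if pc[i] == 1:                                   # while (wants[other]) {}
        return None if wants[other] else (put(pc, i, CS), wants)
    return put(pc, i, 0), put(wants, i, False)       # leave the CS


def second_attempt(i, pc, wants):
    other = 1 - i
    if pc[i] == 0:                                   # while (wants[other]) {}
        return None if wants[other] else (put(pc, i, 1), wants)
    if pc[i] == 1:                                   # wants[i] <-- true
        return put(pc, i, CS), put(wants, i, True)
    return put(pc, i, 0), put(wants, i, False)       # leave the CS


def explore(step):
    """Return (both_in_cs, stuck) over all reachable states."""
    start = ((0, 0), (False, False))
    seen, queue = {start}, deque([start])
    both_in_cs = stuck = False
    while queue:
        pc, wants = queue.popleft()
        if pc == (CS, CS):
            both_in_cs = True
        moves = [step(i, pc, wants) for i in (0, 1)]
        if moves == [None, None]:                    # both spin, nothing changes
            stuck = True
        for state in moves:
            if state is not None and state not in seen:
                seen.add(state)
                queue.append(state)
    return both_in_cs, stuck


if __name__ == "__main__":
    print("first attempt: ", explore(first_attempt))
    print("second attempt:", explore(second_attempt))
```

In this model the first attempt never violates mutual exclusion but can get stuck, while the second never gets stuck but lets both processes into the critical section.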

So let's be polite and really take turns. None of this wanting stuff.

Initially turn=1

Code for P1                             Code for P2

Loop forever {                          Loop forever {

    while (turn = 2) {}                     while (turn = 1) {}

    critical-section                        critical-section

    turn <-- 2                              turn <-- 1

    non-critical-section }                  non-critical-section }

This one forces alternation, so is not general enough. Specifically,

it does not satisfy condition three, which requires that no process in

its non-critical section can stop another process from entering its

critical section. With alternation, if one process is in its

non-critical section (NCS) then the other can enter the CS once but

not again.

In fact, it took years (way back when) to find a correct solution.

Many earlier ``solutions'' were found and several were published, but

all were wrong.

The first true solution was found by Dekker. It is very clever, but I am

skipping it (I cover it when I teach OS II). Subsequently, algorithms with

better fairness properties were found (e.g. no task has to wait for

another task to enter the CS twice).

================ Start Lecture #3 ================

Note:

- Lab 1 due date extended 1 week (too many typos!). It is now due 9

October.

- Added 30 minutes to office hours 5-5:30 wed, mostly for this class.

- Will do some more on lab1 in about 10 minutes.

Jesse Wei-Yeh Chu writes:

> The last program text in Module no. 4 of input set 2 has only 4 digits

> (4999). Is this a typo or an intended error for our linker to catch?

Typo!

> Also for input no. 2, there is a discrepancy between the pdf file and the

> text file. Module 5 in pdf indicates both definition and use list as 00,

> whereas the text version contains only one 00.

The text version is wrong. A filter I used to save space squashed

duplicate lines (thinking they were blanks). Alas it doesn't work

here.

I fixed both errors.

End of Note.

What follows is Peterson's solution. When it was published, it was a

surprise to see such a simple solution. In fact Peterson gave a

solution for any number of processes. A proof that the algorithm

satisfies our properties (including a strong fairness condition) can

be found in Operating Systems Review Jan 1990, pp. 18-22.

Initially P1wants=P2wants=false and turn=1

Code for P1 Code for P2

Loop forever { Loop forever {

P1wants <-- true P2wants <-- true

turn <-- 2 turn <-- 1

while (P2wants and turn=2) {} while (P1wants and turn=1) {}

critical-section critical-section

P1wants <-- false P2wants <-- false

non-critical-section } non-critical-section }
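
One way to convince yourself that the code above gives mutual exclusion is to enumerate every interleaving mechanically. The following is a minimal sketch, not a proof: it assumes each pseudocode line (including the whole test in the while) is one atomic step, numbers the processes 0 and 1 instead of 1 and 2, and uses hypothetical names (`step`, `reachable_states`) that are not part of the notes.

```python
from collections import deque

# Program counters: 0 = set "wants", 1 = set turn, 2 = spin, 3 = in the CS.


def step(state, i):
    """Return the state after process i (0 or 1) executes one atomic step."""
    pc, wants, turn = list(state[0]), list(state[1]), state[2]
    other = 1 - i
    if pc[i] == 0:            # Piwants <-- true
        wants[i] = True
        pc[i] = 1
    elif pc[i] == 1:          # turn <-- other
        turn = other
        pc[i] = 2
    elif pc[i] == 2:          # while (otherwants and turn=other) {}
        if not (wants[other] and turn == other):
            pc[i] = 3         # leave the spin loop and enter the CS
    else:                     # EXIT: Piwants <-- false, then the NCS
        wants[i] = False
        pc[i] = 0
    return (tuple(pc), tuple(wants), turn)


def reachable_states():
    """Breadth-first search over every interleaving of the two processes."""
    start = ((0, 0), (False, False), 0)
    seen = {start}
    frontier = deque([start])
    while frontier:
        state = frontier.popleft()
        for i in (0, 1):
            nxt = step(state, i)
            if nxt not in seen:
                seen.add(nxt)
                frontier.append(nxt)
    return seen


def mutual_exclusion_holds():
    """True if no reachable state has both processes in the critical section."""
    return all(pc != (3, 3) for pc, _, _ in reachable_states())


print(mutual_exclusion_holds())
```

The state space is tiny, so the search finishes immediately. Deleting the `turn` test from the spin condition and running the search again is a quick way to see an incorrect variant fail (or hang a process), under the same atomicity assumption.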

Hardware assist (test and set)

TAS(b) where b is a binary variable

ATOMICALLY sets b<--true and returns the OLD value of b.

Of course it would be silly to return the new value of b since we know

the new value is true.

Now implementing a critical section for any number of processes is trivial.

loop forever {

while (TAS(s)) {} ENTRY

CS

s<--false EXIT

NCS }
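
The loop above can be tried out in Python. This is only a sketch under a loud assumption: Python has no test-and-set instruction, so the hypothetical `TASCell` class fakes the hardware atomicity with an internal `threading.Lock`. That inner lock stands in for the hardware only; the mutual exclusion of the critical section comes from the spin loop on `test_and_set`.

```python
import threading
import time


class TASCell:
    """A boolean with an atomic test-and-set, standing in for the hardware."""

    def __init__(self):
        self._value = False
        self._guard = threading.Lock()   # simulates hardware atomicity only

    def test_and_set(self):
        """Atomically set the cell to true and return its OLD value."""
        with self._guard:
            old = self._value
            self._value = True
            return old

    def clear(self):
        self._value = False              # s <-- false


def run_workers(num_workers=4, iterations=500):
    """Each worker increments a shared counter inside the critical section."""
    s = TASCell()
    counter = 0

    def worker():
        nonlocal counter
        for _ in range(iterations):
            while s.test_and_set():      # ENTRY: spin until the old value was false
                time.sleep(0)            # yield so the current holder can run
            counter += 1                 # CS: a read-modify-write on shared data
            s.clear()                    # EXIT

    threads = [threading.Thread(target=worker) for _ in range(num_workers)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return counter
```

If the entry and exit code work, `run_workers(4, 500)` returns exactly 2000 because no increment is lost.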

P and V and Semaphores

Note: Tanenbaum does both busy waiting (like above)

and blocking (process switching) solutions. We will only do busy

waiting.

Homework: 3

The entry code is often called P and the exit code V (Tanenbaum only

uses P and V for blocking, but we use it for busy waiting). So the

critical section problem is to write P and V so that

loop forever

P

critical-section

V

non-critical-section

satisfies

- Mutual exclusion

- No speed assumptions

- No blocking by processes in NCS

- Forward progress (my weakened version of Tanenbaum's last condition)

Note that I use indenting carefully and hence do not need (and

sometimes omit) the braces {}

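A binary semaphore abstracts the TAS solution we gave

for the critical section problem.

- A binary semaphore S takes on two possible values, ``open'' and ``closed''.

- Two operations are supported

- P(S) is

while (S=closed)

S <-- closed

where finding S=open and setting S<--closed is atomic

- V(S) is simply S <-- open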

The above code is not real, i.e., it is not an

implementation of P. It is, instead, a definition of the effect P is

to have.

To repeat: for any number of processes, the critical section problem can be

solved by

loop forever

P(S)

CS

V(S)

NCS

The only specific solution we have seen for an arbitrary number of

processes is the one just above with P(S) implemented via

test and set.

Remark: Peterson's solution requires each process to

know its processor number. The TAS solution does not. Thus, strictly

speaking Peterson did not provide an implementation of P and V. He

did solve the critical section problem.

To solve other coordination problems we want to extend binary

semaphores.

- With binary semaphores, two consecutive Vs do not permit two

subsequent Ps to succeed (the gate cannot be doubly opened).

- We might want to limit the number of processes in the section to

3 or 4, not always just 1.

The solution to both of these shortcomings is to remove the

restriction to a binary variable and define a

generalized or counting semaphore.

- A counting semaphore S takes on non-negative integer values

- Two operations are supported

- P(S) is

while (S=0)

S--

where finding S>0 and decrementing S is atomic

- That is, wait until the gate is open (positive), then run through and

atomically close the gate one unit

- Said another way, it is not possible for two processes doing P(S)

simultaneously to both see the same positive value of S unless a V(S)

is also simultaneous.

- V(S) is simply S++

These counting semaphores can solve what I call the

semi-critical-section problem, where you permit up to k

processes in the section. When k=1 we have the original

critical-section problem.

initially S=k

loop forever

P(S)

SCS <== semi-critical-section

V(S)

NCS
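
A busy-waiting counting semaphore and the semi-critical-section loop can be sketched as follows. The class name `BusyWaitSemaphore`, the helper `max_in_section`, and the use of an internal `threading.Lock` to supply the atomicity that the definition of P(S) assumes are all choices made for this illustration, not part of the notes.

```python
import threading
import time


class BusyWaitSemaphore:
    """Counting semaphore with a spinning P, following the definition above."""

    def __init__(self, initial):
        self._value = initial
        self._guard = threading.Lock()   # stands in for the assumed atomicity

    def P(self):
        while True:
            with self._guard:            # finding S>0 and doing S-- is one atomic action
                if self._value > 0:
                    self._value -= 1
                    return
            time.sleep(0)                # gate closed: yield, then test again

    def V(self):
        with self._guard:
            self._value += 1             # S++


def max_in_section(k=3, num_workers=6, rounds=5):
    """Run workers through the semi-critical section; return peak occupancy."""
    S = BusyWaitSemaphore(k)             # initially S=k
    stats = threading.Lock()             # protects the bookkeeping only
    inside = 0
    peak = 0

    def worker():
        nonlocal inside, peak
        for _ in range(rounds):
            S.P()
            with stats:
                inside += 1
                peak = max(peak, inside)
            time.sleep(0.001)            # SCS: pretend to do some work
            with stats:
                inside -= 1
            S.V()

    threads = [threading.Thread(target=worker) for _ in range(num_workers)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return peak
```

With k=3 the returned peak should never exceed 3, and with k=1 the same code is the ordinary critical-section solution.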

Producer-consumer problem

- Two classes of processes

- Producers, which produce items and insert them into a buffer.

- Consumers, which remove items and consume them.

- What if the producer encounters a full buffer?

Answer: Block it.

- What if the consumer encounters an empty buffer?

Answer: Block it.

- Also called the bounded buffer problem.

- Another example of active entities being replaced by a data

structure when viewed at a lower level (Finkel's level principle).

Initially e=k, f=0 (counting semaphore); b=open (binary semaphore)

Producer Consumer

loop forever loop forever

produce-item P(f)

P(e) P(b); take item from buf; V(b)

P(b); add item to buf; V(b) V(e)

V(f) consume-item

- k is the size of the buffer

- e represents the number of empty buffer slots

- f represents the number of full buffer slots

- We assume the buffer itself is only serially accessible. That is,

only one operation at a time.

- This explains the P(b) V(b) around buffer operations

- I use ; and put three statements on one line to suggest that

a buffer insertion or removal is viewed as one atomic operation.

- Of course this writing style is only a convention, the

enforcement of atomicity is done by the P/V.

- The P(e), V(f) motif is used to force bounded alternation. If k=1

it gives strict alternation.
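
The producer and consumer loops translate almost line for line into Python. Note the assumption: this sketch uses the standard `threading.Semaphore`, whose `acquire` blocks rather than busy waits, so it corresponds to the blocking variant that we otherwise skip. The function name `run_bounded_buffer` and the item format are made up for the example.

```python
import threading
from collections import deque


def run_bounded_buffer(k=3, producers=2, consumers=2, items_each=50):
    """Move producers*items_each items through a k-slot buffer.

    Returns (sorted list of consumed items, largest buffer occupancy seen).
    Assumes producers*items_each is divisible by consumers.
    """
    e = threading.Semaphore(k)    # counts empty slots, initially k
    f = threading.Semaphore(0)    # counts full slots, initially 0
    b = threading.Semaphore(1)    # binary semaphore, initially open
    buf = deque()
    peak = 0
    share = producers * items_each // consumers
    taken = [[] for _ in range(consumers)]

    def producer(pid):
        nonlocal peak
        for n in range(items_each):
            item = (pid, n)                       # produce-item
            e.acquire()                           # P(e)
            b.acquire(); buf.append(item); peak = max(peak, len(buf)); b.release()
            f.release()                           # V(f)

    def consumer(cid):
        for _ in range(share):
            f.acquire()                           # P(f)
            b.acquire(); item = buf.popleft(); b.release()
            e.release()                           # V(e)
            taken[cid].append(item)               # consume-item

    threads = [threading.Thread(target=producer, args=(p,)) for p in range(producers)]
    threads += [threading.Thread(target=consumer, args=(c,)) for c in range(consumers)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return sorted(item for items in taken for item in items), peak
```

Every produced item should come out exactly once, and the recorded peak occupancy should never exceed k, which is what the P(e), V(f) pairing is for.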

Dining Philosophers

A classical problem from Dijkstra

- 5 philosophers sitting at a round table

- Each has a plate of spaghetti

- There is a fork between each two

- Need two forks to eat

What algorithm do you use for access to the shared resource (the

forks)?

- The obvious solution (pick up right; pick up left) deadlocks.

- Big lock around everything serializes.

- Good code in the book.

The purpose of mentioning the Dining Philosophers problem without giving

the solution is to give a feel of what coordination problems are like.

The book gives others as well. We are skipping these (again this

material would be covered in a sequel course). If you are interested

look, for example, at

http://allan.ultra.nyu.edu/~gottlieb/courses/1997-98-spring/os/class-notes.html.

Homework: 14,15 (these have short answers but are

not easy).

Readers and writers

- Two classes of processes.

- Readers, which can work concurrently.

- Writers, which need exclusive access.

- Must prevent 2 writers from being concurrent.

- Must prevent a reader and a writer from being concurrent.

- Must permit readers to be concurrent when no writer is active.

- Perhaps want fairness (i.e., freedom from starvation).

- Variants

- Writer-priority readers/writers.

- Reader-priority readers/writers.

Quite useful in multiprocessor operating systems. The ``easy way

out'' is to treat all processes as writers in which case the problem

reduces to mutual exclusion (P and V). The disadvantage of the easy

way out is that you give up reader concurrency.

Again for more information see the web page referenced above.

================ Start Lecture #4

================

Note:

Announce grader assignments (show home page).

2.4: Process Scheduling

Scheduling the processor is often called ``process scheduling'' or simply

``scheduling''.

The objectives of a good scheduling policy include

- Fairness.

- Efficiency.

- Low response time (important for interactive jobs).

- Low turnaround time (important for batch jobs).

- High throughput [the above are from Tanenbaum].

- Repeatability. Dartmouth (DTSS) ``wasted cycles'' and limited

logins for repeatability.

- Fair across projects.

- ``Cheating'' in unix by using multiple processes.

- TOPS-10.

- Fair share research project.

- Degrade gracefully under load.

Recall the basic diagram describing process states

For now we are discussing short-term scheduling, i.e., the arcs

connecting running <--> ready.

Medium term scheduling is discussed later.

Preemption

It is important to distinguish preemptive from non-preemptive

scheduling algorithms.

- Preemption means the operating system moves a process from running

to ready without the process requesting it.

- Without preemption, the system implements ``run to completion (or

yield or block)''.

- The ``preempt'' arc in the diagram.

- We do not consider yield (a solid arrow from running to ready).

- Preemption needs a clock interrupt (or equivalent).

- Preemption is needed to guarantee fairness.

- Found in all modern general purpose operating systems.

- Even non preemptive systems can be multiprogrammed (e.g., when processes

block for I/O).

Deadline scheduling

This is used for real time systems. The objective of the scheduler is

to find a schedule for all the tasks (there is a fixed set of tasks)

so that each meets its deadline. The run time of each task is known

in advance.

Actually it is more complicated.

- Periodic tasks

- What if we can't schedule all tasks so that each meets its deadline

(i.e., what should be the penalty function)?

- What if the run-time is not constant but has a known probability

distribution?

We do not cover deadline scheduling in this course.

The name game

There is an amazing inconsistency in naming the different

(short-term) scheduling algorithms. Over the years I have used

primarily 4 books: In chronological order they are Finkel, Deitel,

Silberschatz, and Tanenbaum. The table just below illustrates the

name game for these four books. After the table we discuss each

scheduling policy in turn.

| Finkel | Deitel | Silberschatz | Tanenbaum | Remark |
|---|---|---|---|---|
| FCFS | FIFO | FCFS | -- | unnamed in Tanenbaum |
| RR | RR | RR | RR | |
| PS | ** | PS | PS | |
| SRR | ** | SRR | ** | not in Tanenbaum |
| SPN | SJF | SJF | SJF | |
| PSPN | SRT | PSJF/SRTF | -- | unnamed in Tanenbaum |
| HPRN | HRN | ** | ** | not in Tanenbaum |
| ** | ** | MLQ | ** | only in Silberschatz |
| FB | MLFQ | MLFQ | MQ | |

First Come First Served (FCFS, FIFO, FCFS, --)

If the OS ``doesn't'' schedule, it still needs to store the process table entries (PTEs)

somewhere. If it is a queue you get FCFS. If it is a stack

(strange), you get LCFS. Perhaps you could get some sort of random

policy as well.

- Only FCFS is considered.

- The simplest scheduling policy.

- Non-preemptive.

Round Robin (RR, RR, RR, RR)

- An important preemptive policy.

- Essentially the preemptive version of FCFS.

- The key parameter is the quantum size q.

- When a process is put into the running state a timer is set to q.

- If the timer goes off and the process is still running, the OS

preempts the process.

- This process is moved to the ready state (the

preempt arc in the diagram), where it is placed at the

rear of the ready list (a queue).

- The process at the front of the ready list is removed from

the ready list and run (i.e., moves to state running).

- When a process is created, it is placed at the rear of the ready list.

- As q gets large, RR approaches FCFS

- As q gets small, RR approaches PS (Processor Sharing, described next)

- What value of q should we choose?

- Tradeoff

- Small q makes system more responsive.

- Large q makes system more efficient since less process switching.
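
The RR rules above fit in a short simulator. This is a sketch under stated assumptions: context switch time is zero, no process blocks for I/O, and a process created at the instant a quantum expires joins the ready list ahead of the preempted process (the notes do not fix this tie-break). The process set in the usage note is made up.

```python
from collections import deque


def round_robin(processes, q):
    """Simulate RR with quantum q and zero context switch time.

    processes: list of (name, cpu_time, creation_time).
    Returns a dict mapping each name to its finishing time.
    """
    pending = deque(sorted(processes, key=lambda p: p[2]))
    ready = deque()                      # entries are [name, remaining_cpu]
    finish = {}
    clock = 0

    def admit(now):
        # Newly created processes go to the rear of the ready list.
        while pending and pending[0][2] <= now:
            name, cpu, _ = pending.popleft()
            ready.append([name, cpu])

    admit(clock)
    while pending or ready:
        if not ready:                    # CPU idle until the next creation
            clock = pending[0][2]
            admit(clock)
            continue
        name, remaining = ready.popleft()    # front of the ready list runs
        ran = min(q, remaining)
        clock += ran
        remaining -= ran
        admit(clock)                     # arrivals queue ahead of the preempted process
        if remaining > 0:
            ready.append([name, remaining])  # preempt: back to the rear
        else:
            finish[name] = clock
    return finish
```

For the hypothetical set `[("A", 5, 0), ("B", 3, 1), ("C", 1, 2)]`, q=2 gives finishing times A=9, B=8, C=5, whereas q=100 behaves like FCFS and gives A=5, B=8, C=9.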

Homework: 9, 19, 20, 21, and the following:

Consider the set of processes in the table below.

When does each process finish if RR scheduling is used with q=1, if

q=2, if q=3, if q=100. First assume (unrealistically) that context

switch time is zero. Then assume it is .1.

Each process performs no

I/O (i.e., no process ever blocks). All times are in milliseconds.

The CPU time is the total time required for the process (excluding

context switch time). The creation

time is the time when the process is created. So P1 is created when

the problem begins and P2 is created 3 milliseconds later.

| Process | CPU Time | Creation Time |
|---|---|---|
| P1 | 20 | 0 |
| P2 | 3 | 3 |
| P3 | 2 | 5 |

Processor Sharing (PS, **, PS, PS)

Merge the ready and running states and permit all ready jobs to be run

at once. However, the processor slows down so that when n jobs are

running at once each progresses at a speed 1/n as fast as it would if

it were running alone.

- Clearly impossible as stated due to the overhead of process

switching.

- Of theoretical interest (easy to analyze).

- Approximated by RR when the quantum is small. Make

sure you understand this last point. For example,

consider the last homework assignment (with zero context switch time)

and consider q=1, q=.1, q=.01, etc.

Homework: 18.

Variants of Round Robin

- State dependent RR

- Same as RR but q is varied dynamically depending on the state

of the system.

- Favor processes holding important resources.

- For example, non-swappable memory.

- Perhaps this should be considered medium term scheduling

since you probably do not recalculate q each time.

- External priorities: RR but a user can pay more and get

bigger q. That is one process can be given a higher priority than

another. But this is not an absolute priority, i.e., the lower priority

(i.e., less important) process does get to run, but not as much as the

high priority process.

Priority Scheduling

Each job is assigned a priority (externally, perhaps by charging

more for higher priority) and the highest priority ready job is run.

- Similar to ``External priorities'' above

- If many processes have the highest priority, use RR among them.

- Can easily starve processes (see aging below for fix).

- Can have the priorities changed dynamically to favor processes

holding important resources (similar to state dependent RR).

- Many policies can be thought of as priority scheduling in

which we run the job with the highest priority (with different notions

of priority for different policies).

Priority aging

As a job is waiting, raise its priority so eventually it will have the

maximum priority.

- This prevents starvation (assuming all jobs terminate).

- There may be many processes with the maximum priority.

- If so, use RR among those with max priority.

- Can apply priority aging to many policies, in particular to priority

scheduling described above.

Homework: 22, 23

Selfish RR (SRR, **, SRR, **)

- Preemptive.

- Perhaps it should be called ``snobbish RR''.

- ``Accepted processes'' run RR.

- Accepted processes have their priority increase at rate b>=0.

- A new process starts at priority 0; its priority increases at rate a>=0.

- A new process becomes an accepted process when its priority

reaches that of an accepted process (or when there are no accepted

processes).

- Note that at any time all accepted processes have the same priority.

- If b>=a, get FCFS.

- If b=0, get RR.

- If a>b>0, it is interesting.

Shortest Job First (SPN, SJF, SJF, SJF)

Sort jobs by total execution time needed and run the shortest first.

- Nonpreemptive

- First consider a static situation where all jobs are available in

the beginning, we know how long each one takes to run, and we

implement ``run-to-completion'' (i.e., we don't even switch to another

process on I/O). In this situation, SJF has the shortest average

waiting time.

- Assume you have a schedule with a long job right before a

short job.

- Consider swapping the two jobs.

- This decreases the wait for

the short job by the length of the long job and increases the wait of the

long job by the length of the short job.

- This decreases the total waiting time for these two.

- Hence decreases the total waiting for all jobs and hence decreases

the average waiting time as well.

- Hence, whenever a long job is right before a short job, we can

swap them and decrease the average waiting time.

- Thus the lowest average waiting time occurs when there are no

long jobs right before short jobs.

- This is SJF.

- In the more realistic case where the scheduler switches to a new

process when the currently running process blocks (say for I/O), we

should call the policy shortest next-CPU-burst first.

- The difficulty is predicting the future (i.e., knowing in advance

the time required for the job or next-CPU-burst).
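
The swapping argument for the static case can be checked by brute force on a small example. This sketch assumes all jobs are available at time 0 and run to completion; the job lengths used in the usage note are made up.

```python
from itertools import permutations


def average_wait(run_times):
    """Average waiting time when jobs run to completion in the given order."""
    waited = 0
    clock = 0
    for t in run_times:
        waited += clock      # this job waited for every job scheduled before it
        clock += t
    return waited / len(run_times)


def best_average_wait(run_times):
    """Smallest average waiting time over every possible ordering."""
    return min(average_wait(order) for order in permutations(run_times))
```

For jobs of length 8, 4, 2, and 1, running them in the order given yields an average wait of 8.5, while shortest-first yields 2.75, which is also the minimum that `best_average_wait` finds over all 24 orderings.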

Preemptive Shortest Job First (PSPN, SRT, PSJF/SRTF, --)

Preemptive version of above

- Permit a process that enters the ready list to preempt the running

process if the time for the new process (or for its next burst) is

less than the remaining time for the running process (or for

its current burst).

- It will never happen that a process in the ready list

will require less time than the remaining time for the currently

running process. Why?

Ans: When the process joined the ready list it would have started

running if the current process had more time remaining. Since

that didn't happen the current job had less time remaining and now

it has even less.

- Can starve processes that require a long burst.

- This is fixed by the standard technique.

- What is that technique?

Ans: Priority aging.

Highest Penalty Ratio Next (HPRN, HRN, **, **)

Run the process that has been ``hurt'' the most.

- For each process, let r = T/t; where T is the wall clock time this

process has been in system and t is the running time of the process to

date.

- If r=5, then the job has been running 1/5 of the time it has been

in the system.

- We call r the penalty ratio and run the process having

the highest r value.

- HPRN is normally defined to be non-preemptive (i.e., the system

only checks r when a burst ends), but there is a preemptive analogue.

- Do not worry about a process that just enters the system (its

ratio is undefined).

- When putting a process into the run state, compute the time at

which it will no longer have the highest ratio and set a timer.

- When a process is moved into the ready state, compute its ratio

and preempt if needed.

- HRN stands for highest response ratio next and means the same thing.

- This policy is another example of priority scheduling
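
The selection rule is short enough to write out. A minimal sketch, assuming the non-preemptive form and assuming every process in the list has already run for a nonzero time (a brand-new process has an undefined ratio and is left out); the tuple layout and function names are invented for the example.

```python
def penalty_ratio(now, arrival, cpu_used):
    """r = T/t: wall clock time in the system over running time to date."""
    return (now - arrival) / cpu_used


def pick_next(now, ready):
    """Return the name of the ready process with the highest penalty ratio.

    ready: list of (name, arrival_time, cpu_time_used) with cpu_time_used > 0.
    """
    return max(ready, key=lambda p: penalty_ratio(now, p[1], p[2]))[0]
```

At time 20, with A (arrived at 0, ran 10), B (arrived at 10, ran 2), and C (arrived at 15, ran 4), the ratios are 2, 5, and 1.25, so B has been hurt the most and runs next.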

Multilevel Queues (**, **, MLQ, **)

Put different classes of processes in different queues

- Processes do not move from one queue to another.

- Can have different policies on the different queues.

For example, might have a background (batch) queue that is FCFS and one or

more foreground queues that are RR.

- Must also have a policy among the queues.

For example, might have two queues, foreground and background, and give

the first absolute priority over the second

- Might apply aging to prevent background starvation

- But might not, i.e., no guarantee of service for background

processes. View a background process as a ``cycle soaker''.

Multilevel Feedback Queues (FB, MFQ, MLFBQ, MQ)

Many queues and processes move from queue to queue in an attempt to

dynamically separate ``batch-like'' from interactive processes.

- Run processes from the highest priority nonempty queue in a RR manner.

- When a process uses its full quantum (looks like a batch process),

move it to a lower priority queue.

- When a process doesn't use a full quantum (looks like an interactive

process), move it to a higher priority queue.

- A long process with (perhaps spurious) I/O will remain

in the upper queues.

- Might have the bottom queue FCFS

- Many variants

For example, might let a process stay in top queue 1 quantum, next queue 2

quanta, next queue 4 quanta (i.e. return a process to the rear of the

same queue it was in if the quantum expires).
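
The queue-movement rule can be stated in a few lines. This is a sketch of one possible variant, not the definition of the policy: it assumes level 0 is the highest priority queue, that a process moves exactly one level at a time, and that the time allowed at each level doubles (1, 2, 4, ...) as in the example above. The function names are hypothetical.

```python
def next_level(level, used_full_quantum, num_levels):
    """Queue a process joins after its time slice ends (0 = highest priority)."""
    if used_full_quantum:                     # looks batch-like: demote
        return min(level + 1, num_levels - 1)
    return max(level - 1, 0)                  # looks interactive: promote


def quantum_for(level, base=1):
    """Time allowed per turn at a level: 1, 2, 4, ... base quanta."""
    return base * 2 ** level
```

A process that keeps using its full allotment drifts to the bottom queue and stays there, while one that blocks early climbs back toward level 0.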

Theoretical Issues

Considerable theory has been developed.

- NP completeness results abound.

- Much work in queuing theory to predict performance.

- Not covered in this course.

Medium Term scheduling

Decisions made at a coarser time scale.

- Called two-level scheduling by Tanenbaum

- Suspend (swap out) some process if memory is over-committed

- Criteria for choosing a victim

- How long since previously suspended

- How much CPU time used recently

- How much memory does it use

- External priority (pay more, get swapped out less)

- We will discuss medium term scheduling again next chapter (memory

management).

Long Term Scheduling

- ``Job scheduling''. Decide when to start jobs (i.e., do not

necessarily start them when submitted).

- Force user to log out and/or block logins if over-committed.

- CTSS (an early time sharing system at MIT) did this to ensure

decent interactive response time.

- Unix does this if out of processes (i.e., out of process table entries)

- ``LEM jobs during the day'' (Grumman).

- Some supercomputer sites.

================ Start Lecture #5

================

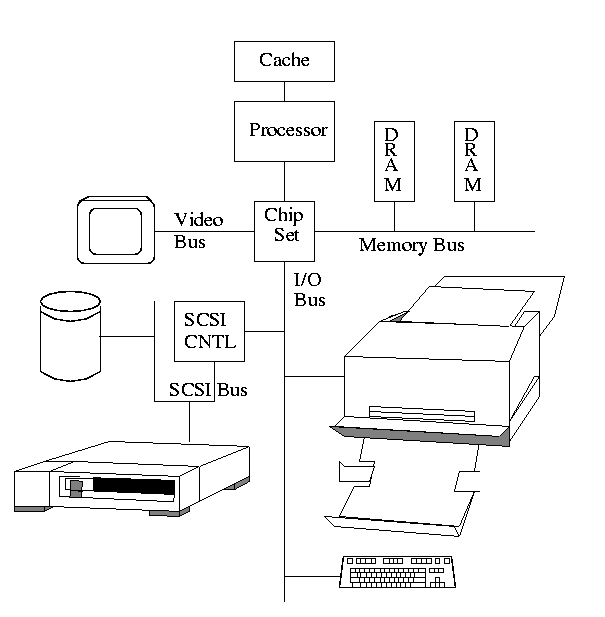

Chapter 3: Memory Management

Also called storage management or

space management.

Memory management must deal with the storage

hierarchy present in modern machines.

- Registers, cache, central memory, disk, tape (backup)

- Move data from level to level of the hierarchy.

- How should we decide when to move data up to a higher level?

- Fetch on demand (e.g. demand paging, which is dominant now).

- Prefetch

- Read-ahead for file I/O.

- Large cache lines and pages.

- Extreme example. Entire job present whenever running.

We will see in the next few lectures that there are three independent

decisions:

- Segmentation (or no segmentation)

- Paging (or no paging)

- Fetch on demand (or no fetching on demand)

Memory management implements address translation.

- Convert virtual addresses to physical addresses

- Also called logical to real address translation.

- A virtual address is the address expressed in

the program.

- A physical address is the address understood

by the computer.

- The translation from virtual to physical addresses is performed by

the Memory Management Unit (MMU).

- Another example of address translation is the conversion of

relative addresses to absolute addresses by the linker.

- The translation might be trivial (e.g., the identity) but not in a modern

general purpose OS.

- The translation might be difficult (i.e., slow).

- Often includes additions, shifts, and masks; not too bad.

- Often includes memory references.

- VERY serious.

- Solution is to cache translations in a Translation

Lookaside Buffer (TLB). Sometimes called a

translation buffer (TB).

Homework: 7.

When is address translation performed?

- At compile time

- Primitive.

- Compiler generates physical addresses.

- Requires knowledge of where the compilation unit will be loaded.

- Rarely used (MSDOS .COM files).

- At link-edit time (the ``linker lab'')

- Compiler

- Generates relocatable addresses for each compilation unit.

- References external addresses.

- Linkage editor

- Converts the relocatable addr to absolute.

- Resolves external references.

- Misnamed ld by unix.

- Also converts virtual to physical addresses by knowing where the

linked program will be loaded. Linker lab ``does'' this, but

it is trivial since we assume the linked program will be

loaded at 0.

- Loader is simple.

- Hardware requirements are small.

- A program can be loaded only where specified and

cannot move once loaded.

- Not used much any more.

- At load time

- Similar to at link-edit time, but do not fix

the starting address.

- Program can be loaded anywhere.

- Program can move but cannot be split.

- Need modest hardware: base/limit registers.

- Loader sets the base/limit registers.

- At execution time

- Addresses translated dynamically during execution.

- Hardware needed to perform the virtual to physical address

translation quickly.

- Currently dominates.

- Much more information later.
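
The base/limit scheme mentioned above is the simplest translation to write down. A minimal sketch, assuming one contiguous region per job and assuming the hardware checks the limit before adding the base; the exception name and the addresses in the usage note are made up.

```python
class AddressFault(Exception):
    """Raised when a virtual address falls outside the job's region."""


def translate(virtual, base, limit):
    """Map a virtual address to a physical one using base/limit registers."""
    if not 0 <= virtual < limit:
        raise AddressFault(f"virtual address {virtual:#x} outside limit {limit:#x}")
    return base + virtual
```

With base 0x4000 and limit 0x1000, virtual address 0x123 maps to 0x4123. If the job is later moved so that base becomes 0x9000, the same virtual address maps to 0x9123 without any change to the program, which is why the job can move but cannot be split.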

Extensions

- Dynamic Loading

- When executing a call, check if module is loaded.

- If not loaded, call linking loader to load it and update

tables.

- Slows down calls (indirection) unless you rewrite code

dynamically.

- Not used much.

- Dynamic Linking

- The traditional linking described above is today often called

static linking.

- With dynamic linking, frequently used routines are not linked

into the program. Instead, just a stub is linked.

- When the routine is called, the stub checks to see if the

real routine is loaded (it may have been loaded by

another program).

- If not loaded, load it.

- If already loaded, share it. This needs some OS

help so that different jobs sharing the library don't

overwrite each other's private memory.

- Advantages of dynamic linking.

- Saves space: Routine only in memory once even when used

many times.

- Bug fix to dynamically linked library fixes all applications

that use that library, without having to

relink the application.

- Disadvantages of dynamic linking.

- New bugs in dynamically linked library infect all

applications.

- Applications ``change'' even when they haven't changed.

Note: I will place ** before each memory management

scheme.

3.1: Memory management without swapping or paging

Entire process remains in memory from start to finish.

The sum of the memory requirements of all jobs in the system cannot

exceed the size of physical memory.

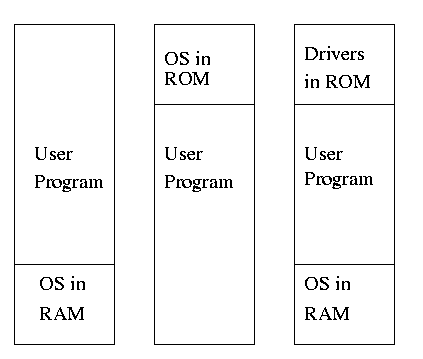

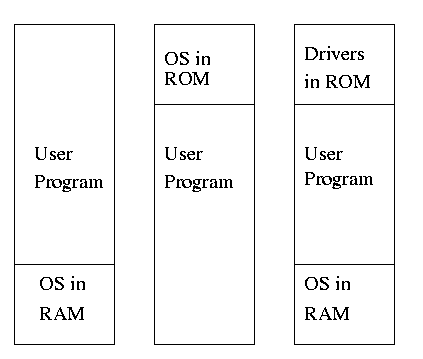

** 3.1.1: Monoprogramming without swapping or paging (Single User)

The ``good old days'' when everything was easy.

- No address translation done by the OS (i.e., address translation is

not performed dynamically during execution).

- Either reload the OS for each job (or don't have an OS, which is almost

the same), or protect the OS from the job.

- One way to protect (part of) the OS is to have it in ROM.

- Of course, must have the data in RAM.

- Can have a separate OS address space only accessible in

supervisor mode.

- Might just put some drivers in ROM (BIOS).

- The user employs overlays if the memory needed

by a job exceeds the size of physical memory.

- Programmer breaks program into pieces.

- A ``root'' piece is always memory resident.

- The root contains calls to load and unload various pieces.

- Programmer's responsibility to ensure that a piece is already

loaded when it is called.

- No longer used, but we couldn't have gotten to the moon in the

60s without it (I think).

- Overlays have been replaced by dynamic address translation and

other features (e.g., demand paging) that have the system support

logical address sizes greater than physical address sizes.

- Fred Brooks (leader of IBM's OS/360 project and author of ``The

Mythical Man-Month'') remarked that the OS/360 linkage editor was

terrific, especially in its support for overlays, but by the time

it came out, overlays were no longer used.

3.1.2: Multiprogramming and Memory Usage

Goal is to improve CPU utilization, by overlapping CPU and I/O

- Consider a job that is unable to compute (i.e., it is waiting for

I/O) a fraction p of the time.

- Then, with monoprogramming, the CPU utilization is 1-p.

- Note that p is often > .5 so CPU utilization is poor.

- But, if the probability that a

job is waiting for I/O is p and n jobs are in memory, then the

probability that all n are waiting for I/O is approximately p^n.

- So, with a multiprogramming level (MPL) of n,

the CPU utilization is approximately 1-p^n.

- If p=.5 and n=4, then 1-p^n = 15/16, which is much better than

1/2, which would occur for monoprogramming (n=1).

- This is a crude model, but it is correct that increasing MPL does

increase CPU utilization up to a point.

- The limitation is memory, which is why we discuss it here

instead of process management. That is, we must have many jobs

loaded at once, which means we must have enough memory for them.

There are other issues as well and we will discuss them.

- Some of the CPU utilization is time spent in the OS executing

context switches so the gains are not as great as the crude model predicts.
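
The crude model is easy to tabulate. The sketch below simply evaluates 1-p^n; the helper `min_mpl` and the 90% target in the usage note are illustrative additions, and the model ignores context switch overhead exactly as noted above.

```python
def cpu_utilization(p, n):
    """Crude model: probability that not all n jobs are waiting for I/O."""
    return 1 - p ** n


def min_mpl(p, target):
    """Smallest multiprogramming level whose modeled utilization reaches target.

    Assumes 0 <= p < 1 and 0 <= target < 1, so the loop terminates.
    """
    n = 1
    while cpu_utilization(p, n) < target:
        n += 1
    return n
```

With p=.5, `cpu_utilization(.5, 4)` is 15/16 as computed above. With a more I/O-bound p=.8, the model says 11 jobs must be in memory before utilization reaches 90%, which shows how quickly the memory requirement grows.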

Homework: 1, 3.

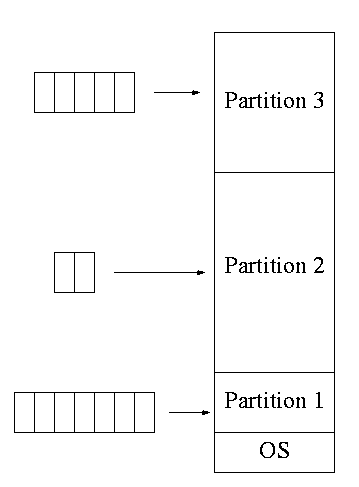

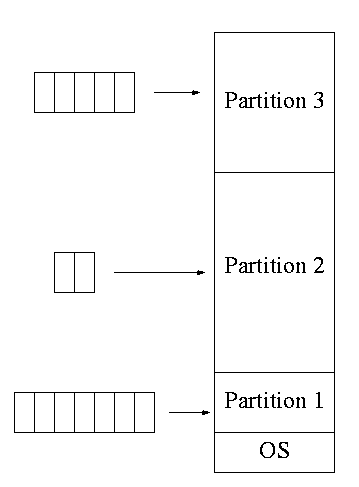

3.1.3: Multiprogramming with fixed partitions

- This was used by IBM for system 360 OS/MFT (multiprogramming with a

fixed number of tasks).

- Can have a single input queue instead of one for each partition.

- So that if there are no big jobs can use big partition for

little jobs.

- But I don't think IBM did this.

- Can think of the input queue(s) as the ready list(s) with a

scheduling policy of FCFS in each partition.

- The partition boundaries are not movable (must reboot to

move a job).

- MFT can have large internal fragmentation,

i.e., wasted space inside a region

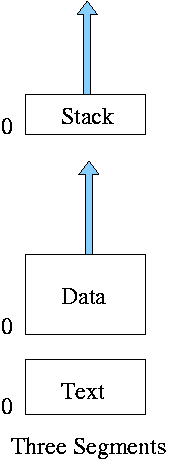

- Each process has a single ``segment'' (we will discuss segments later)

- No sharing between processes.

- No dynamic address translation.

- At load time must ``establish addressability''.

- i.e. must set a base register to the location at which the

process was loaded (the bottom of the partition).

- The base register is part of the programmer visible register set.

- This is an example of address translation during load time.

- Also called relocation.

- Storage keys are adequate for protection (IBM method).

- Alternative protection method is base/limit registers.

- An advantage of base/limit is that it is easier to move a job.

- But MFT didn't move jobs so this disadvantage of storage keys is moot.

- Tanenbaum says jobs were ``run to completion''. This must be

wrong as that would mean monoprogramming.

- He probably means that jobs are not swapped out and each queue is FCFS

without preemption.

3.2: Swapping

Moving entire processes between disk and memory is called

swapping.

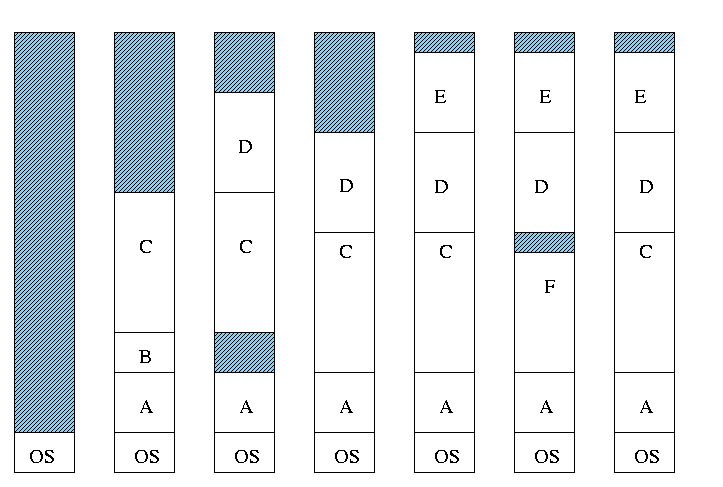

3.2.1: Multiprogramming with variable partitions

- Both the number and size of the partitions change with time.

- IBM OS/MVT (multiprogramming with a varying number of tasks).

- Also early PDP-10 OS.

- Job still has only one segment (as with MFT) but now can be of any

size up to the size of the machine and can change with time.

- A single ready list.

- Job can move (might be swapped back in a different place).

- This is dynamic address translation (during run time).

- Must perform an addition on every memory reference (i.e. on every

address translation) to add the start address of the partition.

- Called a DAT (dynamic address translation) box by IBM.

- Eliminates internal fragmentation.

- Find a region the exact right size (leave a hole for the

remainder).

- Not quite true, can't get a piece with 10A755 bytes. Would

get say 10A760. But internal fragmentation is much

reduced compared to MFT. Indeed, we say that internal

fragmentation has been eliminated.

- Introduces external fragmentation, i.e., holes

outside any region.

- What do you do if no hole is big enough for the request?

- Can compactify

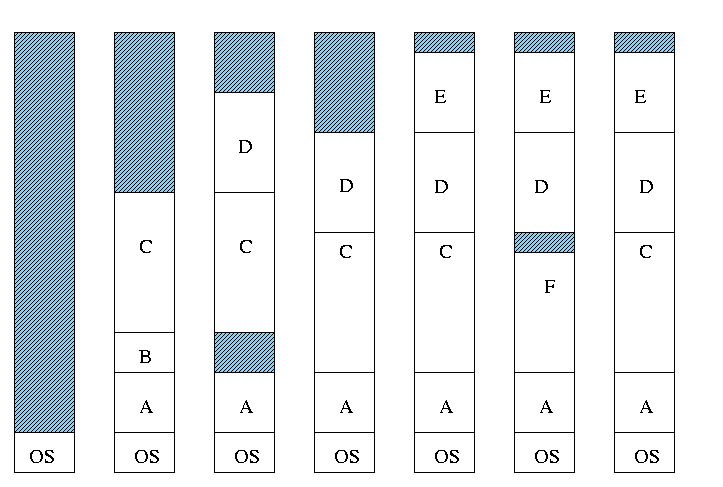

- Transition from bar 3 to bar 4 in diagram below.

- This is expensive.

- Not suitable for real time (MIT ping pong).

- Can swap out one process to bring in another

- Bars 5-6 and 6-7 in diagram

Homework: 4

- There are more processes than holes. Why?

- Because next to a process there might be a process or a hole

but next to a hole there must be a process

- So can have ``runs'' of processes but not of holes

- Above actually shows that there are about twice as many

processes as holes.

- Base and limit registers are used

- Storage keys not good since compactifying would require

changing many keys.

- Storage keys might need a fine granularity to permit the

boundaries to move by small amounts. Hence many keys would need to be

changed.

MVT Introduces the ``Placement Question'', which hole (partition)

to choose

- Best fit, worst fit, first fit, circular first fit, quick fit, Buddy

- Best fit doesn't waste big holes, but does leave slivers and

is expensive to run.

- Worst fit avoids slivers, but eliminates all big holes so a

big job will require compaction. Even more expensive than best

fit (best fit stops if it finds a perfect fit).

- Quick fit keeps lists of some common sizes (but has other

problems, see Tanenbaum).

- Buddy system

- Round request to next highest power of two (causes

internal fragmentation).

- Look in list of blocks this size (as with quick fit).

- If list empty, go higher and split into buddies.

- When returning coalesce with buddy.

- Do splitting and coalescing recursively, i.e. keep

coalescing until can't and keep splitting until successful.

- See Tanenbaum for more details (or an algorithms book).

- A current favorite is circular first fit (also known as next fit)

- Use the first hole that is big enough (first fit) but start

looking where you left off last time.

- Doesn't waste time constantly trying to use small holes that

have failed before, but does tend to use many of the big holes,

which can be a problem.

- Buddy comes with its own implementation. How about the others?

- Bit map

- Only question is how much memory does one bit represent.

- Big: Serious internal fragmentation

- Small: Many bits to store and process

- Linked list

- Each item on list says whether Hole or Process, length,

starting location

- The items on the list are not taken from the memory to be

used by processes

- Keep in order of starting address

- Double linked

- Boundary tag

- Knuth

- Use the same memory for list items as for processes

- Don't need an entry in linked list for blocks in use, just

the avail blocks are linked

- For the blocks currently in use, just need a hole/process bit at

each end and the length. Keep this in the block itself.

- See Knuth, The Art of Computer Programming, vol. 1.
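
The placement policies above can be sketched roughly as follows. This is only an illustration: the function names and the representation of memory as a list of (start, length) holes kept in address order are my assumptions for the example, not anything taken from Tanenbaum or from a real allocator.

```python
def first_fit(holes, size):
    """Index of the first hole that is big enough, or None."""
    for i, (_start, length) in enumerate(holes):
        if length >= size:
            return i
    return None


def next_fit(holes, size, last):
    """Circular first fit: start the search at index `last` (where the
    previous search stopped) and wrap around once."""
    n = len(holes)
    for k in range(n):
        i = (last + k) % n
        if holes[i][1] >= size:
            return i
    return None


def best_fit(holes, size):
    """Smallest hole that is big enough; stops early on a perfect fit."""
    best = None
    for i, (_start, length) in enumerate(holes):
        if length == size:
            return i
        if length > size and (best is None or length < holes[best][1]):
            best = i
    return best


def worst_fit(holes, size):
    """Largest hole, provided it is big enough; always scans the whole list."""
    if not holes:
        return None
    i = max(range(len(holes)), key=lambda j: holes[j][1])
    return i if holes[i][1] >= size else None


def allocate(holes, i, size):
    """Carve `size` units off the front of hole i and return the start address."""
    start, length = holes[i]
    if length == size:
        del holes[i]                      # perfect fit: the hole disappears
    else:
        holes[i] = (start + size, length - size)   # a smaller hole (maybe a sliver) remains
    return start


holes = [(0, 5), (20, 12), (50, 8), (90, 30)]
```

For a request of size 8 on this list, first fit picks the hole at 20, best fit the hole at 50 (a perfect fit), and worst fit the hole at 90.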

Homework: 2, 5.
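
For the buddy system described above, here is a toy sketch. It assumes the managed region starts at address 0 and has size 2**max_order units, so that the buddy of a block is found by flipping one address bit. The class and method names are made up for this example.

```python
class Buddy:
    """Toy buddy allocator over a region of 2**max_order units starting at 0."""

    def __init__(self, max_order):
        self.max_order = max_order
        # free[k] holds the start addresses of the free blocks of size 2**k
        self.free = {k: set() for k in range(max_order + 1)}
        self.free[max_order].add(0)

    def alloc(self, size):
        """Return (address, order) for a block of at least `size` units, or None."""
        order = max(size - 1, 0).bit_length()    # round up to a power of two
        k = order
        while k <= self.max_order and not self.free[k]:
            k += 1                                # list empty: go higher
        if k > self.max_order:
            return None                           # nothing big enough
        addr = min(self.free[k])
        self.free[k].remove(addr)
        while k > order:                          # keep splitting into buddies
            k -= 1
            self.free[k].add(addr + (1 << k))     # upper half goes on the free list
        return addr, order

    def release(self, addr, order):
        """Free a block, coalescing with its buddy for as long as possible."""
        while order < self.max_order:
            buddy = addr ^ (1 << order)           # buddy differs in exactly one bit
            if buddy not in self.free[order]:
                break                             # buddy in use (or split): stop
            self.free[order].remove(buddy)
            addr = min(addr, buddy)
            order += 1
        self.free[order].add(addr)
```

The caller must remember the order returned by `alloc` and hand it back to `release`; a real implementation would record it in the block itself or in a side table.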

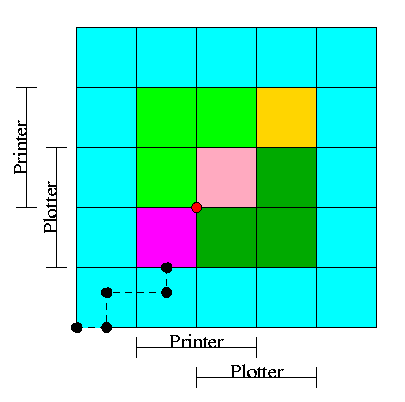

MVT Also introduces the ``Replacement Question'', which victim to

swap out

We will study this question more when we discuss

demand paging

Considerations in choosing a victim

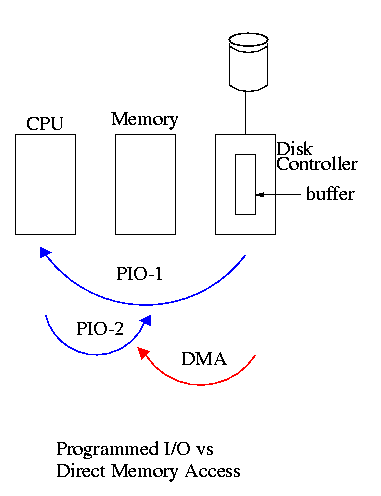

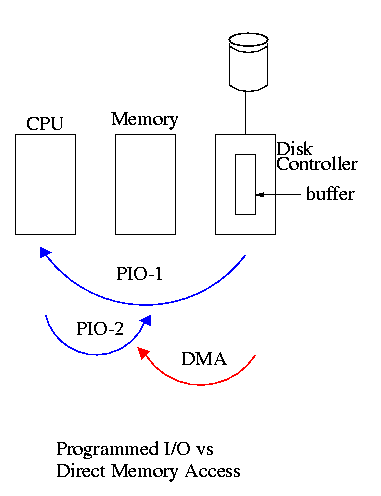

- Cannot replace a job that is pinned,

i.e. whose memory is tied down. For example, if Direct Memory

Access (DMA) I/O is scheduled for this process, the job is pinned

until the DMA is complete.

- Victim selection is a medium term scheduling decision

- Job that has been in a wait state for a long time is a good candidate.

- Often choose as a victim a job that has been in memory for a long

time.

- Another point is how long should it stay swapped out.

- For demand paging, where swapping out a page is not as drastic as

swapping out a job, choosing the victim is an important memory

management decision and we shall study several policies.

NOTEs:

- So far the schemes presented have had two properties:

- Each job is stored contiguously in memory. That is, the job is

contiguous in physical addresses.

- Each job cannot use more memory than exists in the system. That

is, the virtual address space cannot exceed the physical address

space.

- Tanenbaum now attacks the second item. I wish to do both and start

with the first

- Tanenbaum (and most of the world) uses the term ``paging'' to mean

what I call demand paging. This is unfortunate as it mixes together

two concepts

- Paging (dicing the address space) to solve the placement

problem and essentially eliminate external fragmentation.

- Demand fetching, to permit the total memory requirements of

all loaded jobs to exceed the size of physical memory.

- Tanenbaum (and most of the world) uses the term virtual memory as

a synonym for demand paging. Again I consider this unfortunate.

- Demand paging is a fine term and is quite descriptive

- Virtual memory ``should'' be used in contrast with physical

memory to describe any virtual to physical address translation.

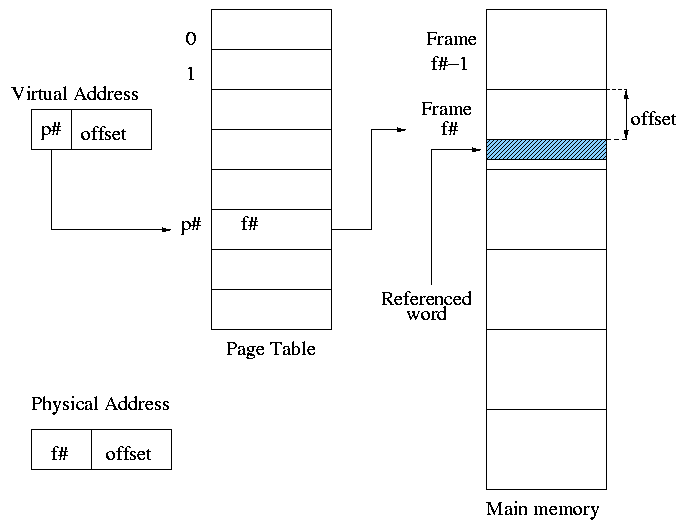

** (non-demand) Paging

Simplest scheme to remove the requirement of contiguous physical

memory.

- Chop the program into fixed size pieces called

pages (invisible to the programmer).

- Chop the real memory into fixed size pieces called page

frames or simply frames.

- Size of a page (the page size) = size of a frame (the frame size).

- Sprinkle the pages into the frames.

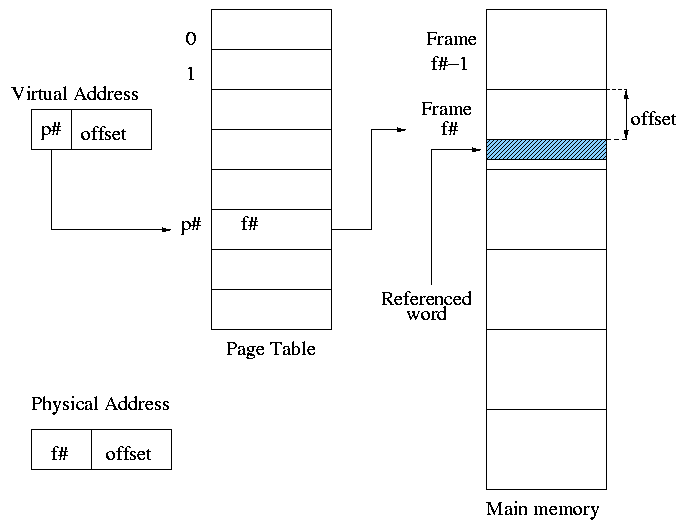

- Keep a table (called the page table) having an

entry for each page. The page table entry or PTE for

page p contains the number of the frame f that contains page p.

Example: Assume a decimal machine with

page size = frame size = 1000.

Assume PTE 3 contains 459.

Then virtual address 3372 corresponds to physical address 459372.
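
The arithmetic in this example can be written out as a short sketch. The function name and the use of a dictionary for the page table are assumptions for illustration only.

```python
PAGE_SIZE = 1000   # decimal machine from the example: page size = frame size


def translate(vaddr, page_table, page_size=PAGE_SIZE):
    """Map a virtual address to a physical address with a one-level page table."""
    page, offset = divmod(vaddr, page_size)   # (p#, off)
    frame = page_table[page]                  # the PTE for page p gives frame f
    return frame * page_size + offset         # (f#, off)


page_table = {3: 459}                         # PTE 3 contains 459
print(translate(3372, page_table))            # 459372
```

On a binary machine the page size is a power of two, so the division and remainder become a shift and a mask on the address bits.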

Properties of (non-demand) paging.

- Entire job must be memory resident to run.

- No holes, i.e. no external fragmentation.

- If there are 50 frames available and the page size is 4KB then a

job requiring <= 200KB will fit, even if the available frames are

scattered over memory.

- Hence (non-demand) paging is useful.

- Introduces internal fragmentation approximately equal to 1/2 the

page size for every process (really every segment).

- Can have a job unable to run due to insufficient memory and

have some (but not enough) memory available. This is not

called external fragmentation since it is not due to memory being fragmented.

- Eliminates the placement question. All pages are equally

good since don't have external fragmentation.

- Replacement question remains.

- Since page boundaries occur at ``random'' points and can change from

run to run (the page size can change with no effect on the

program--other than performance), pages are not appropriate units of

memory to use for protection and sharing. This is discussed further

when we introduce segmentation.
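
The numbers in the properties above (50 frames of 4KB hold a 200KB job; about half a page is wasted per process) can be checked with a small sketch. The helper names are mine, and the half-page figure assumes the size of the last, partly filled page is equally likely to be anything from 1 byte to a full page.

```python
KB = 1024
PAGE = 4 * KB


def frames_needed(job_bytes, page_size=PAGE):
    """Number of frames a job occupies (ceiling division)."""
    return -(-job_bytes // page_size)


def internal_fragmentation(job_bytes, page_size=PAGE):
    """Bytes wasted in the job's last frame."""
    return frames_needed(job_bytes, page_size) * page_size - job_bytes


def average_waste(page_size=PAGE):
    """Mean waste when the last page holds 1..page_size bytes, each equally likely."""
    total = sum(page_size - used for used in range(1, page_size + 1))
    return total / page_size


print(frames_needed(200 * KB))            # 50
print(internal_fragmentation(198 * KB))   # 2048
print(average_waste())                    # 2047.5
```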

Homework: 13

================ Start Lecture #6

================

Notes:

Lab 2 due 6 November, but lab 3 will be handed out

30 October, so you may want to finish lab 2 early. Lab 2 is being

handed out 16 October and is available on the web.

It is NOW DEFINITE that on Monday 23 Oct, my office

hours will have to move from 2:30-3:30 to 1:30-2:30 due to a

departmental committee meeting.

End of Notes:

Address translation

- Each memory reference turns into 2 memory references

- Reference the page table

- Reference central memory

- This would be a disaster!

- Hence the MMU caches page#-->frame# translations. This cache is kept

near the processor and can be accessed rapidly.

- This cache is called a translation lookaside buffer (TLB) or

translation buffer (TB).

- For the above example, after referencing virtual address 3372,

entry 3 in the TLB would contain 459.

- Hence a subsequent access to virtual address 3881 would be

translated to physical address 459881 without a memory reference.
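
A minimal sketch of the TLB idea, reusing the decimal example. It assumes an unbounded dictionary as the TLB and counts page table lookups instead of timing them; real TLBs are small associative hardware with their own replacement policy. The class and field names are hypothetical.

```python
class MMU:
    """Toy MMU: a one-level page table in memory plus a TLB caching page# --> frame#."""

    def __init__(self, page_table, page_size=1000):
        self.page_table = page_table
        self.page_size = page_size
        self.tlb = {}            # page# -> frame#, kept "near the processor"
        self.table_refs = 0      # memory references made to the page table

    def translate(self, vaddr):
        page, offset = divmod(vaddr, self.page_size)
        if page not in self.tlb:                     # TLB miss: consult the page table
            self.table_refs += 1
            self.tlb[page] = self.page_table[page]
        return self.tlb[page] * self.page_size + offset


mmu = MMU({3: 459})
print(mmu.translate(3372), mmu.table_refs)   # 459372 1
print(mmu.translate(3881), mmu.table_refs)   # 459881 1
```

The second translation hits in the TLB, so the page table reference count stays at 1.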

Choice of page size is discussed below.

Homework: 8.

3.3: Virtual Memory (meaning fetch on demand)

Idea is that a program can execute even if only the active portion of its

address space is memory resident. That is, swap in and swap out

portions of a program. In a crude sense this can be called

``automatic overlays''.

Advantages

- Can run a program larger than the total physical memory.

- Can increase the multiprogramming level since the total size of

the active, i.e. loaded, programs (running + ready + blocked) can

exceed the size of the physical memory.

- Since some portions of a program are rarely if ever used, it is

an inefficient use of memory to have them loaded all the time. Fetch on

demand will not load them if not used and will unload them during

replacement if they are not used for a long time (hopefully).

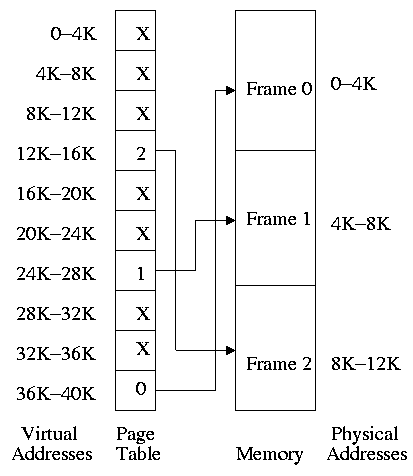

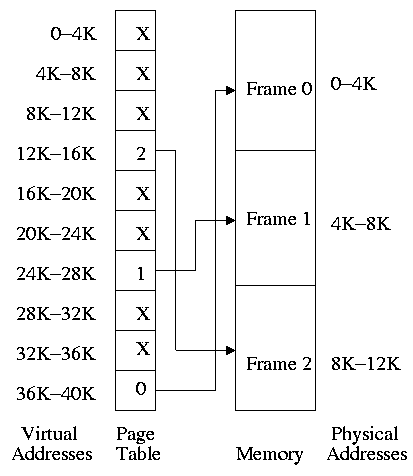

3.3.1: Paging (meaning demand paging)

Fetch pages from disk to memory when they are referenced, with a hope

of getting the most actively used pages in memory.

- Very common: dominates modern operating systems

- Started by the Atlas system at Manchester University in the 60s

(Fotheringham).

- Each PTE continues to have the frame number if the page is

loaded.

- But what if the page is not loaded (exists only on disk)?

- The PTE has a flag indicating if it is loaded (can think of

the X in the diagram on the right as indicating that this flag is

not set).

- If not loaded, the location on disk could be kept in the PTE

(not shown in the diagram). But normally it is not

(discussed below).

- When a reference is made to a non-loaded page (sometimes

called a non-existent page, but that is a bad name), the system

has a lot of work to do. We give more details

below.

- Choose a free frame if one exists

- If not

- Choose a victim frame.

- More later on how to choose a victim.

- Called the replacement question

- Write victim back to disk if dirty.

- Update the victim PTE to show that it is not loaded

and where on disk it has been put (perhaps the disk

location is already there).

- Copy the referenced page from disk to the free frame.

- Update the PTE of the referenced page to show that it is

loaded and give the frame number.

- Do the standard paging address translation (p#,off)-->(f#,off).

- Really not done quite this way

- There is ``always'' a free frame because ...

- There is a daemon active that checks the number of free frames

and if this is too low, chooses victims and ``pages them out''

(writing them back to disk if dirty).

- Choice of page size is discussed below.
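
The page fault steps listed above can be sketched as follows. This is a simulation under loud assumptions: FIFO is used only as a stand-in for the victim choice (replacement policies come later), disk transfers are represented by counters, and all class and field names are invented for the example. It follows the simple "choose a free frame, else a victim" version, not the daemon-based variant.

```python
class PTE:
    def __init__(self):
        self.loaded = False   # the flag saying whether the page is in memory
        self.frame = None     # frame number, meaningful only if loaded
        self.dirty = False    # set when the page is written


class DemandPager:
    def __init__(self, num_pages, num_frames, page_size=1000):
        self.page_size = page_size
        self.ptes = [PTE() for _ in range(num_pages)]
        self.free_frames = list(range(num_frames))
        self.resident = []    # loaded pages, oldest first (FIFO stand-in)
        self.faults = 0
        self.writebacks = 0   # stands in for writing dirty victims to disk

    def _handle_fault(self, page):
        self.faults += 1
        if self.free_frames:                       # choose a free frame if one exists
            frame = self.free_frames.pop(0)
        else:
            victim = self.resident.pop(0)          # choose a victim (FIFO assumed)
            vpte = self.ptes[victim]
            if vpte.dirty:
                self.writebacks += 1               # write victim back if dirty
            frame = vpte.frame
            vpte.loaded, vpte.frame, vpte.dirty = False, None, False
        # (the referenced page would be copied from disk into `frame` here)
        pte = self.ptes[page]
        pte.loaded, pte.frame = True, frame        # update the PTE of the referenced page
        self.resident.append(page)

    def access(self, vaddr, write=False):
        page, offset = divmod(vaddr, self.page_size)
        pte = self.ptes[page]
        if not pte.loaded:
            self._handle_fault(page)
        if write:
            pte.dirty = True
        return pte.frame * self.page_size + offset  # (p#,off)-->(f#,off)
```

With 2 frames and 4 pages, touching pages 0, 1, 2, 3 in order causes four faults; only victims that were written since being loaded cost a write to disk.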

Homework: 11.

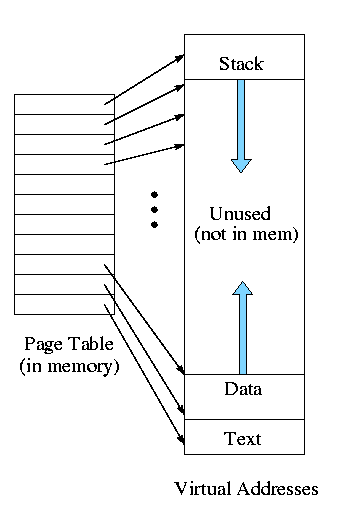

3.3.2: Page tables

A discussion of page tables is also appropriate for (non-demand)

paging, but the issues are more acute with demand paging since the

tables can be much larger. Why?

- The total size of the active processes is no longer limited to

the size of physical memory. Since the total size of the processes is

greater, the total size of the page tables is greater and hence

concerns over the size of the page table are more acute.

- With demand paging an important question is the choice of a victim

page to page out. Data in the page table

can be useful in this choice.

We must be able to access the page table very quickly since it is

needed for every memory access.

Unfortunate laws of hardware.

- Big and fast are essentially incompatible.

- Big and fast and low cost is hopeless.

So we can't just say, put the page table in fast processor registers,

and let it be huge, and sell the system for $1500.
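
To get a feel for "huge", here is a back-of-the-envelope sketch of the size of a one-level page table. The parameters (32-bit virtual addresses, 4KB pages, 4-byte PTEs, and the 48-bit variant) are assumptions chosen for illustration; they do not come from the text above.

```python
def page_table_bytes(address_bits, page_size, pte_bytes):
    """Size of a one-level page table with one PTE per virtual page."""
    num_pages = (1 << address_bits) // page_size
    return num_pages * pte_bytes


MB = 1 << 20
GB = 1 << 30
print(page_table_bytes(32, 4096, 4) // MB)   # 4   (MB per process)
print(page_table_bytes(48, 4096, 8) // GB)   # 512 (GB per process)
```

Even the 32-bit case is far more than fits in processor registers, and there is one such table per process.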

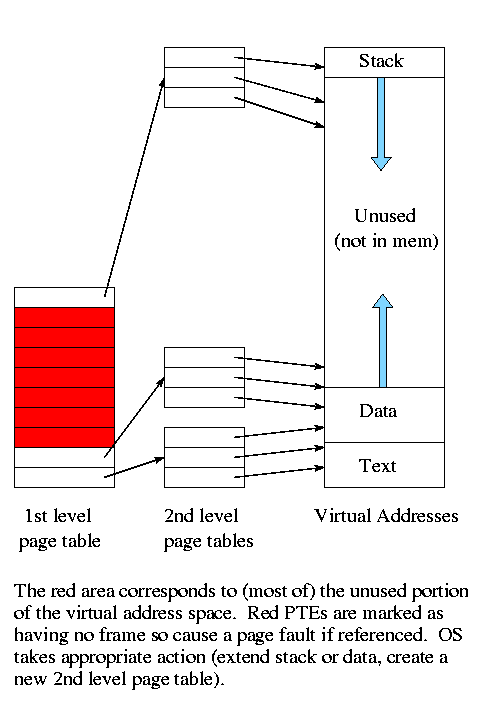

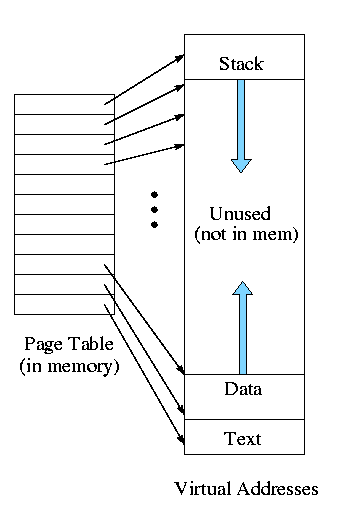

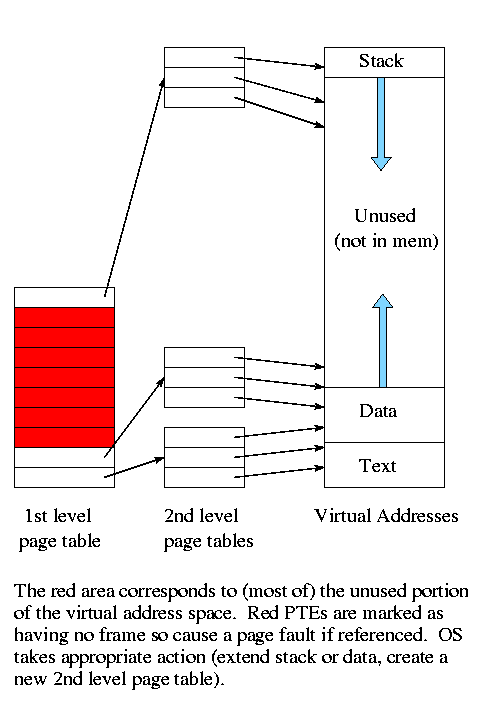

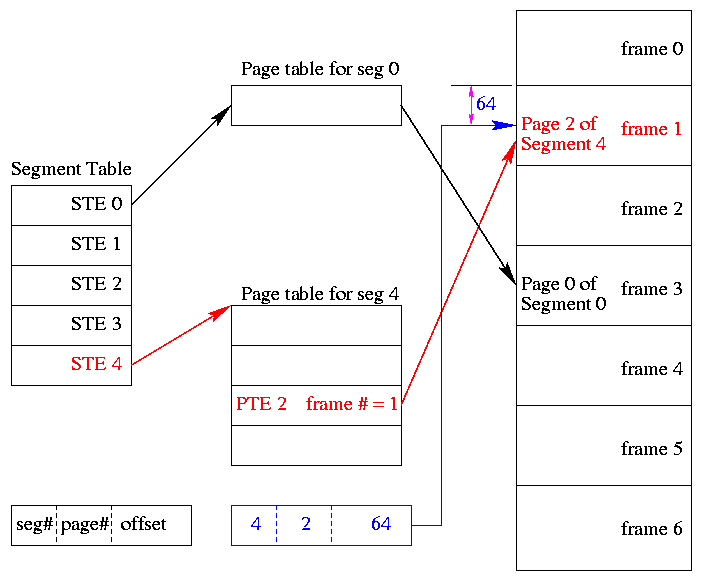

For now, put the (one-level) page table in main memory.